
The Critical Value of Telepathology in the COVID-19 Era

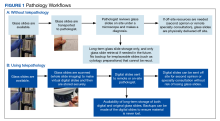

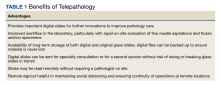

Advances in technology, including ubiquitous internet access and the capacity to transfer high-resolution representative images, have facilitated the adoption of telepathology by laboratories worldwide.1-5 Telepathology uses telecommunication links to transmit digital pathology images for primary diagnosis, quality assurance (QA), education, research, or second opinion diagnoses.3 These advances have culminated in US Food and Drug Administration (FDA) approvals of whole slide imaging (WSI) systems for surgical pathology slides: specifically, the Philips IntelliSite Digital Pathology Solution in 2017 and the Leica Aperio AT2 DX in 2020.6-8 However, the approvals do not include telecytology because the submitted systems lack z-stacking capability, that is, whole slide multiplanar scanning at different planes of focus.7

Long-term trends in pathology, specifically the slow decline in the number of practicing pathologists relative to the population served, together with the social distancing imperatives and disruptions of the COVID-19 pandemic, have made implementing telepathology pertinent to sustaining and improving pathology practice.8-10

Description and Definitions

The American Telemedicine Association (ATA) has defined the primary modes of telepathology: static image telepathology, robotic telepathology, video microscopy, WSI, and multimodality telepathology.2 WSI is particularly well suited to telepathology because digital slides can be viewed in high resolution at various magnifications. These image files can also be easily viewed by and shared with other observers, and they take less time to view than slides examined with a robotic microscope.3

Selection, Validation, and Implementation

WSI platforms vary across several parameters, including but not limited to batch scanning vs continuous or random-access processing, throughput volume capacity, scan speed, cost, manual vs automatic slide loading, image quality, slide capacity, flexibility for different slide sizes and features, telepathology capabilities once a slide is scanned, z-stacking, and regulatory approval status.8 Selection of a WSI device depends on need and cost. For example, frozen section use requires faster scanning speed but generally does not require a high-throughput scanner.
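As a rough illustration of how the selection parameters above might be weighed for one use case, the sketch below records a hypothetical subset of them and shortlists scanners for frozen section by filtering on cost and sorting by scan speed, ignoring throughput as the text suggests. The `ScannerSpec` fields, scanner names, and numbers are invented for illustration and do not describe real devices.

```python
from dataclasses import dataclass


@dataclass
class ScannerSpec:
    """Hypothetical subset of the WSI selection parameters listed above."""
    name: str
    scan_seconds_per_slide: float  # scan speed for a typical slide
    slide_capacity: int            # proxy for throughput; ignored for frozen section
    cost: float                    # purchase cost in arbitrary currency units
    z_stacking: bool


def shortlist_for_frozen_section(scanners: list[ScannerSpec],
                                 max_cost: float) -> list[ScannerSpec]:
    """Keep affordable scanners and order them fastest first.

    Frozen section favors scan speed over high throughput, so slide
    capacity plays no part in the ranking.
    """
    affordable = [s for s in scanners if s.cost <= max_cost]
    return sorted(affordable, key=lambda s: s.scan_seconds_per_slide)
```

A real selection would weigh many more of the listed parameters (image quality, z-stacking, regulatory status); reducing the decision to one sort key is a deliberate simplification.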

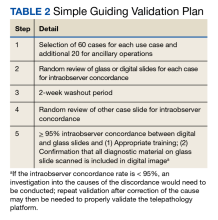

Validation of telepathology by the testing site demonstrates that the new system performs as expected for its intended clinical use before it is put into service and that the digital slides produced are acceptable for clinical diagnostic interpretation.11 The WSI validation guidelines established by the College of American Pathologists (CAP) are part of the published laboratory standard of care.11-13 Appropriate validation enables the benefits of telepathology while mitigating its risks.

There are 3 major CAP recommendations for validation. First, at least 60 cases should be included for each use case being validated, with 20 additional cases for relevant ancillary applications not included in the 60 cases. Second, diagnostic concordance (ideally ≥ 95%) between digital and glass slides should be established for the same observer. Third, there should be a 2-week washout period between viewing the digital and glass slides (Table 2).12,13
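The three recommendations above translate naturally into checks on a set of paired reads. The sketch below is a hypothetical helper, not a CAP tool: the `PairedRead` record and function names are assumptions, and concordance is computed by exact string match, whereas real validation studies typically adjudicate discordances by clinical significance.

```python
from dataclasses import dataclass
from datetime import date

# Thresholds as summarized in the CAP recommendations above.
MIN_CASES_PER_USE_CASE = 60
MIN_ANCILLARY_CASES = 20
TARGET_CONCORDANCE = 0.95
MIN_WASHOUT_DAYS = 14  # 2-week washout


@dataclass
class PairedRead:
    """One case read by the same pathologist on glass and on a digital slide."""
    case_id: str
    glass_diagnosis: str
    digital_diagnosis: str
    glass_read_on: date
    digital_read_on: date


def concordance(reads: list[PairedRead]) -> float:
    """Fraction of cases whose digital and glass diagnoses match exactly."""
    if not reads:
        raise ValueError("no paired reads")
    agree = sum(r.glass_diagnosis == r.digital_diagnosis for r in reads)
    return agree / len(reads)


def check_validation(reads: list[PairedRead], ancillary_cases: int = 0,
                     needs_ancillary: bool = False) -> list[str]:
    """Return unmet recommendations; an empty list means every check passed."""
    problems = []
    if len(reads) < MIN_CASES_PER_USE_CASE:
        problems.append(f"only {len(reads)} cases (need at least {MIN_CASES_PER_USE_CASE})")
    if needs_ancillary and ancillary_cases < MIN_ANCILLARY_CASES:
        problems.append(f"only {ancillary_cases} ancillary cases (need at least {MIN_ANCILLARY_CASES})")
    if reads and concordance(reads) < TARGET_CONCORDANCE:
        problems.append(f"concordance {concordance(reads):.1%} is below {TARGET_CONCORDANCE:.0%}")
    # Washout: the two reads of a case must be at least 14 days apart.
    short = [r.case_id for r in reads
             if abs((r.digital_read_on - r.glass_read_on).days) < MIN_WASHOUT_DAYS]
    if short:
        problems.append(f"washout under {MIN_WASHOUT_DAYS} days for {len(short)} case(s)")
    return problems
```

For example, 60 cases with 58 concordant pairs (about 96.7%) read 18 days apart pass all checks.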

Guidelines from the ATA establish that telepathology systems, including non-WSI platforms, should be validated for clinical use.2 Published validations of non-WSI platforms (such as robotic or multimodality telepathology) have followed the structure of the CAP guidelines for validating WSI.14,15

Ensuring that all relevant responsibilities for the use of telepathology are met (clinical, facility, technical, training, documentation/archiving, quality management, and operations) is another aspect of validation and implementation.2 Clinical responsibilities include an agreement between the sending (referring) and receiving (consulting) parties on the information to accompany the digital material.2 Per the ATA clinical guidelines, this includes identification information, provision of all relevant clinical data to the consulting pathologist, a means to retrieve any needed or relevant diagnostic material, and the referrer's responsibility for ensuring that the correct images and metadata were sent.2 Involved parties should be trained to manage the materials being transmitted.2
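One way a laboratory might enforce the agreed-upon contents of a consultation before transmission is a simple completeness check. The sketch below is hypothetical: the `ConsultRequest` fields and `missing_items` helper are illustrative names mapped loosely onto the ATA items listed above, not part of any ATA specification or vendor system.

```python
from dataclasses import dataclass, field


@dataclass
class ConsultRequest:
    """Hypothetical record of what accompanies a telepathology consultation."""
    patient_id: str
    accession_number: str
    clinical_history: str
    image_ids: list[str] = field(default_factory=list)
    material_retrievable: bool = False      # glass slides/blocks can be requested if needed
    referrer_verified_images: bool = False  # referrer attests images and metadata are correct


def missing_items(req: ConsultRequest) -> list[str]:
    """List the ATA-style clinical items that the request does not yet satisfy."""
    missing = []
    if not req.patient_id or not req.accession_number:
        missing.append("identification")
    if not req.clinical_history.strip():
        missing.append("clinical data")
    if not req.image_ids:
        missing.append("images")
    if not req.material_retrievable:
        missing.append("retrievable diagnostic material")
    if not req.referrer_verified_images:
        missing.append("referrer verification of images/metadata")
    return missing
```

A request would be sent only when `missing_items` returns an empty list.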

Facility responsibilities include maintaining the standard of care defined by the facility and regulatory agencies.2 The maintenance of accreditation, adherence to licensure requirements, and proper management of privileges to practice telepathology are also important.2 Technical responsibilities include ensuring a proper validation that meets the standard of care and covers the intended use cases.2,11-13

All processes, training, and competencies should be followed and documented according to standard facility operating procedures.2 The ATA recommends that telepathology diagnostic consultations result in a formal report, that logs of telepathology interactions or disclaimer statements be maintained, and that an appropriate retention policy be in place.2 The CAP recommends that digital images used for primary diagnosis be kept for 10 years if the original glass slides are not available.16 Once implemented, telepathology and its reports must be incorporated into the pathology and laboratory medicine department's quality management plan, covering both the technical performance of the telepathology system and the diagnostic performance of the pathologists using it.2 Operations responsibilities include ensuring that the telepathology system is maintained according to vendor recommendations and regulatory standards. Appropriate provisions for space and associated needs should be developed with the facility's information technology team to ensure security, privacy, and regulatory compliance.2
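The 10-year retention recommendation above can be encoded as a date rule in an archive system. This is a minimal sketch with a hypothetical function name; it handles only that one rule and returns `None` when it does not apply, leaving other cases to the facility's own retention policy. Rolling a February 29 sign-out forward to March 1 is a conservative assumption so the full period is kept.

```python
from datetime import date
from typing import Optional

# Retention period for digital images used for primary diagnosis when the
# original glass slides are not available (recommendation quoted above).
RETENTION_YEARS_WITHOUT_GLASS = 10


def earliest_purge_date(signed_out: date, used_for_primary_dx: bool,
                        glass_available: bool) -> Optional[date]:
    """Earliest date the images may be deleted under the 10-year rule.

    Returns None when the rule does not apply; facility policy then governs.
    """
    rule_applies = used_for_primary_dx and not glass_available
    if not rule_applies:
        return None
    target_year = signed_out.year + RETENTION_YEARS_WITHOUT_GLASS
    try:
        return signed_out.replace(year=target_year)
    except ValueError:
        # Feb 29 has no counterpart in a non-leap year; roll forward to Mar 1
        # so that the full retention period is kept.
        return date(target_year, 3, 1)
```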

Applications and Uses

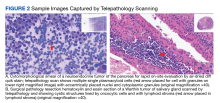

Telecytology. Rapid real-time telecytology has been documented to be useful in rapid on-site evaluation (ROSE) of the adequacy of fine needle aspirations (FNA).17-21 Nevertheless, ROSE is time consuming, affects productivity in cytology laboratories, and can be cost prohibitive given limited current Medicare reimbursement.17,22,23 Estimates of the time to provide ROSE for 1 procedure without telecytology range from 48.7 to 56.2 minutes.17,23 Telecytology significantly reduces ROSE time, without loss of quality, to about 12 minutes per procedure, of which the cytopathologist spent an average of only 7.5 minutes on the ROSE diagnosis.17-21 Telecytology also allows ROSE to be provided at distant and remote locations to improve patient care.17-21 Multiple vendors provide real-time telecytology services. Innovations such as smartphone adapters, network cameras with their own IP addresses, and high-speed dedicated connections to viewing platforms on high-sensitivity monitors can facilitate ROSE and improve patient management.24,25 Successful and accurate use of telecytology for ROSE has been described; however, there are currently no FDA-approved telepathology ROSE platforms.17-19,21-25
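The time savings quoted above can be checked with simple arithmetic. The sketch below uses only the figures in the text; the function and constant names are illustrative.

```python
def percent_reduction(before_min: float, after_min: float) -> float:
    """Percentage of time saved when a task drops from before_min to after_min."""
    return 100.0 * (before_min - after_min) / before_min


# Figures quoted in the text above, in minutes per ROSE procedure.
WITHOUT_TELECYTOLOGY = (48.7, 56.2)
WITH_TELECYTOLOGY = 12.0
CYTOPATHOLOGIST_MINUTES = 7.5

for baseline in WITHOUT_TELECYTOLOGY:
    print(f"{baseline} -> {WITH_TELECYTOLOGY} min: "
          f"{percent_reduction(baseline, WITH_TELECYTOLOGY):.1f}% less time")
share = 100.0 * CYTOPATHOLOGIST_MINUTES / WITH_TELECYTOLOGY
print(f"cytopathologist share of telecytology ROSE time: {share:.1f}%")
```

This prints reductions of 75.4% and 78.6%, and a cytopathologist share of 62.5% of the 12 minutes. Because the baseline and telecytology figures come from several cited reports, these percentages are approximate.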

To date, the FDA has not approved any whole slide scanner for telecytology because the submitted scanners lack z-stacking capability.7,21 Not all whole slide scanners offer z-stacking, and even in those that do, scanning an entire slide with adequate z-stacking takes too long to be clinically acceptable in many ROSE situations.21 WSI has also been used to develop international consensus on cytologic samples.26 Published recommendations for validating these other modalities before use follow the spirit of the CAP guidelines for validating WSI for diagnostic purposes (multiple cases with high concordance rates) but vary in the exact number of slides and the acceptable concordance rate.21,27 For ROSE with a robotic microscope and no on-site cytology personnel, documented standardized training of nonpathology staff members, such as the radiologist or other physician performing the FNA, may be needed to enable ROSE telecytology and ensure regulatory compliance.2,21 Beyond ROSE, published validations of telecytology for primary diagnosis and QA indicate a diagnostic role for telecytology in laboratories that have properly validated and implemented it as a laboratory-developed test.28-30

Frozen section. Telepathology has significant potential to improve access to frozen section consultation.5,31-33 Benefits include providing frozen section consultation at remote or off-site locations, increasing access to subspecialty consultation, improving workflow by eliminating the need to travel off-site for frozen section cases, saving costs in staff work time, and providing educational opportunities for pathology trainees.5,31-33 In our experience, WSI with real-time viewing of frozen sections allows assessment of transplant tissues, an evaluation that generally occurs at night. Discrepancies between WSI-based frozen section telepathology diagnoses and final diagnoses may occur; those specific to WSI can result from slide or image quality, internet connectivity, and lack of training in using the telepathology system.32 Other discrepancies between frozen section and final diagnoses also occur with conventional review of glass slides by light microscopy.34 Appropriate validation, training, implementation, and quality control can help laboratories reap the benefits of telepathology while mitigating its risks.2 In a large study comparing frozen section evaluation by telepathology with light microscopy, sensitivity and specificity were comparable, with a trend toward greater sensitivity by telepathology (sensitivity 0.92 and specificity 0.99 for telepathology vs 0.90 and 0.99, respectively, for light microscopy alone).33
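For readers less familiar with the metrics above, sensitivity and specificity come from a 2×2 table comparing each frozen section call with a reference standard. The sketch assumes the final diagnosis serves as that standard and that "positive" means, for example, malignant; the counts are hypothetical values chosen to reproduce round numbers and are not data from the cited study.

```python
def sensitivity_specificity(tp: int, fn: int, tn: int, fp: int) -> tuple[float, float]:
    """Return (sensitivity, specificity) for a 2x2 table.

    Sensitivity = TP / (TP + FN): share of truly positive cases called positive.
    Specificity = TN / (TN + FP): share of truly negative cases called negative.
    """
    return tp / (tp + fn), tn / (tn + fp)


# Hypothetical counts chosen only to give round numbers; not study data.
sens, spec = sensitivity_specificity(tp=92, fn=8, tn=990, fp=10)
print(f"sensitivity {sens:.2f}, specificity {spec:.2f}")
```

This prints `sensitivity 0.92, specificity 0.99`. Note that a specificity of 0.99 still allows false positives when the negative group is large.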

Other applications. Evidence of efficacy in surgical pathology diagnosis led to FDA approval of the Philips IntelliSite Digital Pathology Solution in 2017 and the Leica Aperio AT2 DX in 2020.6-8 WSI in surgical pathology has been successfully validated or used in clinical practice in several pathology laboratory settings, with documented benefits for primary and secondary diagnoses, QA, research, and education.6-8,35-45 Benefits of telepathology include improved ergonomics, access to real-time pathologic services in remote areas or when no pathologist is on site, and access to expert second opinions. Telepathology may also reduce the risk of slide loss during transport, shorten turnaround time, lower operating costs through workflow efficiencies, improve load balancing and virtual collaboration, and allow digital storage of slides that may be irreplaceable.3-8,35-45 Telepathology has also been shown to be useful in education, improving access to learning materials and increasing the availability of quality instructional materials at lower cost.45 Easier collaboration with remote experts and access to slide material for other pathologists improve QA capabilities.3-8,35-45 The availability of virtual slides is expected to promote further research in telepathology and pathology by increasing the amount of virtual material available to researchers.1,5,46

Telehematology. Published validations have shown that telepathology is effective for hematopathology specimens such as the peripheral blood smear. Telehematology has also demonstrated potential as a laboratory-developed test after proper validation and implementation.37,47-49

Telemicrobiology and Computer-Assisted Pathologic Diagnosis. Telemicrobiology has also been successfully used for clinical, educational, and QA purposes.50 The slide digitization that telepathology involves enables further innovation in machine learning for computer-assisted pathologic diagnosis (CAPD), which is already used clinically for cervical Pap smears.20 An artificial intelligence (AI)–based algorithm analyzes the slides to identify cells of interest, which are presented to the cytopathologist for confirmation.20 However, expanding CAPD to a variety of specimen types and diagnostic situations, and enabling it to safely and effectively render accurate automated diagnoses, requires additional development.20,51,52 A key requirement for developing AI through machine learning is a corpus of data.51,52 Public, open-source data sources have been limited in size, while private proprietary sources have highly restricted and expensive access; to address this, there is a current effort to build the world's largest public open-source digital pathology corpus at Temple University Hospital, which may help enable future innovations.52
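The human-in-the-loop workflow described above (the algorithm finds cells of interest, the cytopathologist confirms) can be sketched as a ranking step. Everything here is hypothetical: the `CellCandidate` schema, the score semantics, and the choice of a top-k cutoff are assumptions for illustration, not a description of any cleared product.

```python
import heapq
from dataclasses import dataclass


@dataclass
class CellCandidate:
    """A detected object on a digitized slide (hypothetical schema)."""
    tile_id: str
    x: int
    y: int
    score: float  # hypothetical model output; higher means more suspicious


def select_for_review(candidates: list[CellCandidate], k: int) -> list[CellCandidate]:
    """Return the k highest-scoring candidates, most suspicious first.

    The algorithm only ranks; the cytopathologist confirms or rejects each one.
    """
    return heapq.nlargest(k, candidates, key=lambda c: c.score)
```

`heapq.nlargest` avoids sorting every detection on a slide when only a short review gallery is needed.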

Long-Term Trends/Applications

The COVID-19 pandemic has been unprecedented not only in its widespread morbidity and mortality, but also in the significant socioeconomic, health, lifestyle, societal, and workplace changes it has brought.53-57 Specifically, the pandemic has introduced not only a need for social distancing and staff quarantines to prevent the spread of infection, but also a reduction in the workforce due to the stresses of COVID-19, a phenomenon known as the Great Resignation.55 Before the pandemic, the number of pathologists in the US workforce was already trending downward.9,10,58,59 From 2007 to 2017, the number of active pathologists in the US declined by 17.5% despite a growing national population, resulting in both an absolute decrease in the number of pathologists and an increase in the population served per pathologist.59 This downward trend has continued since 2017; given the ongoing loss of active pathologists from the workforce and the declining number of new pathologists in training, it shows no signs of reversing even as the impact of the COVID-19 pandemic has begun to wane.9,10,58-60
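The effect of the reported 17.5% decline on the population served per pathologist can be made explicit. Let $N$ be the number of active pathologists and $P$ the population, with subscripts 0 and 1 for 2007 and 2017, and let $g \ge 0$ be the fractional population growth over the decade (a symbol introduced here for illustration, not a reported figure):

$$
\begin{aligned}
N_1 &= (1 - 0.175)\,N_0 = 0.825\,N_0, \qquad P_1 = (1 + g)\,P_0,\\
\frac{P_1/N_1}{P_0/N_0} &= \frac{1 + g}{0.825} \;\ge\; \frac{1}{0.825} \approx 1.21.
\end{aligned}
$$

Even with no population growth, the population served per pathologist would rise by about 21%; any population growth makes the increase larger.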

The advantages of telepathology in enabling social distancing and reducing travel to remote sites are well known.3-7,17 Given these advantages, some US medical centers successfully validated and implemented telepathology early in the COVID-19 pandemic to ease workflow and ensure continued operations.56,57 Telepathology also helps balance workload and sustain pathology operations despite workforce reductions, because cases no longer need to be signed out on site with glass slides and can instead be signed out at a remote laboratory. Although the impact of the COVID-19 pandemic on operations is decreasing, the capabilities for social distancing and reducing travel remain important both for improving operations and for ensuring resiliency in response to similar events.3-7,17,60

Considering the long-term trends, the lessons of the COVID-19 pandemic, and the potential for future pandemics or other disasters, validating and implementing telepathology remains a reasonable choice for pathology practices seeking to improve. A variety of practices can benefit from telepathology: not only those serving the general population, but also US Department of Veterans Affairs medical centers (VAMCs) and the US Department of Defense Military Health System, which care for veterans and members of the armed forces and where telepathology has been reported to be reliable or successfully implemented.61-63 Although the veteran population differs from the general population in several characteristics, such as severity of disease, coexisting morbidities, and medical history, telepathology's usefulness extends across different pathology practice settings given proper validation and implementation.35-43,61-66

Limitations of Telepathology

Despite its immense promise, telepathology in its current state has limitations.6,35 These include initial capital costs, additional training requirements, the additional time needed to scan slides, technical challenges (eg, laboratory information system integration, color calibration, display artifacts, the potential for the scanner to omit small particles, and dependence on information technology), potentially slower evaluation per slide compared with optical microscopes, limitations of slide imaging (eg, limited z-stacking or lack of polarization in digital pathology), and occupational concerns about eye strain from increased computer monitor use (ie, computer vision syndrome).6,35 In addition, few scanners have FDA approval for WSI.6-8

Improvements in telepathology technology, including faster slide scanning and increasing digital storage capacity for large WSI files, have made these limitations surmountable. As a result, an increasing number of laboratories have adopted telepathology as its advantages have begun to outweigh its limitations.2-5 Additionally, proper validation before implementation can help laboratories identify their unique challenges, troubleshoot, and resolve limitations before clinical use.11-13 Continuing QA during use is important to ensure that telepathology performs as expected for clinical purposes despite its limitations.2

Conclusions

Telepathology is a promising technology that may improve pathology practice once properly validated and implemented.1-8 Although there are barriers to validation and implementation, particularly capital costs and training, there are several potential benefits, including increased productivity, cost savings, improved workflow, enhanced access to pathologic consultation, and adaptability of the pathology laboratory in an era of a shrinking workforce and social distancing due to the COVID-19 pandemic.1-8,55,56 This potential applies across the wide spectrum of telepathology uses, from frozen section and telecytology (including ROSE) to primary and second opinion diagnoses.1-8,17-33 The benefits also extend to QA, education, and research, because diagnoses can be easily rereviewed through subspecialty or second opinion consultation and digital slides can be produced for educational and research purposes.3-8,35-45 Settings that treat the general population and those focused on the care of veterans or members of the armed forces have reported similar reliability or successful implementation.35-44,61-63 All in all, telepathology represents an innovation that may transform the future practice of pathology.

1. Weinstein RS. Prospects for telepathology. Hum Pathol. 1986;17(5):433-434. doi:10.1016/s0046-8177(86)80028-4

2. Pantanowitz L, Dickinson K, Evans AJ, et al. American Telemedicine Association clinical guidelines for telepathology. J Pathol Inform. 2014;5(1):39. Published 2014 Oct 21. doi:10.4103/2153-3539.143329

3. Farahani N, Pantanowitz L. Overview of telepathology. Surg Pathol Clin. 2015;8(2):223-231. doi:10.1016/j.path.2015.02.018

4. Petersen JM, Jhala D. Telepathology: a transforming practice for the efficient, safe, and best patient care at the regional Veteran Affairs medical center. Am J Clin Pathol. 2022;158(suppl 1):S97-S98. doi:10.1093/ajcp/aqac126.205

5. Bashshur RL, Krupinski EA, Weinstein RS, Dunn MR, Bashshur N. The empirical foundations of telepathology: evidence of feasibility and intermediate effects. Telemed J E Health. 2017;23(3):155-191. doi:10.1089/tmj.2016.0278

6. Jahn SW, Plass M, Moinfar F. Digital pathology: advantages, limitations and emerging perspectives. J Clin Med. 2020;9(11):3697. Published 2020 Nov 18. doi:10.3390/jcm9113697

7. Evans AJ, Bauer TW, Bui MM, et al. US Food and Drug Administration approval of whole slide imaging for primary diagnosis: a key milestone is reached and new questions are raised. Arch Pathol Lab Med. 2018;142(11):1383-1387. doi:10.5858/arpa.2017-0496-CP

8. Patel A, Balis UGJ, Cheng J, et al. Contemporary whole slide imaging devices and their applications within the modern pathology department: a selected hardware review. J Pathol Inform. 2021;12:50. Published 2021 Dec 9. doi:10.4103/jpi.jpi_66_21

9. Association of American Medical Colleges. 2017 State Physician Workforce Data Book. November 2017. Accessed April 14, 2023. https://store.aamc.org/downloadable/download/sample/sample_id/30

10. Robboy SJ, Gross D, Park JY, et al. Reevaluation of the US pathologist workforce size. JAMA Netw Open. 2020;3(7):e2010648. Published 2020 Jul 1. doi:10.1001/jamanetworkopen.2020.10648

11. Pantanowitz L, Sinard JH, Henricks WH, et al. Validating whole slide imaging for diagnostic purposes in pathology: guideline from the College of American Pathologists Pathology and Laboratory Quality Center. Arch Pathol Lab Med. 2013;137(12):1710-1722. doi:10.5858/arpa.2013-0093-CP

12. Evans AJ, Brown RW, Bui MM, et al. Validating whole slide imaging systems for diagnostic purposes in pathology. Arch Pathol Lab Med. 2021;146(4):440-450. doi:10.5858/arpa.2020-0723-CP

13. Evans AJ, Lacchetti C, Reid K, Thomas NE. Validating whole slide imaging for diagnostic purposes in pathology: guideline update. College of American Pathologists. May 2021. Accessed April 13, 2023. https://documents.cap.org/documents/wsi-methodology.pdf

14. Chandraratnam E, Santos LD, Chou S, et al. Parathyroid frozen section interpretation via desktop telepathology systems: a validation study. J Pathol Inform. 2018;9:41. Published 2018 Dec 3. doi:10.4103/jpi.jpi_57_18

15. Thrall MJ, Rivera AL, Takei H, Powell SZ. Validation of a novel robotic telepathology platform for neuropathology intraoperative touch preparations. J Pathol Inform. 2014;5(1):21. Published 2014 Jul 28. doi:10.4103/2153-3539.137642

16. Balis UGJ, Williams CL, Cheng J, et al. Whole-slide imaging: thinking twice before hitting the delete key. AJSP: Reviews & Reports. 2018;23(6):249-250. doi:10.1097/PCR.0000000000000283

17. Kim B, Chhieng DC, Crowe DR, et al. Dynamic telecytopathology of on site rapid cytology diagnoses for pancreatic carcinoma. Cytojournal. 2006;3:27. Published 2006 Dec 11. doi:10.1186/1742-6413-3-27

18. Perez D, Stemmer MN, Khurana KK. Utilization of dynamic telecytopathology for rapid onsite evaluation of touch imprint cytology of needle core biopsy: diagnostic accuracy and pitfalls. Telemed J E Health. 2021;27(5):525-531. doi:10.1089/tmj.2020.0117

19. McCarthy EE, McMahon RQ, Das K, Stewart J 3rd. Internal validation testing for new technologies: bringing telecytopathology into the mainstream. Diagn Cytopathol. 2015;43(1):3-7. doi:10.1002/dc.23167

20. Marletta S, Treanor D, Eccher A, Pantanowitz L. Whole-slide imaging in cytopathology: state of the art and future directions. Diagn Histopathol (Oxf). 2021;27(11):425-430. doi:10.1016/j.mpdhp.2021.08.001

21. Lin O. Telecytology for rapid on-site evaluation: current status. J Am Soc Cytopathol. 2018;7(1):1-6. doi:10.1016/j.jasc.2017.10.002

22. Eloubeidi MA, Tamhane A, Jhala N, et al. Agreement between rapid onsite and final cytologic interpretations of EUS-guided FNA specimens: implications for the endosonographer and patient management. Am J Gastroenterol. 2006;101(12):2841-2847. doi:10.1111/j.1572-0241.2006.00852.x

23. Layfield LJ, Bentz JS, Gopez EV. Immediate on-site interpretation of fine-needle aspiration smears: a cost and compensation analysis. Cancer. 2001;93(5):319-322. doi:10.1002/cncr.9046

24. Fontelo P, Liu F, Yagi Y. Evaluation of a smartphone for telepathology: lessons learned. J Pathol Inform. 2015;6:35. Published 2015 Jun 23. doi:10.4103/2153-3539.158912

25. Lin O. Telecytology for rapid on-site evaluation: current status. J Am Soc Cytopathol. 2018;7(1):1-6. doi:10.1016/j.jasc.2017.10.002

26. Johnson DN, Onenerk M, Krane JF, et al. Cytologic grading of primary malignant salivary gland tumors: A blinded review by an international panel. Cancer Cytopathol. 2020;128(6):392-402. doi:10.1002/cncy.22271

27. Trabzonlu L, Chatt G, McIntire PJ, et al. Telecytology validation: is there a recipe for everybody? J Am Soc Cytopathol. 2022;11(4):218-225. doi:10.1016/j.jasc.2022.03.001

28. Canberk S, Behzatoglu K, Caliskan CK, et al. The role of telecytology in the primary diagnosis of thyroid fine-needle aspiration specimens. Acta Cytol. 2020;64(4):323-331. doi:10.1159/000503914

29. Archondakis S, Roma M, Kaladelfou E. Implementation of pre-captured videos for remote diagnosis of cervical cytology specimens. Cytopathology. 2021;32(3):338-343. doi:10.1111/cyt.12948

30. Lee ES, Kim IS, Choi JS, et al. Accuracy and reproducibility of telecytology diagnosis of cervical smears. A tool for quality assurance programs. Am J Clin Pathol. 2003;119(3):356-360. doi:10.1309/7ytvag4xnr48t75h

31. Dietz RL, Hartman DJ, Pantanowitz L. Systematic review of the use of telepathology during intraoperative consultation. Am J Clin Pathol. 2020;153(2):198-209. doi:10.1093/ajcp/aqz155

32. Bauer TW, Slaw RJ, McKenney JK, Patil DT. Validation of whole slide imaging for frozen section diagnosis in surgical pathology. J Pathol Inform. 2015;6:49. Published 2015 Aug 31. doi:10.4103/2153-3539.163988

33. Vosoughi A, Smith PT, Zeitouni JA, et al. Frozen section evaluation via dynamic real-time nonrobotic telepathology system in a university cancer center by resident/faculty cooperation team. Hum Pathol. 2018;78:144-150. doi:10.1016/j.humpath.2018.04.012

34. Mahe E, Ara S, Bishara M, et al. Intraoperative pathology consultation: error, cause and impact. Can J Surg. 2013;56(3):E13-E18. doi:10.1503/cjs.011112

35. Farahani N, Parwani AV, Pantanowitz L. Whole slide imaging in pathology: advantages, limitations, and emerging perspectives. Pathol Lab Med Int. 2015;7:23-33. doi:10.2147/PLMI.S59826

36. Thorstenson S, Molin J, Lundström C. Implementation of large-scale routine diagnostics using whole slide imaging in Sweden: digital pathology experiences 2006-2013. J Pathol Inform. 2014;5(1):14. Published 2014 Mar 28. doi:10.4103/2153-3539.129452

37. Pantanowitz L, Wiley CA, Demetris A, et al. Experience with multimodality telepathology at the University of Pittsburgh Medical Center. J Pathol Inform. 2012;3:45. doi:10.4103/2153-3539.104907

38. Al Habeeb A, Evans A, Ghazarian D. Virtual microscopy using whole-slide imaging as an enabler for teledermatopathology: a paired consultant validation study. J Pathol Inform. 2012;3:2. doi:10.4103/2153-3539.93399

39. Al-Janabi S, Huisman A, Vink A, et al. Whole slide images for primary diagnostics in dermatopathology: a feasibility study. J Clin Pathol. 2012;65(2):152-158. doi:10.1136/jclinpath-2011-200277

40. Nielsen PS, Lindebjerg J, Rasmussen J, Starklint H, Waldstrøm M, Nielsen B. Virtual microscopy: an evaluation of its validity and diagnostic performance in routine histologic diagnosis of skin tumors. Hum Pathol. 2010;41(12):1770-1776. doi:10.1016/j.humpath.2010.05.015

41. Leinweber B, Massone C, Kodama K, et al. Teledermatopathology: a controlled study about diagnostic validity and technical requirements for digital transmission. Am J Dermatopathol. 2006;28(5):413-416. doi:10.1097/01.dad.0000211523.95552.86

42. Koch LH, Lampros JN, Delong LK, Chen SC, Woosley JT, Hood AF. Randomized comparison of virtual microscopy and traditional glass microscopy in diagnostic accuracy among dermatology and pathology residents. Hum Pathol. 2009;40(5):662-667. doi:10.1016/j.humpath.2008.10.009

43. Farris AB, Cohen C, Rogers TE, Smith GH. Whole slide imaging for analytical anatomic pathology and telepathology: practical applications today, promises, and perils. Arch Pathol Lab Med. 2017;141(4):542-550. doi:10.5858/arpa.2016-0265-SA

44. Chong T, Palma-Diaz MF, Fisher C, et al. The California Telepathology Service: UCLA’s experience in deploying a regional digital pathology subspecialty consultation network. J Pathol Inform. 2019;10:31. Published 2019 Sep 27. doi:10.4103/jpi.jpi_22_19

45. Meyer J, Paré G. Telepathology impacts and implementation challenges: a scoping review. Arch Pathol Lab Med. 2015;139(12):1550-1557. doi:10.5858/arpa.2014-0606-RA

46. Weinstein RS, Descour MR, Liang C, et al. Telepathology overview: from concept to implementation. Hum Pathol. 2001;32(12):1283-1299. doi:10.1053/hupa.2001.29643

47. Riley RS, Ben-Ezra JM, Massey D, Cousar J. The virtual blood film. Clin Lab Med. 2002;22(1):317-345. doi:10.1016/s0272-2712(03)00077-5

48. Garcia CA, Hanna M, Contis LC, Pantanowitz L, Hyman R. Sharing Cellavision blood smear images with clinicians via the electronic medical record. Blood. 2017;130(suppl 1):5586. doi:10.1182/blood.V130.Suppl_1.5586.5586

49. Goswami R, Pi D, Pal J, Cheng K, Hudoba De Badyn M. Performance evaluation of a dynamic telepathology system (Panoptiq) in the morphologic assessment of peripheral blood film abnormalities. Int J Lab Hematol. 2015;37(3):365-371. doi:10.1111/ijlh.12294

50. Rhoads DD, Mathison BA, Bishop HS, da Silva AJ, Pantanowitz L. Review of telemicrobiology. Arch Pathol Lab Med. 2016;140(4):362-370. doi:10.5858/arpa.2015-0116-RA

51. Nam S, Chong Y, Jung CK, et al. Introduction to digital pathology and computer-aided pathology. J Pathol Transl Med. 2020;54(2):125-134. doi:10.4132/jptm.2019.12.31

52. Houser D, Shadhin G, Anstotz R, et al. The Temple University Hospital Digital Pathology Corpus. IEEE Signal Process Med Biol Symp. 2018:1-7. doi:10.1109/SPMB.2018.8615619

53. Petersen J, Dalal S, Jhala D. Criticality of in-house preparation of viral transport medium in times of shortage during COVID-19 pandemic. Lab Med. 2021;52(2):e39-e45. doi:10.1093/labmed/lmaa099

54. Ranney ML, Griffeth V, Jha AK. Critical supply shortages—the need for ventilators and personal protective equipment during the Covid-19 pandemic. N Engl J Med. 2020;382(18):e41. doi:10.1056/NEJMp2006141

55. Ksinan Jiskrova G. Impact of COVID-19 pandemic on the workforce: from psychological distress to the Great Resignation. J Epidemiol Community Health. 2022;76(6):525-526. doi:10.1136/jech-2022-218826

56. Henriksen J, Kolognizak T, Houghton T, et al. Rapid validation of telepathology by an academic neuropathology practice during the COVID-19 pandemic. Arch Pathol Lab Med. 2020;144(11):1311-1320. doi:10.5858/arpa.2020-0372-SA

57. Ardon O, Reuter VE, Hameed M, et al. Digital pathology operations at an NYC tertiary cancer center during the first 4 months of COVID-19 pandemic response. Acad Pathol. 2021;8:23742895211010276. Published 2021 Apr 28. doi:10.1177/23742895211010276

58. Jajosky RP, Jajosky AN, Kleven DT, Singh G. Fewer seniors from United States allopathic medical schools are filling pathology residency positions in the Main Residency Match, 2008-2017. Hum Pathol. 2018;73:26-32. doi:10.1016/j.humpath.2017.11.014

59. Metter DM, Colgan TJ, Leung ST, Timmons CF, Park JY. Trends in the US and Canadian pathologist workforces from 2007 to 2017. JAMA Netw Open. 2019;2(5):e194337. Published 2019 May 3. doi:10.1001/jamanetworkopen.2019.4337

60. Murray CJL. COVID-19 will continue but the end of the pandemic is near. Lancet. 2022;399(10323):417-419. doi:10.1016/S0140-6736(22)00100-3

61. Ghosh A, Brown GT, Fontelo P. Telepathology at the Armed Forces Institute of Pathology: a retrospective review of consultations from 1996 to 1997. Arch Pathol Lab Med. 2018;142(2):248-252. doi:10.5858/arpa.2017-0055-OA

62. Dunn BE, Choi H, Almagro UA, Recla DL, Davis CW. Telepathology networking in VISN-12 of the Veterans Health Administration. Telemed J E Health. 2000;6(3):349-354. doi:10.1089/153056200750040200

63. Dunn BE, Almagro UA, Choi H, et al. Dynamic-robotic telepathology: Department of Veterans Affairs feasibility study. Hum Pathol. 1997;28(1):8-12. doi:10.1016/s0046-8177(97)90271-9

64. Agha Z, Lofgren RP, VanRuiswyk JV, Layde PM. Are patients at Veterans Affairs medical centers sicker? A comparative analysis of health status and medical resource use. Arch Intern Med. 2000;160(21):3252-3257. doi:10.1001/archinte.160.21.3252

65. Eibner C, Krull H, Brown KM, et al. Current and projected characteristics and unique health care needs of the patient population served by the Department of Veterans Affairs. Rand Health Q. 2016;5(4):13. Published 2016 May 9.

66. Morgan RO, Teal CR, Reddy SG, Ford ME, Ashton CM. Measurement in Veterans Affairs Health Services Research: veterans as a special population. Health Serv Res. 2005;40(5, pt 2):1573-1583. doi:10.1111/j.1475-6773.2005.00448


Advances in technology, including ubiquitous access to the internet and the capacity to transfer high-resolution representative images, have facilitated the adoption of telepathology by laboratories worldwide.1-5 Telepathology includes the use of telecommunication links that enable transmission of digital pathology images for primary diagnosis, quality assurance (QA), education, research, or second opinion diagnoses.3 This improvement has culminated in approvals by the US Food and Drug Administration (FDA) of whole slide imaging (WSI) systems for surgical pathology slides: specifically, the Philips IntelliSite Digital Pathology Solution in 2017 and the Leica Aperio AT2 DX in 2020.6-8 However, the approvals do not include telecytology due to lack of whole slide multiplanar scanning at different planes of focus or z-stacking capabilities.7

Long-term trends in pathology, specifically the slow reduction in the number of practicing pathologists available in the workforce compared with the total served population, along with the social distancing imperatives and disruptions brought about by the COVID-19 pandemic have made telepathology implementation pertinent to continue and improve pathology practice.8-10

Description and Definitions

The primary modes of telepathology (static image telepathology, robotic telepathology, video microscopy, WSI, and multimodality telepathology) have been defined by the American Telemedicine Association (ATA).2 WSI has been particularly suited for telepathology due to the ability to view digital slides in high resolution at various magnifications. These image files can also be viewed and shared with ease with other observers. Also, they take a shorter time to view compared with the use of a robotic microscope.3

Selection, Validation, and Implementation

WSI platforms vary in their characteristics and have several parameters, including but not limited to batch scanning vs continuous or random-access processing, throughput volume capacities, scan speed, cost, manual vs automatic loading of slides, image quality, slide capacity, flexibility for different slide sizes/features, telepathology capabilities once slide scanned, z-stacking, and regulatory approval status.8 Selection of the WSI device is dependent on need and cost considerations. For example, use for frozen section requires faster scanning speed and does not generally require a high throughput scanner.

Validation of telepathology by the testing site demonstrates that the new system performs as expected for its intended clinical use before being put into service and that the digital slides produced are acceptable for clinical diagnostic interpretation.11 The College of American Pathologists (CAP) established WSI validation guidelines are part of the published laboratory standard of care.11-13 An appropriate validation enables the benefits of telepathology while mitigating the risks.

There are 3 major CAP recommendations for validation. First, ≥ 60 cases should be included for each use case being validated with 20 additional cases for relevant ancillary applications not included in the 60 cases. Second, diagnostic concordance (ideally ≥ 95%) should be established between digital and glass slides for the same observer. Third, there should be a 2-week washout period between the viewing of digital and glass slides (Table 2).12,13

Guidelines from the ATA establish that telepathology systems should be validated for clinical use, including non-WSI platforms.2 Published validations of other non-WSI platforms (such as by robotic or multimodality telepathology) have followed the structure proposed in the guidelines by CAP for validating WSI.14,15

Ensuring that all relevant responsibilities (clinical, facility, technical, training, documentation/archiving, quality management, and operations related) for the use of telepathology are met is another aspect of validation and implementation.2 Clinical responsibilities include an agreement between the sending (referring) and receiving (consulting) parties on the information to accompany the digital material.2 From ATA clinical guidelines, this includes identification information, provision to the consulting pathologist of all relevant clinical data, provision to retrieve for access any needed and/or relevant diagnostic material, and responsibility by referrer that the correct image/metadata was sent.2 Involved parties should be trained to manage the materials being transmitted.2

Facility responsibilities include maintaining the standard of care defined by the facility and regulatory agencies.2 The maintenance of accreditation, adherence to licensure requirements, and proper management of privileges to practice telepathology are also important.2 Technical responsibilities include ensuring a proper validation that meets the standard of care and covers use cases.2,11-13

All processes, training, and competencies should be followed and documented per standard facility operating procedures.2 ATA recommends that telepathology should result in a formal report for diagnostic consultations, maintain logs of telepathology interactions or disclaimer statements, and have an appropriate retention policy.2 The CAP recommends digital images used for primary diagnosis should be kept for 10 years if the original glass slides are not available.16 Once implemented, telepathology reports must be incorporated into the pathology and laboratory medicine department’s quality management plan for both the technical performance of the telepathology system and diagnostic performance of the pathologists using the system.2 Operations responsibilities include ensuring that the telepathology system is maintained according to vendor recommendations and regulatory standards. Appropriate provisions for space and associated needs should be developed in conjunction with the information technology team of the facility to ensure appropriate security, privacy, and regulatory compliance.2

Applications and Uses

Telecytology. Rapid real-time telecytology has been documented to be useful in rapid on-site evaluations (ROSE) of the adequacy of fine needle aspirations (FNA).17-21 Nevertheless, current Medicare reimbursement is limited given that ROSE is cost prohibitive, time consuming, and affects productivity in cytology laboratories.17,22,23 Estimates of the time to provide ROSE for 1 procedure without telecytology range from 48.7 to 56.2 minutes.17,23 The use of telecytology significantly reduces pathologist ROSE time without losing quality to about 12 minutes, of which only an average of 7.5 minutes was spent by the cytopathologist for the ROSE diagnosis.17-21 ROSE also can be used for distant and remote locations to improve patient care.17-21 Multiple vendors provide real-time telecytology service. Innovations using smartphone adapters, digital cameras that could work as their own IP addresses, and connection with high-speed dedicated connections with viewing platforms on high-sensitivity monitors can facilitate ROSE to improve patient management.24,25 The successful accurate use of ROSE has been described; however, there are currently no FDA-approved telepathology ROSE platforms.17-19,21-25

To date, the FDA has not approved any telecytology whole slide scanner due to a lack of z-stacking capability in submitted scanners.7,21 Not all whole slide scanners offer z-stacking, though even in those that do offer it, the time necessary to scan the entire slide with adequate z-stacking takes too long to be clinically acceptable for many situations involving ROSE.21 WSI has also been used to develop international consensus for cytologic samples.26 Published recommendations for the validation of these other modalities before usage follow the spirit of the CAP guidelines (as far as multiple cases with high concordance rates) for validation of WSI for diagnostic purposes but vary on the exact number of slides and acceptable concordance rate.21,27 For ROSE with a robotic microscope without any on-site cytology personnel, documented standardized training of nonpathology staff members, such as the radiologist or other physician performing the FNA procedure, may be needed to enable the performance of ROSE telecytology and ensure compliance with regulations.2,21 Besides ROSE, there are published validations for telecytology in primary diagnosis and QA, indicating a role for telecytology for diagnosis for laboratories that have properly validated and implemented the laboratory-developed test.28-30

Frozen section. Telepathology has significant potential to improve access to frozen section consultation.5,31-33 Benefits to improving access to frozen section include providing frozen section consultation at remote or off-site locations, increasing access to subspecialty consultation, improving workflow by eliminating the need to travel off-site to the frozen section case, cost savings in staff work time, and providing educational opportunities for pathology trainees.5,31-33 In our experience, WSI with real-time viewing of frozen section allows for the assessment of transplant tissues, which is an evaluation that generally occurs at night. Discrepancies from frozen section telepathology using WSI to the final diagnosis may occur and those specific to WSI could result from slide or image quality, internet connectivity, and lack of training in using the telepathology system.32 Other issues that may lead to discrepancies between the frozen section diagnosis and the final diagnosis may occur with the review of glass slides by light microscopy.34 Appropriate performance of validation, training, implementation, and quality control for telepathology can help in reaping the benefits while mitigating the risks.2 In a large study comparing frozen section evaluation by telepathology with light microscopy, the sensitivity and specificity of frozen section were comparable between telepathology and light microscopy with a trend toward greater sensitivity by telepathology (0.92 and 0.99 for telepathology vs 0.90 and 0.99 by light microscopy alone, sensitivity and specificity, respectively).33

Other applications. Evidence for efficacy in surgical pathology diagnosis led to FDA approval of the Philips IntelliSite Digital Pathology in 2017 and the Leica Aperio AT2 DX in 2020 WSI platforms.6-8 The use of WSI in surgical pathology has been successfully validated or used in clinical practice at several pathology laboratory settings with documented benefits in the literature for primary and secondary diagnoses, QA, research, and education.6-8,35-45 Benefits of telepathology include improved ergonomics and access to real-time pathologic services in remote areas or during on-site pathologist absence and expert second opinions. Telepathology also may reduce risk of slide loss during transport, shortened turnaround time, reduced costs of operation through workflow efficiencies, better load balancing, improve virtual collaboration, and digital storage of slides that may be irreplaceable.3-8,35-45 Telepathology also has been shown to be useful for education, improving access to learning materials and increasing quality instructional materials at a lower cost.45 The increased ease of collaboration with remote experts and access to slide material for other pathologists improves QA capabilities.3-8,35-45 The availability of virtual slides is expected to promote further research in telepathology and pathology due to the increased availability of virtual material to researchers.1,5,46

Telehematology. Published validations have shown effectiveness for hematopathology specimens, such as the peripheral smear. Telehematology also has demonstrated potential in a laboratory after proper validation and implementation as a laboratory-developed test.37,47-49

Telemicrobiology and Computer-Assisted Pathologic Diagnosis. Telemicrobiology also has been successfully used for clinical, educational, and QA purposes.50 The digitalization of slides involved with telepathology enables further innovation in machine learning for computer-assisted pathologic diagnosis (CAPD), which is already being used clinically for cervical Pap smears.20 An artificial intelligence (AI)–based algorithm analyzes the slides to identify cells of interest, which are presented to the cytopathologist for confirmation.20 However, the expansion of CAPD to include a variety of specimen types or diagnostic situations as well as safely and effectively take initiative in completing an accurate automated diagnosis requires additional development.20,51,52 One of the key factors for machine learning to develop AI is the provision of a corpus of data.51,52 Public, open-source data sources have been limited in size while private proprietary sources have highly restricted and expensive access; to address this, there is a current effort to build the world’s largest public open-source digital pathology corpus at Temple University Hospital, which may help enable innovations in the future.52

Long-Term Trends/Applications

The COVID-19 pandemic has been unprecedented not only in its widespread morbidity and mortality, but also in the significant socioeconomic, health, lifestyle, societal, and workplace changes it has caused.53-57 Specifically, the pandemic introduced not only a need for social distancing and staff quarantines to prevent the spread of infection, but also a reduction in the workforce due to the stresses of COVID-19 (a phenomenon often called the Great Resignation).55 Even before the pandemic, the number of pathologists in the US workforce was already trending downward.9-10,58,59 From 2007 to 2017, the number of active pathologists in the US declined by 17.5% despite a growing national population, resulting in both an absolute decrease in the number of pathologists and an increase in the population served per pathologist.59 This downtrend has continued since 2017. Given the ongoing loss of active pathologists from the workforce and the decreasing number of new pathologists being trained, the decline shows no signs of reversing, even as the impact of the COVID-19 pandemic has begun to wane.9,10,58-60
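A short worked calculation illustrates why a shrinking workforce raises the population served per pathologist even faster than the headcount falls. It is only an illustration built on the 17.5% figure above: P and N (population and number of active pathologists in 2007) and P′ and N′ (the same quantities in 2017) are symbols introduced here, not data from the cited sources. Because the text notes the population grew (P′ ≥ P), the 17.5% decline alone implies at least a 21% increase in the ratio.

```latex
R = \frac{P}{N}, \qquad R' = \frac{P'}{N'}, \qquad N' = (1 - 0.175)\,N = 0.825\,N

\frac{R'}{R} = \frac{P'}{P} \cdot \frac{N}{N'} = \frac{P'/P}{0.825} \;\geq\; \frac{1}{0.825} \approx 1.212 \quad (\text{since } P' \geq P)
```

In other words, with no population growth at all, each remaining pathologist would serve about 21% more people; actual population growth pushes the increase higher.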

The advantages of telepathology in enabling social distancing and reducing travel to remote sites are well established.3-7,17 Given these advantages, some US medical centers successfully validated and implemented telepathology early in the COVID-19 pandemic to ease workflow and ensure continued operations.56,57 Telepathology also helps balance workloads and maintain pathology operations despite workforce reductions, because cases no longer need to be signed out on site with glass slides and can instead be signed out at a remote location. Although the impact of the COVID-19 pandemic on operations is decreasing, the capacity for social distancing and reduced travel remains important both to improve operations and to ensure resilience in the event of similar future crises.3-7,17,60

Considering the long-term trends, the lessons of the COVID-19 pandemic, and the potential for future pandemics or other disasters, validating and implementing telepathology remains a reasonable choice for pathology practices seeking to improve their operations. Practices serving the general population, as well as US Department of Veterans Affairs medical centers (VAMCs) and the US Department of Defense Military Health System, which serve veteran and military populations, can benefit from telepathology; it has previously been reported to be reliable or successfully implemented in these settings.61-63 Although the veteran population differs from the general population in several characteristics, such as disease severity, comorbidities, and medical history, telepathology’s usefulness extends across different pathology practice settings when it is properly validated and implemented.35-43,61-66

Limitations of Telepathology

Despite its considerable promise, telepathology in its current state has limitations.6,35 These include initial capital costs, additional training requirements, the extra time needed to scan slides, technical challenges (eg, laboratory information system integration, color calibration, display artifacts, the potential for scanners to miss small tissue particles, and dependence on information technology), potentially slower evaluation per slide compared with optical microscopy, limitations of slide imaging (eg, limited z-stacking capability and the lack of polarization in digital pathology), and occupational concerns about eye strain from increased computer monitor use (ie, computer vision syndrome).6,35 In addition, few WSI scanners have received FDA approval.6-8

Ongoing technological improvements, including faster slide scanning and greater digital storage capacity for large WSI files, have made many of these limitations surmountable. As a result, an increasing number of laboratories have adopted telepathology as its advantages have begun to outweigh its limitations.2-5 Additionally, proper validation before implementing telepathology can help laboratories identify their unique challenges, troubleshoot them, and resolve limitations before clinical use.11-13 Ongoing QA after implementation is important to ensure that telepathology performs as expected for clinical purposes despite its limitations.2

Conclusions

Telepathology is a promising technology that may improve pathology practice once properly validated and implemented.1-8 Although there are barriers to validation and implementation, particularly capital costs and training, there are several potential benefits, including increased productivity, cost savings, improved workflow, enhanced access to pathology consultation, and greater adaptability of the pathology laboratory in an era of a shrinking workforce and social distancing due to the COVID-19 pandemic.1-8,55-56 This potential applies across the wide spectrum of telepathology uses, from frozen section and telecytology (including ROSE) to primary and second opinion diagnoses.1-8,17-33 The benefits also extend to QA, education, and research: diagnoses can be readily re-reviewed through specialty or second opinion consultation, and digital slides can be produced for educational and research purposes.3-8,35-45 Settings that treat the general population and those focused on the care of veterans or members of the armed forces have reported similar reliability or successful implementation.35-44,61-63 All in all, telepathology represents an innovation that may transform the future practice of pathology.

1. Weinstein RS. Prospects for telepathology. Hum Pathol. 1986;17(5):433-434. doi:10.1016/s0046-8177(86)80028-4

2. Pantanowitz L, Dickinson K, Evans AJ, et al. American Telemedicine Association clinical guidelines for telepathology. J Pathol Inform. 2014;5(1):39. Published 2014 Oct 21. doi:10.4103/2153-3539.143329

3. Farahani N, Pantanowitz L. Overview of telepathology. Surg Pathol Clin. 2015;8(2):223-231. doi:10.1016/j.path.2015.02.018

4. Petersen JM, Jhala D. Telepathology: a transforming practice for the efficient, safe, and best patient care at the regional Veteran Affairs medical center. Am J Clin Pathol. 2022;158(suppl 1):S97-S98. doi:10.1093/ajcp/aqac126.205

5. Bashshur RL, Krupinski EA, Weinstein RS, Dunn MR, Bashshur N. The empirical foundations of telepathology: evidence of feasibility and intermediate effects. Telemed J E Health. 2017;23(3):155-191. doi:10.1089/tmj.2016.0278

6. Jahn SW, Plass M, Moinfar F. Digital pathology: advantages, limitations and emerging perspectives. J Clin Med. 2020;9(11):3697. Published 2020 Nov 18. doi:10.3390/jcm9113697

7. Evans AJ, Bauer TW, Bui MM, et al. US Food and Drug Administration approval of whole slide imaging for primary diagnosis: a key milestone is reached and new questions are raised. Arch Pathol Lab Med. 2018;142(11):1383-1387. doi:10.5858/arpa.2017-0496-CP

8. Patel A, Balis UGJ, Cheng J, et al. Contemporary whole slide imaging devices and their applications within the modern pathology department: a selected hardware review. J Pathol Inform. 2021;12:50. Published 2021 Dec 9. doi:10.4103/jpi.jpi_66_21

9. Association of American Medical Colleges. 2017 State Physician Workforce Data Book. November 2017. Accessed April 14, 2023. https://store.aamc.org/downloadable/download/sample/sample_id/30

10. Robboy SJ, Gross D, Park JY, et al. Reevaluation of the US pathologist workforce size. JAMA Netw Open. 2020;3(7):e2010648. Published 2020 Jul 1. doi:10.1001/jamanetworkopen.2020.10648

11. Pantanowitz L, Sinard JH, Henricks WH, et al. Validating whole slide imaging for diagnostic purposes in pathology: guideline from the College of American Pathologists Pathology and Laboratory Quality Center. Arch Pathol Lab Med. 2013;137(12):1710-1722. doi:10.5858/arpa.2013-0093-CP

12. Evans AJ, Brown RW, Bui MM, et al. Validating whole slide imaging systems for diagnostic purposes in pathology. Arch Pathol Lab Med. 2021;146(4):440-450. doi:10.5858/arpa.2020-0723-CP

13. Evans AJ, Lacchetti C, Reid K, Thomas NE. Validating whole slide imaging for diagnostic purposes in pathology: guideline update. College of American Pathologists. May 2021. Accessed April 13, 2023. https://documents.cap.org/documents/wsi-methodology.pdf

14. Chandraratnam E, Santos LD, Chou S, et al. Parathyroid frozen section interpretation via desktop telepathology systems: a validation study. J Pathol Inform. 2018;9:41. Published 2018 Dec 3. doi:10.4103/jpi.jpi_57_18

15. Thrall MJ, Rivera AL, Takei H, Powell SZ. Validation of a novel robotic telepathology platform for neuropathology intraoperative touch preparations. J Pathol Inform. 2014;5(1):21. Published 2014 Jul 28. doi:10.4103/2153-3539.137642

16. Balis UGJ, Williams CL, Cheng J, et al. Whole-slide imaging: thinking twice before hitting the delete key. AJSP: Reviews & Reports. 2018;23(6):249-250. doi:10.1097/PCR.0000000000000283

17. Kim B, Chhieng DC, Crowe DR, et al. Dynamic telecytopathology of on site rapid cytology diagnoses for pancreatic carcinoma. Cytojournal. 2006;3:27. Published 2006 Dec 11. doi:10.1186/1742-6413-3-27

18. Perez D, Stemmer MN, Khurana KK. Utilization of dynamic telecytopathology for rapid onsite evaluation of touch imprint cytology of needle core biopsy: diagnostic accuracy and pitfalls. Telemed J E Health. 2021;27(5):525-531. doi:10.1089/tmj.2020.0117

19. McCarthy EE, McMahon RQ, Das K, Stewart J 3rd. Internal validation testing for new technologies: bringing telecytopathology into the mainstream. Diagn Cytopathol. 2015;43(1):3-7. doi:10.1002/dc.23167

20. Marletta S, Treanor D, Eccher A, Pantanowitz L. Whole-slide imaging in cytopathology: state of the art and future directions. Diagn Histopathol (Oxf). 2021;27(11):425-430. doi:10.1016/j.mpdhp.2021.08.001

21. Lin O. Telecytology for rapid on-site evaluation: current status. J Am Soc Cytopathol. 2018;7(1):1-6. doi:10.1016/j.jasc.2017.10.002

22. Eloubeidi MA, Tamhane A, Jhala N, et al. Agreement between rapid onsite and final cytologic interpretations of EUS-guided FNA specimens: implications for the endosonographer and patient management. Am J Gastroenterol. 2006;101(12):2841-2847. doi:10.1111/j.1572-0241.2006.00852.x

23. Layfield LJ, Bentz JS, Gopez EV. Immediate on-site interpretation of fine-needle aspiration smears: a cost and compensation analysis. Cancer. 2001;93(5):319-322. doi:10.1002/cncr.9046

24. Fontelo P, Liu F, Yagi Y. Evaluation of a smartphone for telepathology: lessons learned. J Pathol Inform. 2015;6:35. Published 2015 Jun 23. doi:10.4103/2153-3539.158912

25. Lin O. Telecytology for rapid on-site evaluation: current status. J Am Soc Cytopathol. 2018;7(1):1-6. doi:10.1016/j.jasc.2017.10.002

26. Johnson DN, Onenerk M, Krane JF, et al. Cytologic grading of primary malignant salivary gland tumors: A blinded review by an international panel. Cancer Cytopathol. 2020;128(6):392-402. doi:10.1002/cncy.22271

27. Trabzonlu L, Chatt G, McIntire PJ, et al. Telecytology validation: is there a recipe for everybody? J Am Soc Cytopathol. 2022;11(4):218-225. doi:10.1016/j.jasc.2022.03.001

28. Canberk S, Behzatoglu K, Caliskan CK, et al. The role of telecytology in the primary diagnosis of thyroid fine-needle aspiration specimens. Acta Cytol. 2020;64(4):323-331. doi:10.1159/000503914

29. Archondakis S, Roma M, Kaladelfou E. Implementation of pre-captured videos for remote diagnosis of cervical cytology specimens. Cytopathology. 2021;32(3):338-343. doi:10.1111/cyt.12948

30. Lee ES, Kim IS, Choi JS, et al. Accuracy and reproducibility of telecytology diagnosis of cervical smears. A tool for quality assurance programs. Am J Clin Pathol. 2003;119(3):356-360. doi:10.1309/7ytvag4xnr48t75h

31. Dietz RL, Hartman DJ, Pantanowitz L. Systematic review of the use of telepathology during intraoperative consultation. Am J Clin Pathol. 2020;153(2):198-209. doi:10.1093/ajcp/aqz155

32. Bauer TW, Slaw RJ, McKenney JK, Patil DT. Validation of whole slide imaging for frozen section diagnosis in surgical pathology. J Pathol Inform. 2015;6:49. Published 2015 Aug 31. doi:10.4103/2153-3539.163988

33. Vosoughi A, Smith PT, Zeitouni JA, et al. Frozen section evaluation via dynamic real-time nonrobotic telepathology system in a university cancer center by resident/faculty cooperation team. Hum Pathol. 2018;78:144-150. doi:10.1016/j.humpath.2018.04.012

34. Mahe E, Ara S, Bishara M, et al. Intraoperative pathology consultation: error, cause and impact. Can J Surg. 2013;56(3):E13-E18. doi:10.1503/cjs.011112

35. Farahani N, Parwani AV, Pantanowitz L. Whole slide imaging in pathology: advantages, limitations, and emerging perspectives. Pathol Lab Med Int. 2015;7:23-33. doi:10.2147/PLMI.S59826

36. Thorstenson S, Molin J, Lundström C. Implementation of large-scale routine diagnostics using whole slide imaging in Sweden: digital pathology experiences 2006-2013. J Pathol Inform. 2014;5(1):14. Published 2014 Mar 28. doi:10.4103/2153-3539.129452

37. Pantanowitz L, Wiley CA, Demetris A, et al. Experience with multimodality telepathology at the University of Pittsburgh Medical Center. J Pathol Inform. 2012;3:45. doi:10.4103/2153-3539.104907

38. Al Habeeb A, Evans A, Ghazarian D. Virtual microscopy using whole-slide imaging as an enabler for teledermatopathology: a paired consultant validation study. J Pathol Inform. 2012;3:2. doi:10.4103/2153-3539.93399

39. Al-Janabi S, Huisman A, Vink A, et al. Whole slide images for primary diagnostics in dermatopathology: a feasibility study. J Clin Pathol. 2012;65(2):152-158. doi:10.1136/jclinpath-2011-200277

40. Nielsen PS, Lindebjerg J, Rasmussen J, Starklint H, Waldstrøm M, Nielsen B. Virtual microscopy: an evaluation of its validity and diagnostic performance in routine histologic diagnosis of skin tumors. Hum Pathol. 2010;41(12):1770-1776. doi:10.1016/j.humpath.2010.05.015

41. Leinweber B, Massone C, Kodama K, et al. Teledermatopathology: a controlled study about diagnostic validity and technical requirements for digital transmission. Am J Dermatopathol. 2006;28(5):413-416. doi:10.1097/01.dad.0000211523.95552.86

42. Koch LH, Lampros JN, Delong LK, Chen SC, Woosley JT, Hood AF. Randomized comparison of virtual microscopy and traditional glass microscopy in diagnostic accuracy among dermatology and pathology residents. Hum Pathol. 2009;40(5):662-667. doi:10.1016/j.humpath.2008.10.009

43. Farris AB, Cohen C, Rogers TE, Smith GH. Whole slide imaging for analytical anatomic pathology and telepathology: practical applications today, promises, and perils. Arch Pathol Lab Med. 2017;141(4):542-550. doi:10.5858/arpa.2016-0265-SA