New CLTI Global Guidelines Available

On May 31, new global guidelines on the best ways to treat Chronic Limb-Threatening Ischemia were co-published in the Journal of Vascular Surgery and the European Journal of Vascular and Endovascular Surgery. This comes after four years of collaboration between vascular experts from around the world. According to the SVS’ own Dr. Conte, a co-editor, the group created a unique practice guideline that reflects the spectrum of the diseases and the approaches seen worldwide. Read the guidelines in the JVS here.

Tailored intervention improves asthma self-management for older patients

A needs- and barriers-based intervention that addressed psychosocial, physical, cognitive, and environmental barriers to self-management of asthma for older adults was successful in improving asthma outcomes and management, a recent trial has shown.

“This study demonstrates the value of patient centeredness and care coaching in supporting older adults with asthma and for ongoing efforts to engage patients in care delivery design and personalization,” Alex D. Federman, MD, of the division of general internal medicine at Icahn School of Medicine at Mount Sinai, New York, and colleagues wrote in their study, which was published in JAMA Internal Medicine. “It also highlights the challenges of engaging vulnerable populations in self-management support, including modest retention rates and reduced impact over time despite repeated encounters designed to sustain its effects.”

The researchers said older adults often have difficulty with self-management tasks like inhaler technique and use of inhaled corticosteroids, which can be caused by various psychosocial, physical, cognitive, or environmental barriers. However, self-management tools have not traditionally been built around a patient's specific problems, as opposed to generalized training, they noted.

For the SAMBA trial, Dr. Federman and colleagues enrolled 391 patients who were randomized to receive a home-based intervention, a clinic-based intervention, or usual care. In the intervention arms, an asthma care coach would identify the barriers to asthma control, train the patient in areas of improvement, and provide reinforcement when necessary. Patients were aged at least 60 years (15.1% men), had uncontrolled asthma, lived in New York City, and were enrolled between February 2014 and December 2017. Researchers used the Mini Asthma Quality of Life Questionnaire, Asthma Control Test, metered dose inhaler technique, Medication Adherence Rating Scale, and visits to the emergency room to compare outcomes between the interventions and usual care, and between home and clinic care. The data were analyzed using the "difference-in-differences" statistical technique, which compares the change over time in one group with the change over time in another.
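As a rough illustration of the difference-in-differences idea described above, the following minimal sketch subtracts the control group's change from the intervention group's change. The function name and all numbers are hypothetical and chosen only for illustration; they are not trial data, and the published analysis may have relied on regression models rather than simple means.

```python
def difference_in_differences(treat_pre, treat_post, ctrl_pre, ctrl_post):
    """Return the intervention group's change minus the control group's change."""
    treat_change = treat_post - treat_pre
    ctrl_change = ctrl_post - ctrl_pre
    return treat_change - ctrl_change


# Hypothetical mean asthma control scores (illustrative only, not SAMBA data).
did = difference_in_differences(
    treat_pre=14.0, treat_post=17.0,  # intervention group improves by 3 points
    ctrl_pre=14.0, ctrl_post=15.0,    # control group improves by 1 point
)
print(did)
```

A positive value here means the intervention group improved more than the control group over the same period, which is why improvement in the control group shrinks the estimated effect.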

They found significantly better asthma control scores in the intervention groups than in the control group at 3 months (difference-in-differences, 1.2; 95% confidence interval, 0.2-2.2; P = .02) and 6 months (D-in-Ds, 1.0; 95% CI, 0.0-2.1; P = .049), but the difference was no longer significant at 12 months (D-in-Ds, 0.6; 95% CI, −0.5 to 1.8; P = .28). Quality of life was significantly improved in the intervention group, compared with control patients (overall effect, chi-squared = 10.5, with 4 degrees of freedom; P = .01), as were adherence to medication (overall effect, chi-squared = 9.5, with 4 degrees of freedom; P = .049) and inhaler technique as measured by correctly completed steps at 12 months (75% vs. 58%). Visits to the emergency room were also lower in the intervention group, compared with the control group (6.2% vs. 12.7%; adjusted odds ratio, 0.8; 95% CI, 0.6-0.99; both P = .03). The researchers noted there were no significant differences between home care and clinic care.

Potential limitations of the study included lower-than-planned statistical power, 70% retention in the intervention arms, low generalizability of the findings, and lack of blinding on the part of research assistants, as well as some improvement in asthma control and outcomes in the control group.

This study was funded in part by the Patient-Centered Outcomes Research Institute. Coauthor Nandini Shroff reported grants from the Patient-Centered Outcomes Research Institute; Michael S. Wolf reported grants from Eli Lilly; and Juan P. Wisnivesky reported personal fees from Sanofi, Quintiles, and Banook, and grants from Sanofi and Quorum. The other authors reported no relevant conflicts of interest.

SOURCE: Federman AD et al. JAMA Intern Med. 2019; doi: 10.1001/jamainternmed.2019.1201.

FROM JAMA INTERNAL MEDICINE

Induced seizures as effective as spontaneous in identifying epileptic generator

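Seizures induced by cortical stimulation appear to be as effective as spontaneous seizures in identifying the epileptic generator, according to a study of patients with focal drug-resistant epilepsy.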

“This finding might lead to a more time-efficient intracranial presurgical investigation of focal epilepsy by reducing the need to record spontaneous seizures,” wrote Carolina Cuello Oderiz, MD, formerly of McGill University, and her coauthors. The study was published in JAMA Neurology.

To determine whether cortical stimulation-induced seizures and subsequent removal of the informed seizure-onset zone (SOZ) could lead to good surgical outcomes, the researchers selected 103 patients with focal drug-resistant epilepsy who underwent stereoelectroencephalography (SEEG). All participants had to have undergone cortical stimulation during SEEG, followed by an open epilepsy surgical procedure with a minimum 1-year follow-up. Complete brain imaging for exact localization of individual electrode contacts and the resection cavity was also required.

Of the 103 patients, 59 (57.3%) had cortical stimulation-induced seizures. The percentage of these patients in the good outcome group was higher than in the poor outcome group (70.5% versus 47.5%). The median percentage of resected cortical stimulation-informed SOZ contacts was also higher in the good than in the poor outcome group (63.2% versus 33.3%). The results were similar for spontaneous seizures, where the median percentage of resected contacts of the spontaneous SOZ was 57.1% in the good outcome group and 32.7% in the poor outcome group.

The coauthors noted their study’s limitations, including the exclusion of many patients due to the need for high-resolution neuroimaging and sufficient postsurgical imaging and follow-up. They added that the strict criteria were “key to the main outcome of this study,” however, and noted that generalizability of the data was supported by similar rates in excluded patients.

The study was supported by grants from the Canadian Institute of Health Research and the Savoy Epilepsy Foundation. Numerous authors reported receiving grants, personal fees, and other funding from organizations like the Montreal Neurological Institute and various pharmaceutical companies.

Dr. Cuello Oderiz is now at SUNY Upstate Medical University, Syracuse, N.Y.

SOURCE: Cuello Oderiz C et al. JAMA Neurol. 2019 Jun 10. doi: 10.1001/jamaneurol.2019.1464.

FROM JAMA NEUROLOGY

Key clinical point: Seizures induced by cortical stimulation and spontaneous seizures both led to a similar percentage of good surgical outcomes in patients with epilepsy.

Major finding: The percentage of patients who had cortical stimulation-induced seizures was higher in the good outcome group than in the poor outcome group (70.5% versus 47.5%).

Study details: A cohort study of 103 patients with focal drug-resistant epilepsy who underwent stereoelectroencephalography.

Disclosures: The study was supported by grants from the Canadian Institute of Health Research and the Savoy Epilepsy Foundation. Numerous authors reported receiving grants, personal fees, and other funding from organizations like the Montreal Neurological Institute and various pharmaceutical companies.

Source: Cuello Oderiz C et al. JAMA Neurol. 2019 Jun 10. doi: 10.1001/jamaneurol.2019.1464.

How effective is spironolactone for treating resistant hypertension?

EVIDENCE SUMMARY

A 2017 meta-analysis of 4 RCTs (869 patients) evaluated the effectiveness of prescribing spironolactone for patients with resistant hypertension, defined as above-goal blood pressure (BP) despite treatment with at least 3 BP-lowering drugs (at least 1 of which was a diuretic).1 All 4 trials compared spironolactone 25 to 50 mg/d with placebo. Follow-up periods ranged from 8 to 16 weeks. The primary outcomes were systolic and diastolic BPs, which were evaluated in the office, at home, or with an ambulatory monitor.

Spironolactone markedly lowers systolic and diastolic BP

A statistically significant reduction in SBP occurred in the spironolactone group compared with the placebo group (weighted mean difference [WMD] = −16.7 mm Hg; 95% confidence interval [CI], −27.5 to −5.8 mm Hg). DBP also decreased (WMD = −6.11 mm Hg; 95% CI, −9.34 to −2.88 mm Hg).

Because significant heterogeneity was found in the initial pooled results (I2 = 96% for SBP; I2 = 85% for DBP), investigators performed an analysis that excluded a single study with a small sample size. The re-analysis continued to show significant reductions in SBP and DBP for spironolactone compared with placebo (SBP: WMD = −10.8 mm Hg; 95% CI, −13.16 to −8.43 mm Hg; DBP: WMD = −4.62 mm Hg; 95% CI, −6.05 to −3.2 mm Hg; I2 = 35%), confirming that the excluded trial was the source of heterogeneity in the initial analysis and that spironolactone continued to significantly lower BP for the treatment group compared with controls.
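To make the sensitivity analysis above concrete, here is a minimal sketch of how a pooled weighted mean difference and the I2 (I-squared) heterogeneity statistic can be computed, and how dropping one outlying trial changes both. It assumes a fixed-effect, inverse-variance model, which may differ from the model the meta-analysis authors used. The function name and all trial values are hypothetical; none are taken from the cited studies.

```python
def pooled_wmd_and_i2(effects, std_errors):
    """Inverse-variance pooled mean difference and I2 (percent) from per-trial data."""
    weights = [1.0 / se ** 2 for se in std_errors]
    pooled = sum(w * e for w, e in zip(weights, effects)) / sum(weights)
    # Cochran's Q: weighted squared deviations of each trial from the pooled estimate.
    q = sum(w * (e - pooled) ** 2 for w, e in zip(weights, effects))
    df = len(effects) - 1
    # I2 is the share of total variation attributed to heterogeneity, floored at 0.
    i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0
    return pooled, i2


# Hypothetical systolic BP differences in mm Hg (illustrative only).
effects = [-10.0, -12.0, -30.0]   # the third trial is an outlier
std_errors = [1.0, 1.0, 1.0]

pooled_all, i2_all = pooled_wmd_and_i2(effects, std_errors)
pooled_trimmed, i2_trimmed = pooled_wmd_and_i2(effects[:2], std_errors[:2])
print(round(pooled_all, 2), round(i2_all, 1))
print(round(pooled_trimmed, 2), round(i2_trimmed, 1))
```

In this toy example, removing the outlying trial moves the pooled estimate toward the remaining trials and lowers I2, the same pattern the re-analysis reports.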

Add-on treatment with spironolactone also reduces BP

A 2016 meta-analysis of 5 RCTs with a total of 553 patients examined the effectiveness of add-on treatment with spironolactone (25-50 mg/d) for patients with resistant hypertension, defined as failure to achieve BP < 140/90 mm Hg despite treatment with 3 or more BP-lowering drugs, including one diuretic.2 Spironolactone was compared with placebo in 4 trials and with ramipril in the remaining study. The follow-up periods were 8 to 16 weeks. Researchers separated BP outcomes into 24-hour ambulatory systolic/diastolic BPs and office systolic/diastolic BPs.

The 24-hour ambulatory BPs were significantly lower in the spironolactone group compared with the control group (24-hour SBP: WMD = −10.5 mm Hg; 95% CI, −12.3 to −8.71 mm Hg; 24-hour DBP: WMD = −4.09 mm Hg; 95% CI, −5.28 to −2.91 mm Hg). No significant heterogeneity was noted in these analyses.

Office-based BPs also were markedly reduced in spironolactone groups compared with controls (office SBP: WMD = −17 mm Hg; 95% CI, −25 to −8.95 mm Hg; office DBP: WMD = −6.18 mm Hg; 95% CI, −9.3 to −3.05 mm Hg). Because the office-based BP data showed significant heterogeneity (I2 = 94% for SBP and 84.2% for DBP), 2 studies judged to be of lower quality because they lacked detailed methodology were excluded from analysis. The re-analysis still showed statistically significant reductions in SBP (WMD = −11.7 mm Hg; 95% CI, −14.4 to −8.95 mm Hg) and DBP (WMD = −4.07 mm Hg; 95% CI, −5.6 to −2.54 mm Hg) compared with controls. Heterogeneity also decreased when the 2 studies were excluded (I2 = 21% for SBP and I2 = 59% for DBP).

How spironolactone compares with alternative drugs

A 2017 meta-analysis of 5 RCTs with 662 patients evaluated the effectiveness of spironolactone (25-50 mg/d) on resistant hypertension in patients taking 3 medications compared with a control group—placebo in 3 trials, placebo or bisoprolol (5-10 mg) in 1 trial, and an alternative treatment (candesartan 8 mg, atenolol 100 mg, or alpha methyldopa 750 mg) in 1 trial.3 Follow-up periods ranged from 4 to 16 weeks. Researchers evaluated changes in office and 24-hour ambulatory or home BP and completed separate analyses of pooled data for spironolactone compared with placebo groups, and spironolactone compared with alternative treatment groups.

Investigators found a statistically significant reduction in office SBP and DBP among patients taking spironolactone compared with control groups (SBP: WMD = −15.7 mm Hg; 95% CI, −20.5 to −11 mm Hg; DBP: WMD = −6.21 mm Hg; 95% CI, −8.33 to −4.1 mm Hg). A significant decrease also occurred in 24-hour ambulatory or home SBP and DBP (SBP: MD = −8.7 mm Hg; 95% CI, −8.79 to −8.62 mm Hg; DBP: WMD = −4.12 mm Hg; 95% CI, −4.48 to −3.75 mm Hg).

Patients treated with spironolactone showed a marked decrease in home SBP compared with alternative drug groups (WMD = −4.5 mm Hg; 95% CI, −4.63 to −4.37 mm Hg), but alternative drugs reduced home DBP significantly more than spironolactone (WMD = 0.6 mm Hg; 95% CI, 0.55-0.65 mm Hg). Marked heterogeneity was found in these analyses, and the authors also noted that reductions in SBP are more clinically relevant than decreases in DBP.

RECOMMENDATIONS

The 2017 American Heart Association/American College of Cardiology evidence-based guideline recommends considering adding a mineralocorticoid receptor antagonist to treatment regimens for resistant hypertension when: office BP remains ≥ 130/80 mm Hg; the patient is prescribed at least 3 antihypertensive agents at optimal doses including a diuretic; pseudoresistance (nonadherence, inaccurate measurements) is excluded; reversible lifestyle factors have been addressed; substances that interfere with BP treatment (such as nonsteroidal anti-inflammatory drugs and oral contraceptive pills) are excluded; and screening for secondary causes of hypertension is complete.4

The United Kingdom’s National Institute for Health and Care Excellence (NICE) evidence-based guideline recommends considering spironolactone 25 mg/d to treat resistant hypertension if the patient’s potassium level is 4.5 mmol/L or lower and BP is higher than 140/90 mm Hg despite treatment with an optimal or best-tolerated dose of an angiotensin-converting enzyme inhibitor or angiotensin II receptor blocker plus a calcium-channel blocker and diuretic.5

Editor’s takeaway

The evidence from multiple RCTs convincingly shows the effectiveness of spironolactone. Despite the SOR of C because of a disease-oriented outcome, we do treat to blood pressure goals, and therefore, spironolactone is a good option.

1. Zhao D, Liu H, Dong P, et al. A meta-analysis of add-on use of spironolactone in patients with resistant hypertension. Int J Cardiol. 2017;233:113-117.

2. Wang C, Xiong B, Huang J. Efficacy and safety of spironolactone in patients with resistant hypertension: a meta-analysis of randomised controlled trials. Heart Lung Circ. 2016;25:1021-1030.

3. Liu L, Xu B, Ju Y. Addition of spironolactone in patients with resistant hypertension: a meta-analysis of randomized controlled trials. Clin Exp Hypertens. 2017;39:257-263.

4. Whelton PK, Carey RM, Aronow WS, et al. 2017 ACC/AHA/AAPA/ABC/ACPM/AGS/APhA/ASH/ASPC/NMA/PCNA Guideline for the prevention, detection, evaluation, and management of high blood pressure in adults: a report of the American College of Cardiology/American Heart Association Task Force on Clinical Practice Guidelines. Hypertension. 2017. https://doi.org/10.1161/HYP.0000000000000065. Accessed June 6, 2019.

5. National Institute for Health and Care Excellence. Hypertension in adults: diagnosis and management. Clinical guideline [CG127]. August 2011. https://www.nice.org.uk/guidance/cg127/chapter/1-guidance#initiating-and-monitoring-antihypertensive-drug-treatment-including-blood-pressure-targets-2. Accessed June 6, 2019.

EVIDENCE SUMMARY

A 2017 meta-analysis of 4 RCTs (869 patients) evaluated the effectiveness of prescribing spironolactone for patients with resistant hypertension, defined as above-goal blood pressure (BP) despite treatment with at least 3 BP-lowering drugs (at least 1 of which was a diuretic).1 All 4 trials compared spironolactone 25 to 50 mg/d with placebo. Follow-up periods ranged from 8 to 16 weeks. The primary outcomes were systolic and diastolic BPs, which were evaluated in the office, at home, or with an ambulatory monitor.

Spironolactone markedly lowers systolic and diastolic BP

A statistically significant reduction in SBP occurred in the spironolactone group compared with the placebo group (weighted mean difference [WMD] = −16.7 mm Hg; 95% confidence interval [CI], −27.5 to −5.8 mm Hg). DBP also decreased (WMD = −6.11 mm Hg; 95% CI, −9.34 to −2.88 mm Hg).

Because significant heterogeneity was found in the initial pooled results (I2 = 96% for SBP; I2 = 85% for DBP), investigators performed an analysis that excluded a single study with a small sample size. The re-analysis continued to show significant reductions in SBP and DBP for spironolactone compared with placebo (SBP: WMD = −10.8 mm Hg; 95% CI, −13.16 to −8.43 mm Hg; DBP: WMD = −4.62 mm Hg; 95% CI, −6.05 to −3.2 mm Hg; I2 = 35%), confirming that the excluded trial was the source of heterogeneity in the initial analysis and that spironolactone continued to significantly lower BP for the treatment group compared with controls.

Add-on treatment with spironolactone also reduces BP

A 2016 meta-analysis of 5 RCTs with a total of 553 patients examined the effectiveness of add-on treatment with spironolactone (25-50 mg/d) for patients with resistant hypertension, defined as failure to achieve BP < 140/90 mm Hg despite treatment with 3 or more BP-lowering drugs, including one diuretic.2 Spironolactone was compared with placebo in 4 trials and with ramipril in the remaining study. The follow-up periods were 8 to 16 weeks. Researchers separated BP outcomes into 24-hour ambulatory systolic/diastolic BPs and office systolic/diastolic BPs.

The 24-hour ambulatory BPs were significantly lower in the spironolactone group compared with the control group (24-hour SBP: WMD = −10.5 mm Hg; 95% CI, −12.3 to −8.71 mm Hg; 24-hour DBP: WMD = −4.09 mm Hg; 95% CI, −5.28 to −2.91 mm Hg). No significant heterogeneity was noted in these analyses.

Office-based BPs also were markedly reduced in spironolactone groups compared with controls (office SBP: WMD = −17 mm Hg; 95% CI, −25 to −8.95 mm Hg); office DBP: WMD = −6.18 mm Hg; 95% CI, −9.3 to −3.05 mm Hg). Because the office-based BP data showed significant heterogeneity (I2 = 94% for SBP and 84.2% for DBP), 2 studies determined to be of lower quality caused by lack of detailed methodology were excluded from analysis, yielding continued statistically significant reductions in SBP (WMD = −11.7 mm Hg; 95% CI, −14.4 to −8.95 mm Hg) and DBP (WMD = −4.07 mm Hg; 95% CI, −5.6 to −2.54 mm Hg) compared with controls. Heterogeneity also decreased when the 2 studies were excluded (I2 = 21% for SBP and I2 = 59% for DBP).

How spironolactone compares with alternative drugs

A 2017 meta-analysis of 5 RCTs with 662 patients evaluated the effectiveness of spironolactone (25-50 mg/d) on resistant hypertension in patients taking 3 medications compared with a control group—placebo in 3 trials, placebo or bisoprolol (5-10 mg) in 1 trial, and an alternative treatment (candesartan 8 mg, atenolol 100 mg, or alpha methyldopa 750 mg) in 1 trial.3 Follow-up periods ranged from 4 to 16 weeks. Researchers evaluated changes in office and 24-hour ambulatory or home BP and completed separate analyses of pooled data for spironolactone compared with placebo groups, and spironolactone compared with alternative treatment groups.

Investigators found a statistically significant reduction in office SBP and DBP among patients taking spironolactone compared with control groups (SBP: WMD = −15.7 mm Hg; 95% CI, −20.5 to −11 mm Hg; DBP: WMD = −6.21 mm Hg; 95% CI, −8.33 to −4.1 mm Hg). A significant decrease also occurred in 24-hour ambulatory or home SBP and DBP (SBP: MD = −8.7 mm Hg; 95% CI, −8.79 to −8.62 mm Hg; DBP: WMD = −4.12 mm Hg; 95% CI, −4.48 to −3.75 mm Hg).

Patients treated with spironolactone showed a marked decrease in home SBP compared with alternative drug groups (WMD = −4.5 mm Hg; 95% CI, −4.63 to −4.37 mm Hg), but alternative drugs reduced home DBP significantly more than spironolactone (WMD = 0.6 mm Hg; 95% CI, 0.55-0.65 mm Hg). Marked heterogeneity was found in these analyses, and the authors also noted that reductions in SBP are more clinically relevant than decreases in DBP.

RECOMMENDATIONS

The 2017 American Heart Association/American College of Cardiology evidence-based guideline recommends considering adding a mineralocorticoid receptor antagonist to treatment regimens for resistant hypertension when: office BP remains ≥ 130/80 mm Hg; the patient is prescribed at least 3 antihypertensive agents at optimal doses including a diuretic; pseudoresistance (nonadherence, inaccurate measurements) is excluded; reversible lifestyle factors have been addressed; substances that interfere with BP treatment (such as nonsteroidal anti-inflammatory drugs and oral contraceptive pills) are excluded; and screening for secondary causes of hypertension is complete.4

The United Kingdom’s National Institute for Health and Care Excellence (NICE) evidence-based guideline recommends considering spironolactone 25 mg/d to treat resistant hypertension if the patient’s potassium level is 4.5 mmol/L or lower and BP is higher than 140/90 mm Hg despite treatment with an optimal or best-tolerated dose of an angiotensin-converting enzyme inhibitor or angiotensin II receptor blocker plus a calcium-channel blocker and diuretic.5

Editor’s takeaway

The evidence from multiple RCTs convincingly shows that spironolactone lowers BP in patients with resistant hypertension. Although the SOR is C because BP is a disease-oriented outcome, we do treat to BP goals, so spironolactone is a good option.

1. Zhao D, Liu H, Dong P, et al. A meta-analysis of add-on use of spironolactone in patients with resistant hypertension. Int J Cardiol. 2017;233:113-117.

2. Wang C, Xiong B, Huang J. Efficacy and safety of spironolactone in patients with resistant hypertension: a meta-analysis of randomised controlled trials. Heart Lung Circ. 2016;25:1021-1030.

3. Liu L, Xu B, Ju Y. Addition of spironolactone in patients with resistant hypertension: a meta-analysis of randomized controlled trials. Clin Exp Hypertens. 2017;39:257-263.

4. Whelton PK, Carey RM, Aronow WS, et al. 2017 ACC/AHA/AAPA/ABC/ACPM/AGS/APhA/ASH/ASPC/NMA/PCNA Guideline for the prevention, detection, evaluation, and management of high blood pressure in adults: a report of the American College of Cardiology/American Heart Association Task Force on Clinical Practice Guidelines. Hypertension. 2017. https://doi.org/10.1161/HYP.0000000000000065. Accessed June 6, 2019.

5. National Institute for Health and Care Excellence. Hypertension in adults: diagnosis and management. Clinical guideline [CG127]. August 2011. https://www.nice.org.uk/guidance/cg127/chapter/1-guidance#initiating-and-monitoring-antihypertensive-drug-treatment-including-blood-pressure-targets-2. Accessed June 6, 2019.

EVIDENCE-BASED ANSWER:

Very effective. Spironolactone reduces systolic blood pressure (SBP) by 11 to 17 mm Hg and diastolic blood pressure (DBP) by up to 6 mm Hg in patients with resistant hypertension taking 3 or more medications (strength of recommendation [SOR]: C, meta-analysis of randomized controlled trials [RCTs] of disease-oriented evidence).

4-year-old girl • genital discomfort and dysuria • clitoral hood swelling • blood blister on the labia minora • Dx?

THE CASE

A 4-year-old girl presented to her pediatrician with genital discomfort and dysuria of 6 months’ duration. The patient’s mother said that 3 days earlier, she noticed a tear near the child’s clitoris and a scab on the labia minora that the mother attributed to minor trauma from scratching. The pediatrician was concerned about genital trauma from sexual abuse and referred the patient to the emergency department, where a report with child protective services (CPS) was filed. The mother reported that the patient and her 8-year-old sibling spent 3 to 4 hours a day with a babysitter, who was always supervised, and the parents had no concerns about possible sexual abuse.

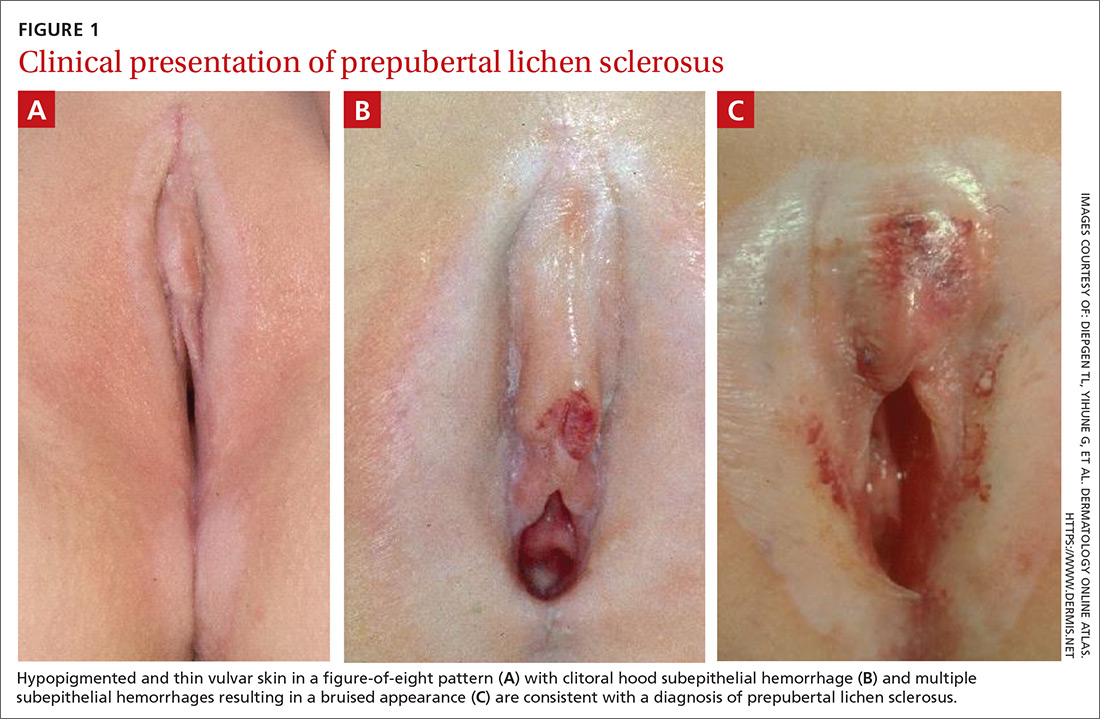

Physical examination by our institution’s Child Protection Team revealed clitoral hood swelling with subepithelial hemorrhages, a blood blister on the right labia minora, a fissure and subepithelial hemorrhages on the posterior fourchette, and a thin depigmented figure-of-eight lesion around the vulva and anus.

THE DIAGNOSIS

Since the clinical findings were consistent with prepubertal lichen sclerosus (LS), the CPS case was closed and the patient was referred to Pediatric Gynecology. Treatment with high-potency topical steroids was initiated with clobetasol ointment 0.05% twice daily for 2 weeks, then once daily for 2 weeks. She was then switched to triamcinolone ointment 0.01% twice daily for 2 weeks, then once daily for 2 weeks. These treatments were enough to stop the LS flare and decrease the anogenital itching.

DISCUSSION

Lichen sclerosus is a chronic inflammatory skin disease that primarily presents in the anogenital region; however, extragenital lesions on the upper extremities, thighs, and breasts have been reported in 15% to 20% of patients.1 Lichen sclerosus most commonly affects females as a result of low estrogen and may occur during puberty or following menopause, but it also is seen in males.1,2 The estimated prevalence of LS in prepubertal girls is 1 in 900.3 The effects of increased estrogen exposure on LS during puberty are not entirely clear. Lichen sclerosus previously was thought to improve with puberty, since it is not as common in women of reproductive age; however, studies have shown persistent symptoms after menarche in some patients.4-6

The pathogenesis of LS is multifactorial, likely with an autoimmune component, as it often is associated with other autoimmune findings such as thyroiditis, alopecia, pernicious anemia, and vitiligo.2 Diagnosis of prepubertal LS usually is made based on a review of the patient’s history and clinical examination. Presenting symptoms may include pruritus, skin irritation, vulvar pain, dysuria, bleeding from excoriations, fissures, and constipation.1,3,7

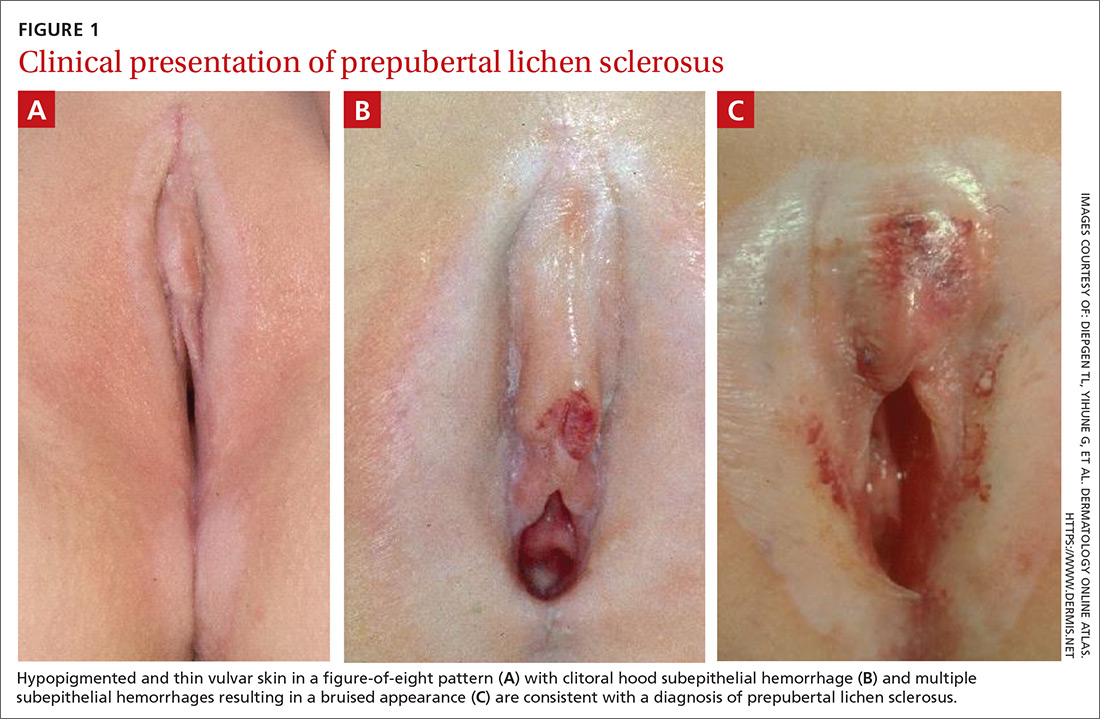

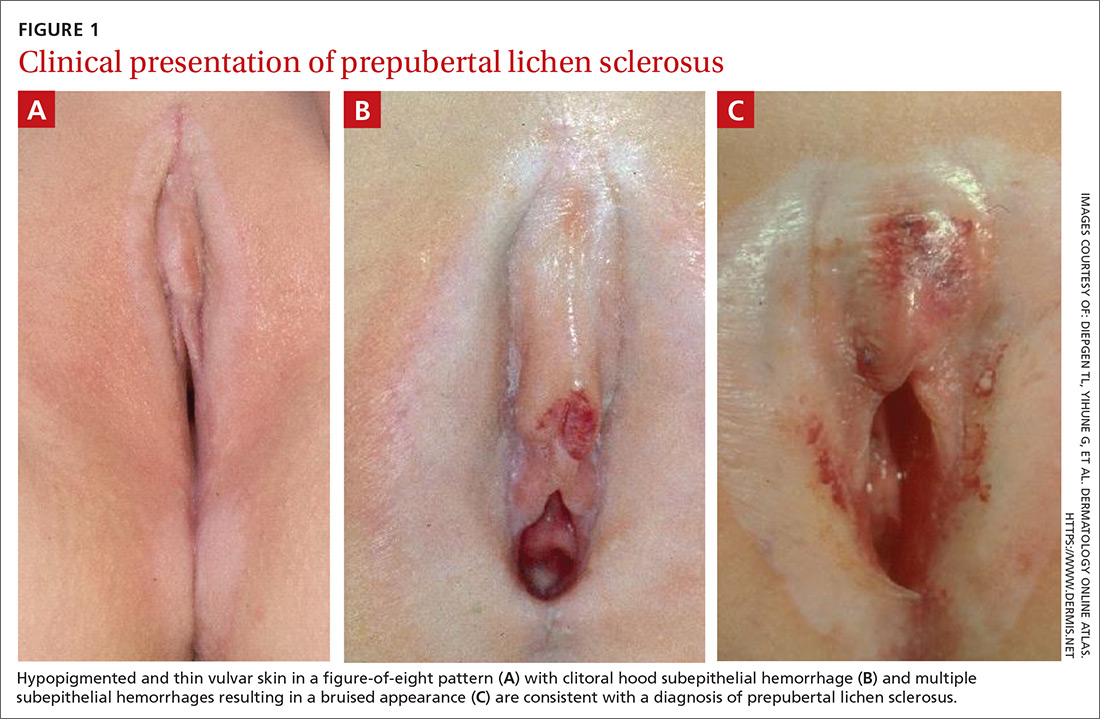

On physical examination, LS can present on the anogenital skin as smooth white spots or wrinkled, blotchy, atrophic patches. The skin around the vaginal opening and anus is thin and often is described as resembling parchment or cigarette paper in a figure-of-eight pattern (FIGURE 1A). Vulvar and anal fissures and subepithelial hemorrhages with the appearance of blood blisters also can be found (FIGURE 1B).8 Affected areas are fragile and susceptible to minor trauma, which may result in bruising or bleeding (FIGURE 1C).

Over time, scarring can occur and may result in disruption of the anogenital architecture—specifically loss of the labia minora, narrowing of the introitus, and burying of the clitoris.1,2 These changes can be similar to the scarring seen in postmenopausal women with LS.

The differential diagnosis for prepubertal LS includes vitiligo, lichen planus, lichen simplex chronicus, psoriasis, eczema, vulvovaginitis, contact dermatitis, and trauma.2,7 On average, it takes 1 to 2 years after onset of symptoms before a correct diagnosis of prepubertal LS is made, and trauma and/or sexual abuse often are first suspected.7,9 For clinicians who are unfamiliar with prepubertal LS, the clinical findings of anogenital bruising and bleeding understandably may be suggestive of abuse. It is important to note that diagnosis of LS does not preclude the possibility of sexual abuse; in some cases, LS can be triggered or exacerbated by anogenital trauma, known as the Koebner phenomenon.2

Treatment. After the diagnosis of prepubertal LS is established, the goals of treatment are to provide symptom relief and prevent scarring of the external genitalia. To our knowledge, there have been no randomized controlled trials for treatment of LS in prepubertal girls. In general, acute symptoms are treated with high-potency topical steroids, such as clobetasol propionate or betamethasone valerate, and treatment regimens are variable.7

LS has an unpredictable clinical course and there often are recurrences that require repeat courses of topical steroids.9 Since concurrent bacterial infection is common,10 genital cultures should be obtained prior to initiation of topical steroids if an infection is suspected.

Topical calcineurin inhibitors have been used successfully, but proof of their effectiveness is limited to case reports in the literature.7 Surgical treatment of LS typically is reserved for complications associated with symptomatic adhesions that are refractory to medical management.7,11 Vulvar hygiene is paramount to symptom control, and topical emollients can be used to manage minor irritation.7,8 In our patient, clobetasol and triamcinolone ointments were enough to stop the LS flare and decrease the anogenital itching.

THE TAKEAWAY

Although LS has very characteristic skin findings, the diagnosis continues to be challenging for physicians who are unfamiliar with this condition. Failure to recognize prepubertal LS not only delays diagnosis and treatment but also may lead to repeated genital examinations and investigation by CPS for suspected sexual abuse. As with any genital complaint in a prepubertal girl, diagnosis of LS should not preclude appropriate screening for sexual abuse. Although providers should be vigilant about potential sexual abuse, familiarity with skin conditions that mimic genital trauma is essential.

CORRESPONDENCE

Monica Rosen, MD, L4000 Women’s Hospital, 1500 E Medical Center Drive, SPC 5276, Ann Arbor, MI 48109

1. Powell JJ, Wojnarowska F. Lichen sclerosus. Lancet. 1999;353:1777-1783.

2. Murphy R. Lichen sclerosus. Dermatol Clin. 2010;28:707-715.

3. Powell J, Wojnarowska F. Childhood vulvar lichen sclerosus: an increasingly common problem. J Am Acad Dermatol. 2001;44:803-806.

4. Powell J, Wojnarowska F. Childhood vulvar lichen sclerosus. The course after puberty. J Reprod Med. 2002;47:706-709.

5. Smith SD, Fischer G. Childhood onset vulvar lichen sclerosus does not resolve at puberty: a prospective case series. Pediatr Dermatol. 2009;26:725-729.

6. Focseneanu MA, Gupta M, Squires KC, et al. The course of lichen sclerosus diagnosed prior to puberty. J Pediatr Adolesc Gynecol. 2013;26:153-155.

7. Bercaw-Pratt JL, Boardman LA, Simms-Cendan JS. Clinical recommendation: pediatric lichen sclerosus. J Pediatr Adolesc Gynecol. 2014;27:111-116.

8. Jenny C, Kirby P, Fuquay D. Genital lichen sclerosus mistaken for child sexual abuse. Pediatrics. 1989;83:597-599.

9. Dendrinos ML, Quint EH. Lichen sclerosus in children and adolescents. Curr Opin Obstet Gynecol. 2013;25:370-374.

10. Lagerstedt M, Karvinen K, Joki-Erkkila M, et al. Childhood lichen sclerosus—a challenge for clinicians. Pediatr Dermatol. 2013;30:444-450.

11. Gurumurthy M, Morah N, Gioffre G, et al. The surgical management of complications of vulval lichen sclerosus. Eur J Obstet Gynecol Reprod Biol. 2012;162:79-82.

1. Powell JJ, Wojnarowska F. Lichen sclerosus. Lancet. 1999;353:1777-1783.

2. Murphy R. Lichen sclerosus. Dermatol Clin. 2010;28:707-715.

3. Powell J, Wojnarowska F. Childhood vulvar lichen sclerosus: an increasingly common problem. J Am Acad Dermatol. 2001;44:803-806.

4. Powell J, Wojnarowska F. Childhood vulvar lichen sclerosus. The course after puberty. J Reprod Med. 2002;47:706-709.

5. Smith SD, Fischer G. Childhood onset vulvar lichen sclerosus does not resolve at puberty: a prospective case series. Pediatr Dermatol. 2009;26:725-729.

6. Focseneanu MA, Gupta M, Squires KC, et al. The course of lichen sclerosus diagnosed prior to puberty. J Pediatr Adolesc Gynecol. 2013;26:153-155.

7. Bercaw-Pratt JL, Boardman LA, Simms-Cendan JS. Clinical recommendation: pediatric lichen sclerosus. J Pediatr Adolesc Gynecol. 2014;27:111-116.

8. Jenny C, Kirby P, Fuquay D. Genital lichen sclerosus mistaken for child sexual abuse. Pediatrics. 1989;83:597-599.

9. Dendrinos ML, Quint EH. Lichen sclerosus in children and adolescents. Curr Opin Obstet Gynecol. 2013;25:370-374.

10. Lagerstedt M, Karvinen K, Joki-Erkkila M, et al. Childhood lichen sclerosus—a challenge for clinicians. Pediatr Dermatol. 2013;30:444-450.

11. Gurumurthy M, Morah N, Gioffre G, et al. The surgical management of complications of vulval lichen sclerosus. Eur J Obstet Gynecol Reprod Biol. 2012;162:79-82.

We all benefit from this powerful pairing

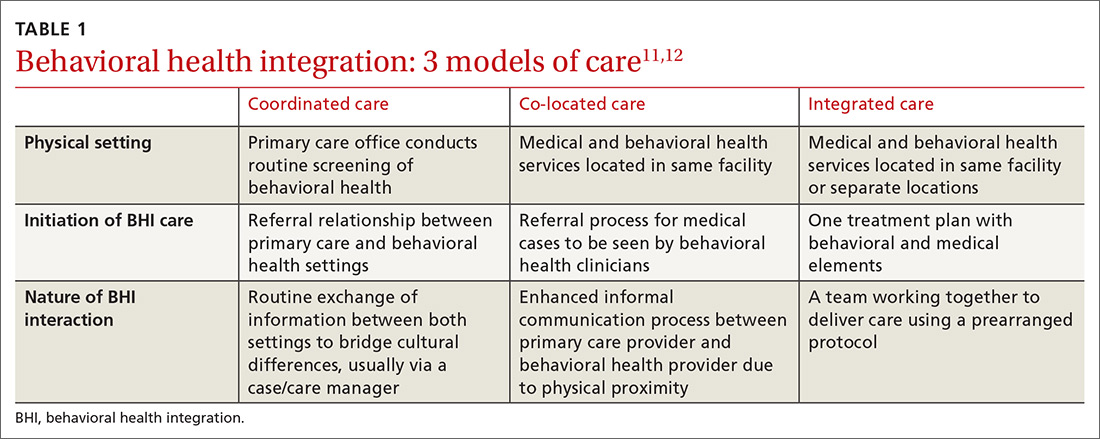

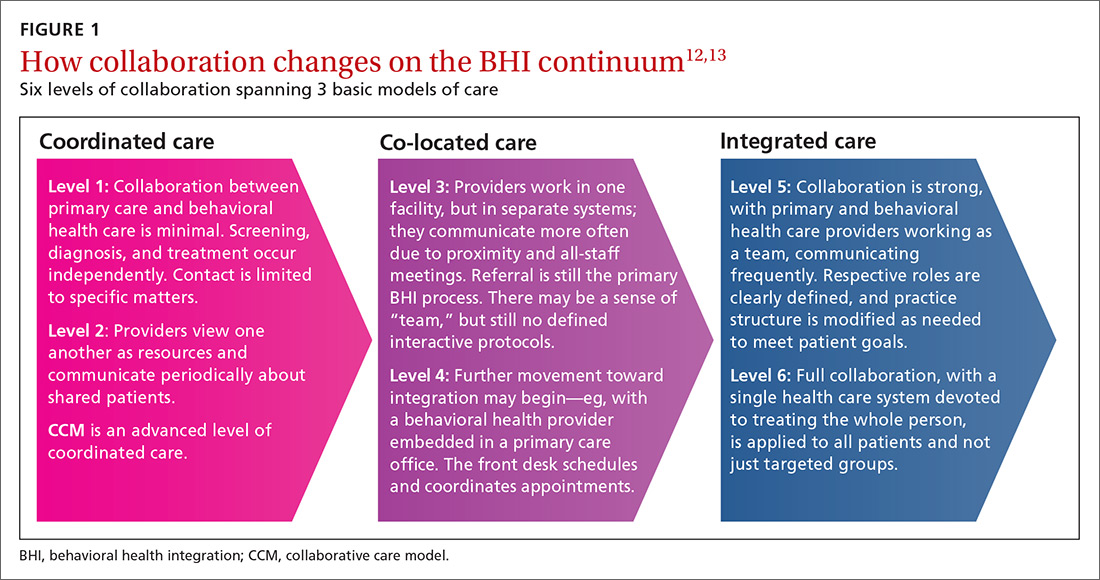

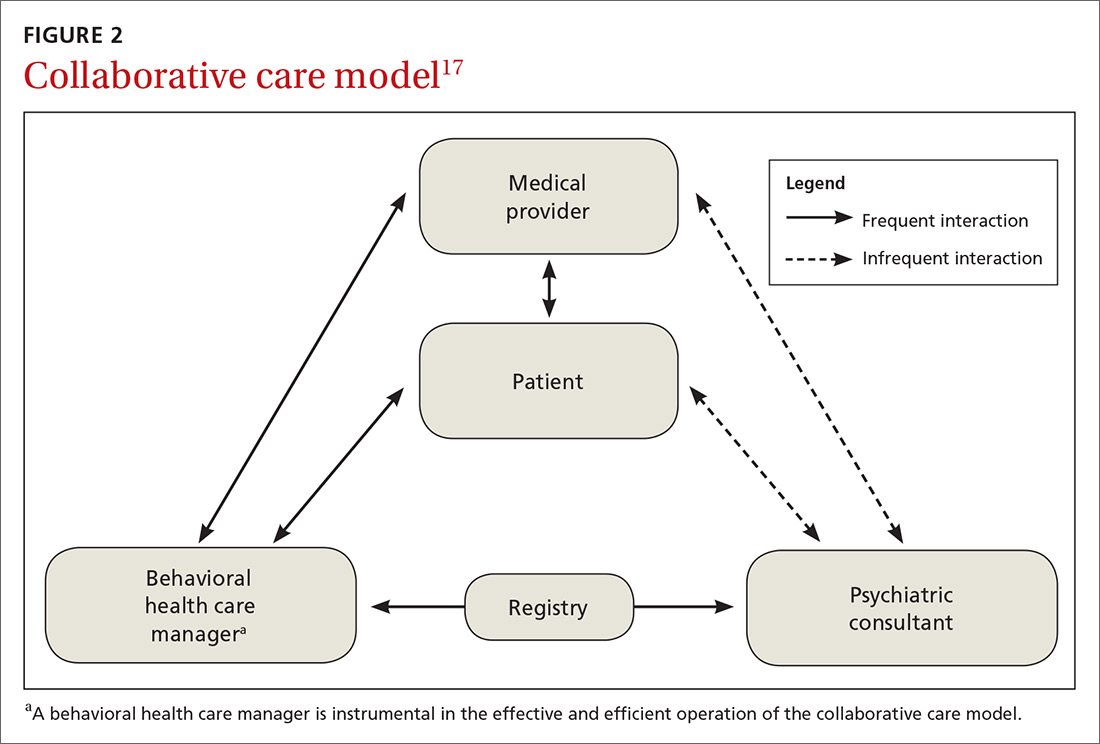

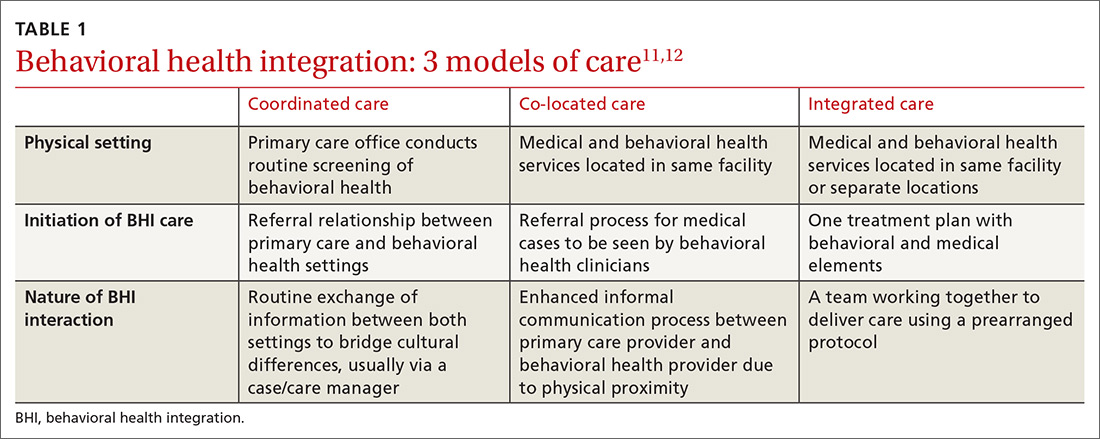

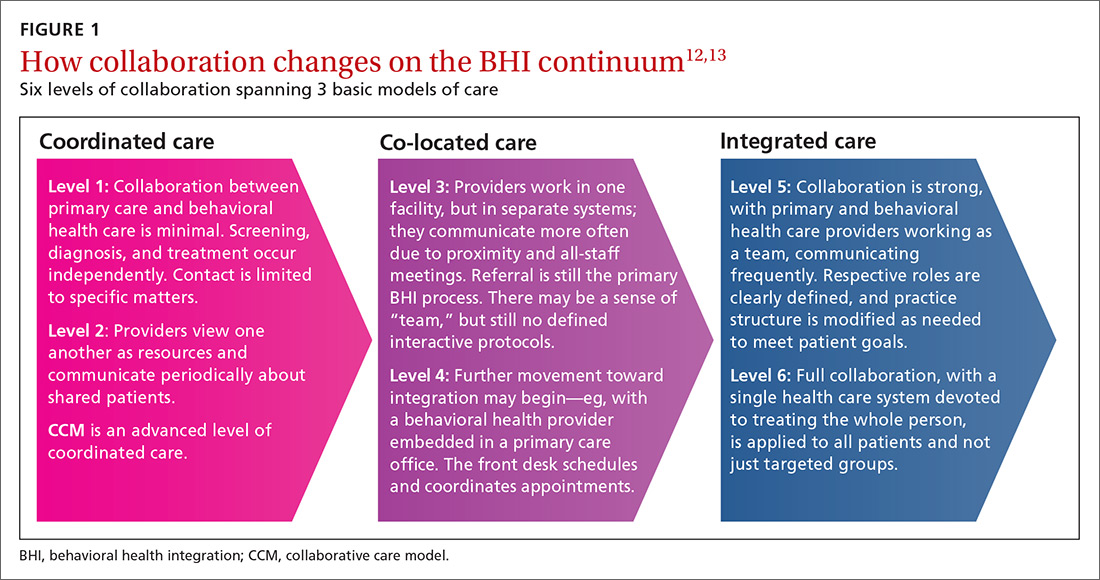

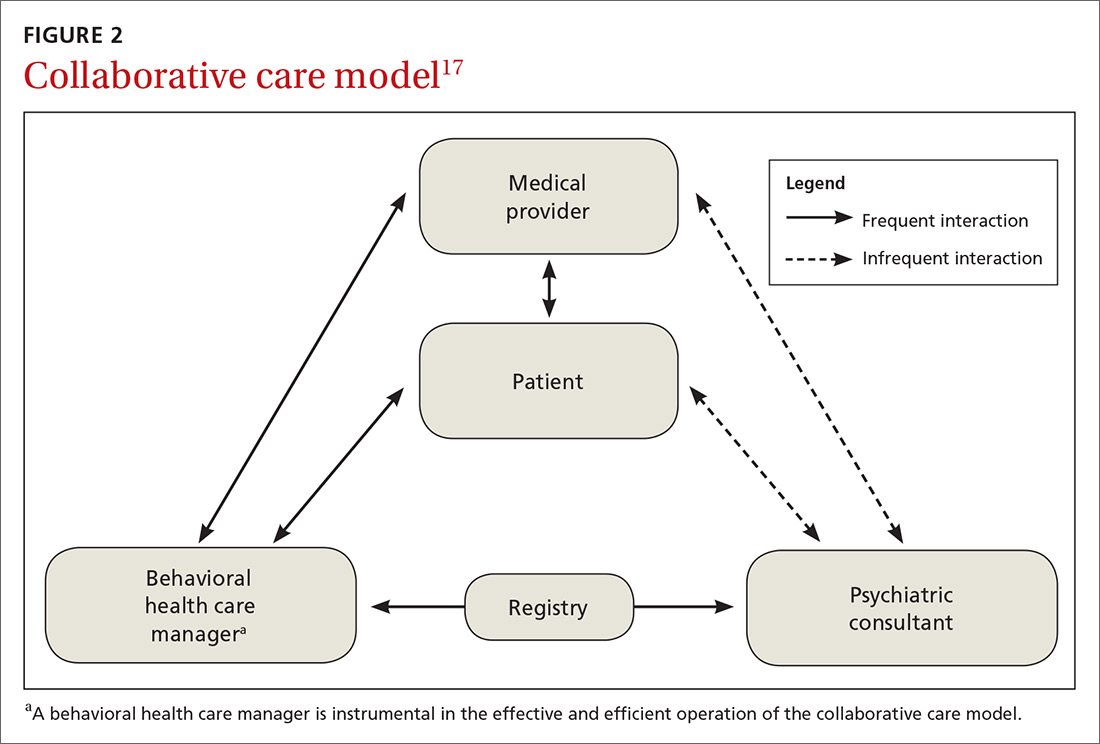

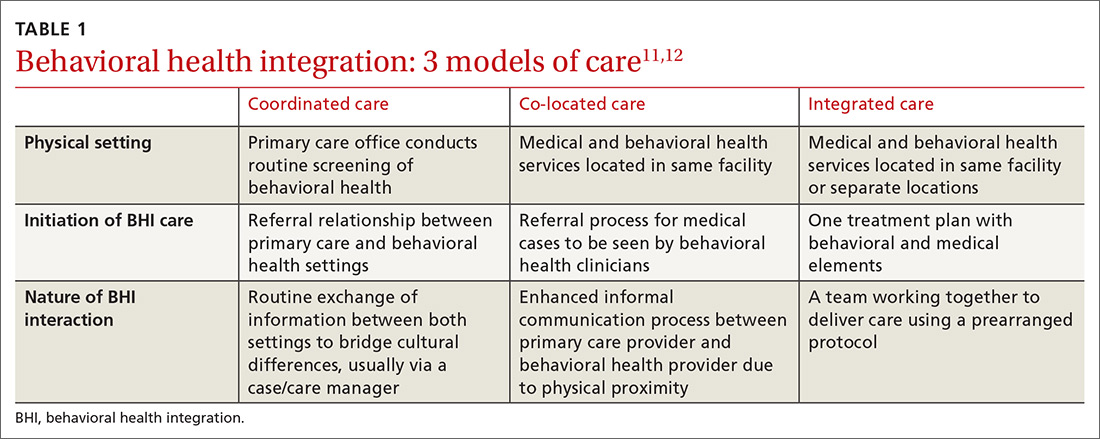

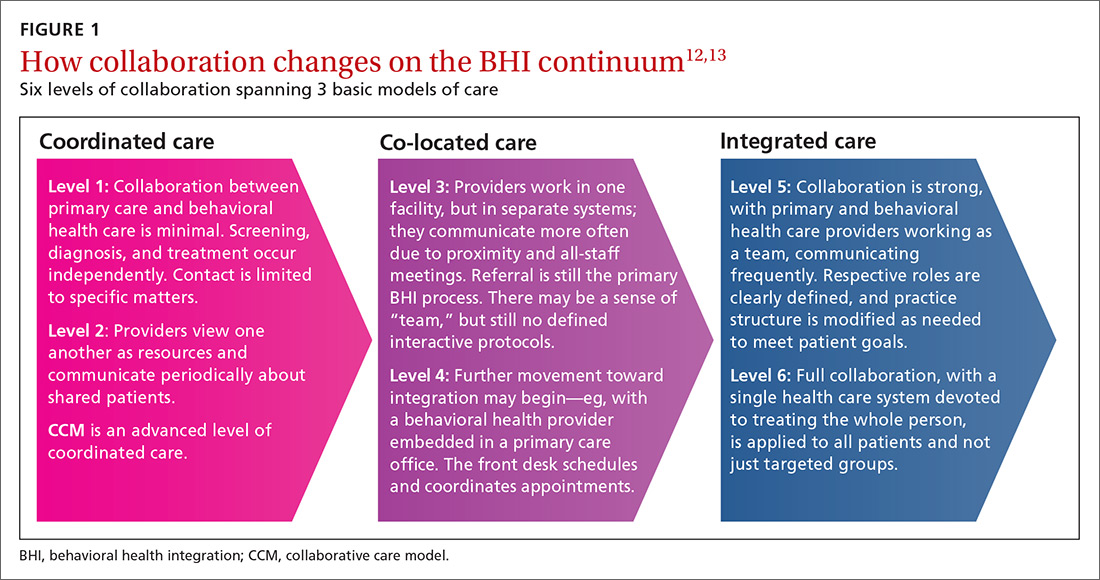

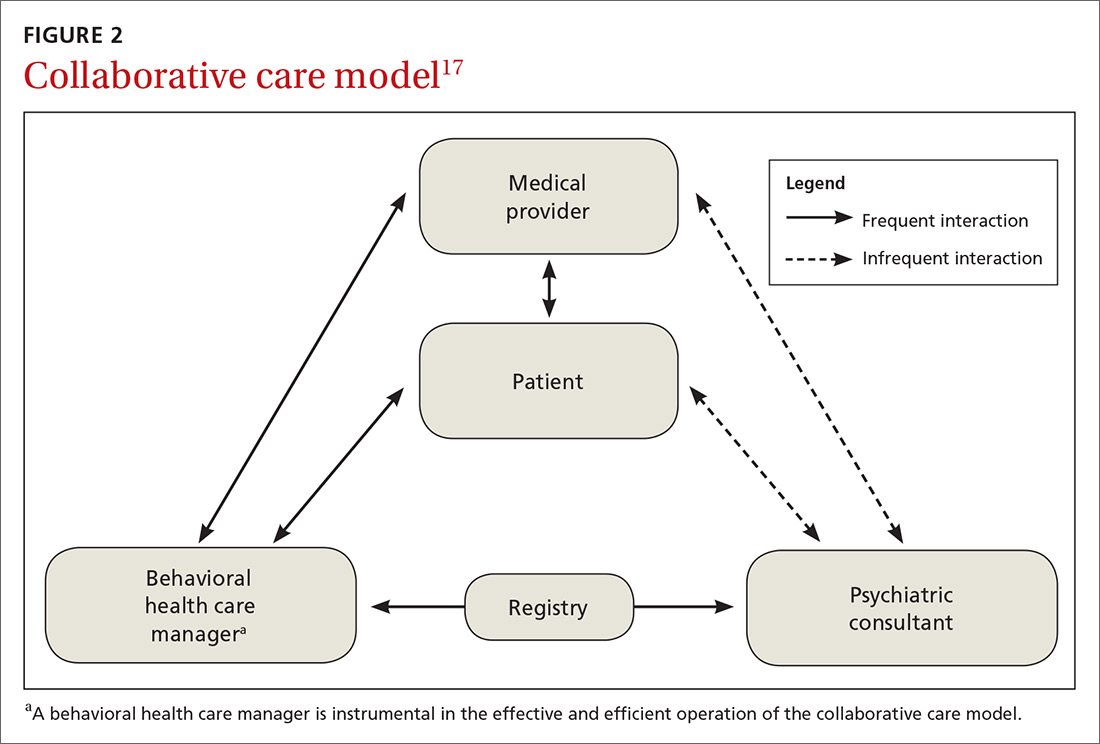

In this issue of JFP, Rajesh and colleagues present a scholarly review that details why it makes sense to integrate behavioral health into primary care. There is strong evidence that the presence of behavioral health care managers in primary care practices improves outcomes for patients with anxiety and depression. The mental health–trained care manager serves as a link between the primary care physician and mental health professional and can provide psychotherapy, as well.

A more integrated model, however, includes a full range of behavioral health services on site. Although not as well studied, co-location is a powerful pairing of physical and mental health treatment. Primary care physicians benefit because referral and feedback are immediate and seamless through warm hand-offs and easy access to medical and mental health notes in a common medical record. Patients benefit because they are more likely to engage with treatment when the physician introduces them to the mental health professional and expresses confidence in his or her abilities.

We know that trust improves treatment outcomes. What better way to encourage trust than with a warm smile and handshake when a patient is most vulnerable? In addition, integrating behavioral health into primary care helps patients avoid the stigma of going to a “mental health clinic.”

Integrating medical and mental health professionals into one practice is hardly a new idea and is spreading quickly in some parts of the country. It is perhaps no coincidence that Rajesh refers to a study of behavioral health integration in Colorado family practice offices. Recently, I (JH) presented a CME program to family physicians in Colorado, and, after reviewing recent studies of anxiety and depression, I asked how many participants had mental health professionals working in their practices. A full third raised their hands.

I (JH) had an excellent psychologist in my practice as far back as 1980, and he was an integral member of the care team. It seems that behavioral health integration has been a long time coming, and as a health care community we would be wise to spread this model of whole-person care to all primary care practices.

Rapidly growing lesions on the forehead

A 97-year-old woman with a history of atrial fibrillation and nonmelanoma skin cancer presented to our clinic from an assisted living facility with a several-month history of rapidly growing forehead lesions. She denied symptoms, other than some bleeding and crusting, but was concerned about their appearance. She reported a notable history of sun exposure.

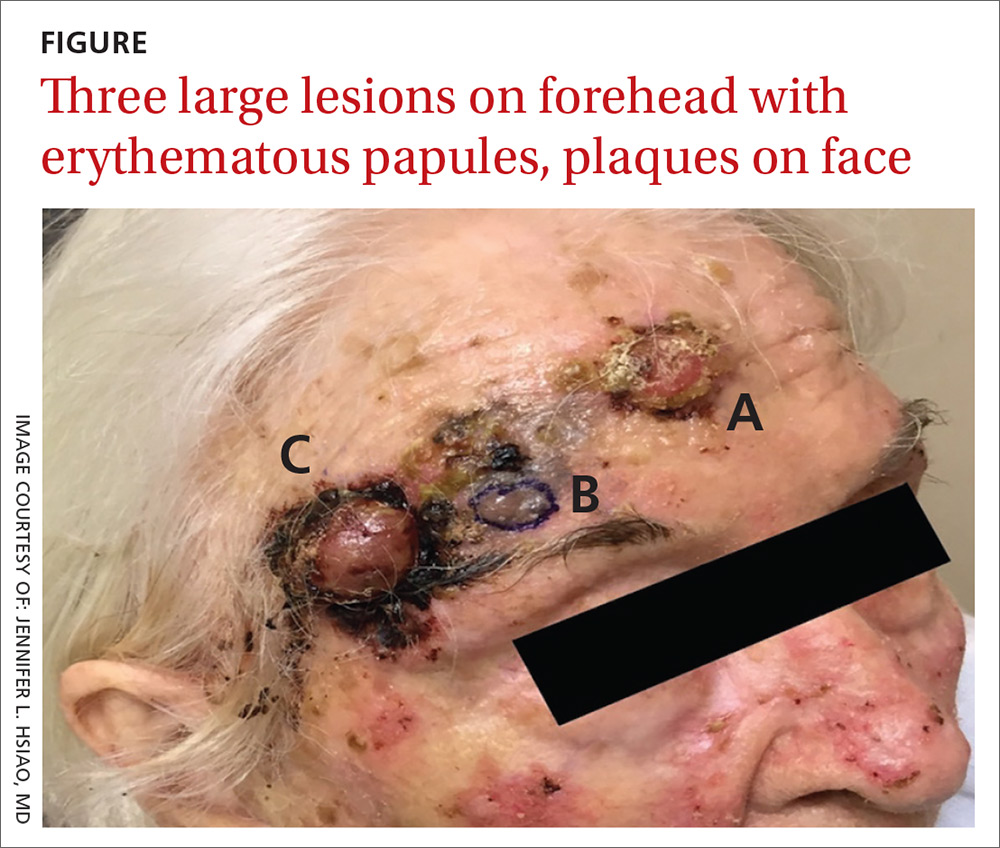

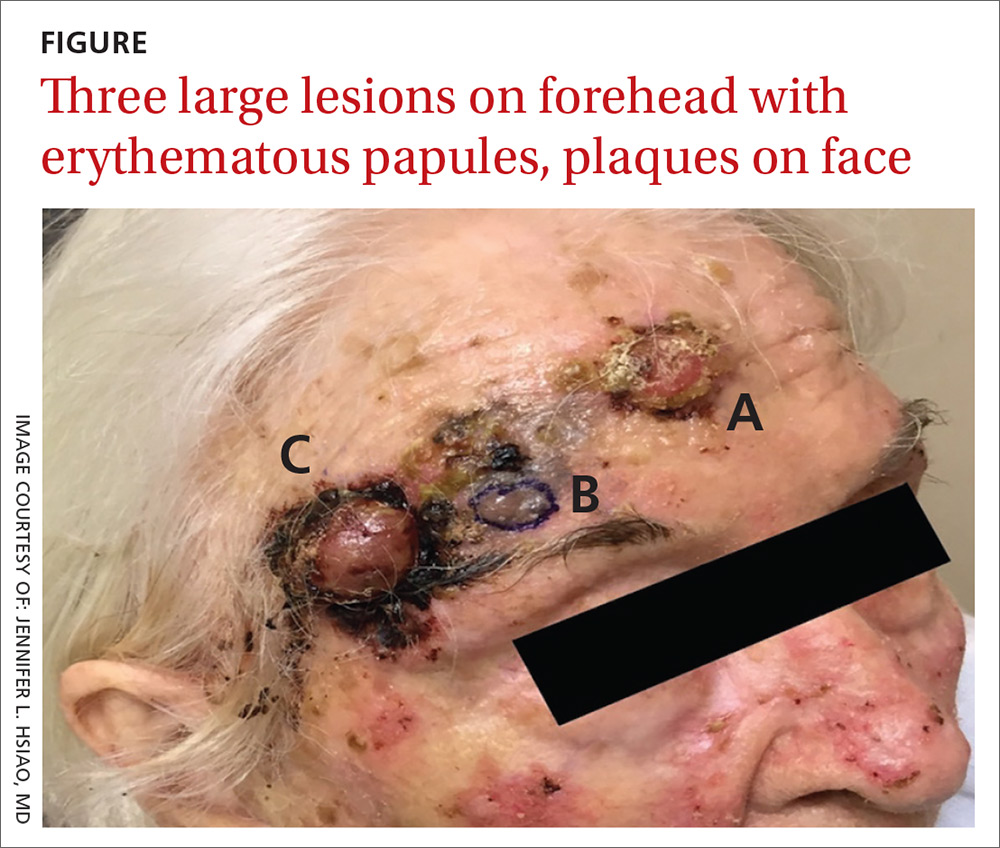

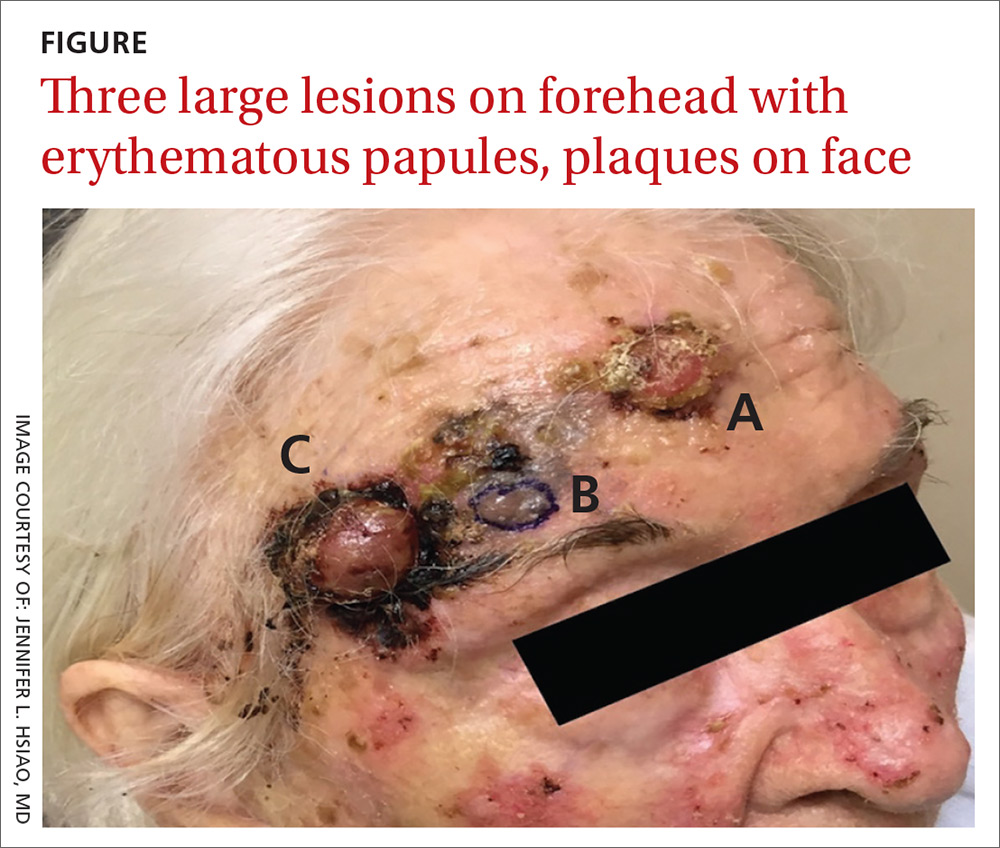

The patient had 3 confluent, but distinct, lesions on her forehead: an erythematous crateriform nodule with overlying hyperkeratotic scale (FIGURE, Lesion A); a nodular hyperpigmented plaque with irregular color and borders (Lesion B); and a pearly well-vascularized erythematous nodule with surrounding hemorrhagic crust (Lesion C).

She also had scattered, thin, gritty pink papules and plaques on the face that were thought to be actinic keratosis and nonmelanoma skin cancers based on clinical morphology; however, the patient deferred workup and treatment of these lesions to focus on the forehead lesions. The decision was made to biopsy all 3 clinical morphologies seen. The risks and benefits of biopsy were reviewed with the patient and her daughter, and they opted to proceed. The areas were anesthetized with an injection of 1% lidocaine and epinephrine 1:100,000; 3 shave biopsies were performed. Hemostasis was obtained with electrodesiccation.

WHAT IS YOUR DIAGNOSIS?

HOW WOULD YOU TREAT THIS PATIENT?

Diagnosis: Skin cancer

A histopathology report revealed that Lesion A was squamous cell carcinoma (SCC), Lesion B was a melanoma with a Breslow depth of at least 1.2 mm, and Lesion C was basal cell carcinoma (BCC). It is unusual to have a patient present with BCC, SCC, and melanoma concurrently in the same anatomic region.

Two of the lesions were nonmelanoma skin cancers (NMSC). BCC is the most common NMSC in the United States, affecting more than 3.3 million people per year.1 Although there are several subtypes of BCC with varying clinical presentations, the most classic appearance is a pearly papule with or without surface telangiectasias.2

SCC has an incidence of 200,000 to 400,000 cases per year in the United States, and the lifetime risk is 9% to 14% in men and 4% to 9% in women.3 SCC most commonly presents as a hyperkeratotic papule or plaque.2 Lesions suspicious for SCC and BCC should be biopsied and the diagnosis confirmed by histopathologic analysis. These NMSCs are locally destructive but rarely metastasize, and the prognosis is generally good. The standard treatment for both is surgical excision, with consideration of other treatment modalities, such as topical therapies, chemotherapy, and radiation, depending on tumor characteristics and on whether the patient is a good surgical candidate.1,3

Melanoma is rising in incidence each year, with nearly 100,000 new cases expected in the United States this year.4 It is the leading cause of skin cancer-related mortality.5 The most common suspicious lesions are variably pigmented macules with irregular borders. Biopsy and subsequent histopathologic analysis will confirm the diagnosis.

When a lesion is clinically suspicious for melanoma, it is particularly important to consider an excisional biopsy to allow for proper staging.5 Examples of appropriate excisional biopsies include elliptical excisions, punch biopsies, and deep shave biopsies.5 Definitive treatment involves a wider and deeper excision with histologically confirmed clear margins.5

This case required a multidisciplinary team

The patient was cleared for surgery; however, after the patient held her warfarin in preparation for the resection, she suffered a left frontal operculum infarction. At this point, she was re-evaluated by her head and neck physician, cardiologist, and anesthesiologist. Consensus was reached that the patient was at high perioperative risk for morbidity and mortality, and surgical intervention was no longer considered a viable option.

The patient then opted for palliative radiation therapy to all 3 lesions, with the understanding that the local control offered by radiotherapy would be inferior to what resection would provide for the melanoma lesion. Although not curative, radiotherapy was expected to provide local symptom relief for the melanoma, consistent with the patient’s palliative goals of care. In the past, melanoma was thought to be resistant to radiation, but recent evidence suggests that it may be at least partially susceptible to hypofractionated courses of radiation.6

Radiation oncology recommended a 6- to 15-fraction regimen, and the patient had a good clinical response, with a > 50% decrease in the size of all 3 lesions and cessation of bleeding.