Hyperhidrosis treatment options include glycopyrrolate

LAHAINA, HAWAII – Hyperhidrosis affects nearly 5% of the U.S. population, and in a survey of U.S. teenagers, about 17% reported excessive sweating, Jashin Wu, MD, said at the Hawaii Dermatology Seminar provided by the Global Academy for Medical Education/Skin Disease Education Foundation.

In an interview with MDedge reporter Bruce Jancin, Dr. Wu, founder of the Dermatology Research and Education Foundation, Irvine, Calif., discussed the off-label use of oral agents to treat hyperhidrosis. Dr. Wu said he is a fan of oral glycopyrrolate in particular, which he tends to use even earlier than suggested in the International Hyperhidrosis Society guidelines.

Glycopyrrolate is available in 1-mg and 2-mg tablets; Dr. Wu starts patients at 1 mg twice a day and increases the dose by 1 mg per week until the “desired effects occur” or the patient can no longer tolerate side effects.

Other oral options include oxybutynin and propranolol. Sofpironium bromide, an analog of glycopyrrolate, is in the pipeline, he said.

During the interview, Dr. Wu discussed mydriasis, an adverse effect associated with both topical and systemic anticholinergic treatment. In the two pivotal phase 3 randomized trials of prescription glycopyrronium cloth (Qbrexza) for axillary hyperhidrosis, the incidence of mydriasis was 6.8% among 463 patients who received active treatment for 4 weeks. Three-quarters of cases were unilateral. Mydriasis resolved without permanent discontinuation of treatment in 27 of the 31 affected patients (J Am Acad Dermatol. 2019 Jan;80[1]:128-138.e2).

“The most important point is that patients need to be educated that they need to wash their hands very well after they apply it to the affected areas” to prevent accidental medication contact with the eyes, he advised.

Alarm bells can go off when a patient with anticholinergic therapy–induced mydriasis presents to an ED without mentioning their treatment status, Dr. Wu observed.

Dr. Wu had no relevant disclosures. SDEF/Global Academy for Medical Education and this news organization are owned by the same parent company.

REPORTING FROM THE HAWAII DERMATOLOGY SEMINAR

Resetting your compensation

Using the State of Hospital Medicine Report to bolster your proposal

In the ever-changing world of health care, one thing is for sure: If you’re not paying attention, you’re falling behind. In this column, I will discuss how you can use the Society of Hospital Medicine (SHM) State of Hospital Medicine (SoHM) Report to evaluate your current compensation structure and strengthen your business plan for change. For this exercise, I will focus on the data the report labels Internal Medicine only, for hospital-owned hospital medicine groups (HMGs).

Issues with retention, recruitment, or burnout may be among the first factors that lead you to reevaluate your compensation plan. The SoHM Report can help you take a deeper dive into these areas. Look for any indication that compensation may be driving turnover, hampering hiring, or leaving your current team frustrated with its pay structure. Feedback on each of these factors may prompt you to evaluate your compensation plan, but remain mindful that money is not always the answer.

You may complete your evaluation and find that the data suggest you are, in fact, well paid for the work you do. Even so, the evaluation, shared transparently with your provider team, may help flush out the real reason you are struggling with recruitment, retention, or burnout. If, however, you do find an opportunity to improve your compensation structure, remember that you will need a compelling, data-driven case to present to your C-suite.

Let us start by looking at how the market has changed over time, using data from the 2014, 2016, and 2018 SoHM Reports. Note that each report is based on data from the prior year. Since 2013, hospital-owned HMGs have seen a 16% increase in total compensation but only a 9% increase in collections. Meanwhile, RVU productivity has remained relatively stable. In other words, hospitalists are earning more for similar productivity. Reimbursement for professional fees has not grown at the same rate as compensation, and collections per RVU have remained relatively flat.
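As a rough illustration of that gap, the two growth figures can be combined into a single number. This is a minimal sketch that uses only the 16% and 9% increases quoted from the SoHM Reports; the function name is hypothetical, and the calculation assumes both percentages cover the same period and the same group type.

```python
def relative_growth(comp_increase: float, collections_increase: float) -> float:
    """Return how much compensation grew relative to collections.

    Both arguments are fractional increases over the same period,
    e.g. 0.16 for a 16% increase. (Hypothetical helper for illustration.)
    """
    return (1 + comp_increase) / (1 + collections_increase) - 1


# Increases quoted above for hospital-owned HMGs since 2013.
gap = relative_growth(0.16, 0.09)
print(f"Compensation per dollar collected rose about {gap:.1%}")
```

On these figures, compensation per dollar of professional-fee collections rose by roughly 6.4%, which is the difference the hospital has to fund.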

It’s simple: Hospitalists are earning more, and professional revenues are not making up the difference. This market change is driving hospitals to invest more money to maintain their HMGs. If your hospital hasn’t been responding to these data, you will need a strong business plan to get buy-in from your hospital administration.

Now that you have evaluated the market change, it is time to see where your compensation falls in the current market. By combining your total compensation with your total RVU productivity, you can compare your compensation per RVU with the current benchmarks reported in the SoHM Report. Plotting these benchmarks against your own compensation and any proposed changes can help your administration begin to see whether a change should be considered. Presenting that clear picture relative to the SoHM benchmark is important, because a chart or graph can simplify your C-suite’s understanding of your proposal.

Framing your proposal in terms of compensation per RVU makes the conversation easier to follow. Your hospital leaders can clearly see the cost of every RVU generated and understand the impact. This is not to say that you should base your compensation on productivity. It is merely a way to roll all compensation factors, whether quality related, performance based, or productivity driven, into a single metric that is clear and easy for administration to understand.
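To make this concrete, here is a minimal sketch that rolls every pay component into one compensation-per-RVU figure and compares a current and a proposed plan with a benchmark. All dollar amounts, RVU totals, and the benchmark value are hypothetical placeholders, not SoHM data; substitute your group's figures and the benchmark you take from the report.

```python
from dataclasses import dataclass


@dataclass
class CompPlan:
    """Hypothetical per-FTE compensation plan (all figures illustrative)."""
    base_salary: float
    quality_incentive: float
    performance_incentive: float
    productivity_incentive: float
    rvus: float

    def total_compensation(self) -> float:
        # Roll every component into one total, whatever drives it.
        return (self.base_salary + self.quality_incentive
                + self.performance_incentive + self.productivity_incentive)

    def comp_per_rvu(self) -> float:
        return self.total_compensation() / self.rvus


# Hypothetical benchmark; NOT a value reported in the SoHM Report.
BENCHMARK_COMP_PER_RVU = 70.0

# Arguments: base, quality, performance, productivity, RVUs (all placeholders).
current = CompPlan(260_000, 10_000, 5_000, 5_000, 4_200)
proposed = CompPlan(275_000, 15_000, 5_000, 5_000, 4_200)

for label, plan in (("Current", current), ("Proposed", proposed)):
    value = plan.comp_per_rvu()
    diff = value - BENCHMARK_COMP_PER_RVU
    print(f"{label}: ${value:,.2f} per RVU ({diff:+.2f} vs. benchmark)")
```

A simple bar chart of these two values beside the report's benchmark percentiles is one way to give administrators the single picture the column recommends.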

Remember, when designing your new compensation plan, you can consult the SoHM Report to see how HMGs around the country structure incentives and what percentage of compensation is incentive based. Sections of the report outline these data points directly.
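The incentive percentage can be computed the same way for comparison with the report's incentive data. This sketch assumes pay splits cleanly into a base salary plus a list of incentive payments; the function name and figures are hypothetical.

```python
def incentive_share(base_salary: float, incentives: list[float]) -> float:
    """Fraction of total compensation that comes from incentive payments."""
    total_incentive = sum(incentives)
    total = base_salary + total_incentive
    if total <= 0:
        raise ValueError("total compensation must be positive")
    return total_incentive / total


# Hypothetical plan: $275,000 base plus quality, performance,
# and productivity incentives.
share = incentive_share(275_000, [15_000, 5_000, 5_000])
print(f"Incentive-based share of compensation: {share:.1%}")
```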

Now that we have reviewed market changes and how to visualize the difference between your current and proposed future states, I will leave you with some final considerations for building your business plan:

- Focus only on physician-generated RVUs.

- Consider the impact of length of stay on productivity.

- Determine whether changes in case mix index have affected your staffing needs.

- Understand how your E and M coding practices compare with industry benchmarks. The SoHM Report provides benchmarks for billing practices across the country.

- Lastly, clearly identify the issues you want to address, and set goals with measurable outcomes.
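The first consideration in this list can be applied directly when you compute compensation per RVU: for example, by excluding RVUs billed by advanced practice providers so that physician pay is divided only by physician work. The record layout, provider-type labels, and function name below are hypothetical; adapt them to however your billing extract identifies provider type.

```python
from collections import defaultdict
from typing import NamedTuple


class BillingRecord(NamedTuple):
    """Hypothetical row from a billing extract."""
    provider_id: str
    provider_type: str  # assumed labels: "physician" or "app"
    rvus: float


def physician_rvus(records: list[BillingRecord]) -> dict[str, float]:
    """Sum RVUs per provider, keeping only physician-generated work."""
    totals: dict[str, float] = defaultdict(float)
    for rec in records:
        if rec.provider_type == "physician":
            totals[rec.provider_id] += rec.rvus
    return dict(totals)


records = [
    BillingRecord("MD-1", "physician", 3.5),
    BillingRecord("MD-1", "physician", 2.0),
    BillingRecord("NP-1", "app", 1.5),  # excluded from physician totals
    BillingRecord("MD-2", "physician", 2.5),
]
print(physician_rvus(records))
```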

There is still time for your group to contribute data to the 2020 State of Hospital Medicine Report by participating in the 2020 survey. Data are being accepted through Feb. 28, 2020. Submit your data at www.hospitalmedicine.org/2020survey.

Mr. Sandroni is director of operations, hospitalists, at Rochester (N.Y.) Regional Health.

Make the Diagnosis - March 2020

The patient’s biopsy showed sparse and grouped, slightly enlarged, atypical stained mononuclear cells, mostly in perifollicular areas, with focal epidermotropism. CD30 staining was positive. She responded to potent topical steroids.

The etiology of lymphomatoid papulosis (LyP) is unknown. It is unclear whether the T-cell proliferation is a benign, chronic disorder or an indolent T-cell malignancy.

In addition, 10% of LyP cases are associated with anaplastic large-cell lymphoma, cutaneous T-cell lymphoma (mycosis fungoides), or Hodgkin lymphoma. Borderline cases show overlapping features of LyP and lymphoma.

Patients typically present with crops of asymptomatic erythematous to brown papules that may become pustular, vesicular, or necrotic. Lesions tend to resolve within 2-8 weeks with or without scarring. The trunk and extremities are commonly affected. The condition tends to be chronic over months to years. The waxing and waning course is characteristic of LyP. Constitutional symptoms are generally absent in cases not associated with systemic disease.

Histopathologic examination reveals a dense, wedge-shaped dermal infiltrate of atypical lymphocytes along with numerous eosinophils and neutrophils. Epidermotropism may be present, and the lymphocytes stain positive for CD30. Vessels in the dermis may show fibrin deposition and red blood cell extravasation. Histologically, LyP is classified into types A through E, which are distinguished by the size and type of the atypical cells, the location and amount of the infiltrate, and CD30 and CD8 staining.

The differential diagnosis of LyP includes pityriasis lichenoides, anaplastic large cell lymphoma, cutaneous T-cell lymphoma, folliculitis, arthropod assault, Langerhans cell histiocytosis, and leukemia cutis. Treatment is symptomatic. Mild forms of LyP can often be managed with superpotent topical corticosteroids. Bexarotene gel has been used for early lesions. For more widespread or persistent disease, intralesional corticosteroids, phototherapy (UVB or PUVA), tetracycline antibiotics, and methotrexate have been reported to be effective. Refractory cases may respond to interferon alpha or oral bexarotene. Routine evaluations are recommended, because patients may be at increased risk of developing lymphoma.

This case and photo were submitted by Dr. Bilu Martin.

Dr. Bilu Martin is a board-certified dermatologist in private practice at Premier Dermatology, MD, in Aventura, Fla. More diagnostic cases are available at mdedge.com/dermatology. To submit a case for possible publication, send an email to [email protected].

Glaring gap in CV event reporting in pivotal cancer trials

Clinical trials supporting Food and Drug Administration approval of contemporary cancer therapies frequently failed to capture major adverse cardiovascular events (MACE) and, when they did, reported rates 2.6-fold lower than those in noncancer trials, new research shows.

Overall, 51.3% of trials did not report MACE, with that number reaching 57.6% in trials enrolling patients with baseline cardiovascular disease (CVD).

Nearly 40% of trials did not report any CVD events in follow-up, the authors reported online Feb. 10, 2020, in the Journal of the American College of Cardiology (2020;75:620-8).

“Even in drug classes where there were established or emerging associations with cardiotoxic events, often there were no reported heart events or cardiovascular events across years of follow-up in trials that examined hundreds or even thousands of patients. That was actually pretty surprising,” senior author Daniel Addison, MD, codirector of the cardio-oncology program at the Ohio State University Medical Center, Columbus, said in an interview.

The study was prompted by a series of events that crescendoed when his team was called to the ICU to determine whether a novel targeted agent played a role in the heart decline of a patient with acute myeloid leukemia. “I had a resident ask me a very important question: ‘How do we really know for sure that the trial actually reflects the true risk of heart events?’ to which I told him, ‘it’s difficult to know,’ ” he said.

“I think many of us rely heavily on what we see in the trials, particularly when they make it to the top journals, and quite frankly, we generally take it at face value,” Dr. Addison observed.

Lower rate of reported events

The investigators reviewed CV events reported in 97,365 patients (median age, 61 years; 46% female) enrolled in 189 phase 2 and 3 trials supporting FDA approval of 123 anticancer drugs from 1998 to 2018. Biologic, targeted, or immune-based therapies accounted for 72.5% of drug approvals.

Over 148,138 person-years of follow-up (median trial duration, 30 months), there were 1,148 incidents of MACE (375 heart failure events, 253 MIs, 180 strokes, 65 atrial fibrillation events, 29 coronary revascularizations, and 246 CVD deaths). More MACE occurred in the intervention groups than in the control groups (792 vs. 356; P less than .01). Among the 64 trials that excluded patients with baseline CVD, there were 269 incidents of MACE.

To put this finding in context, the researchers examined the reported incidence of MACE among some 6,000 similarly aged participants in the Multi-Ethnic Study of Atherosclerosis (MESA). The overall weighted-average incidence rate was 1,408 per 100,000 person-years among MESA participants, compared with 542 events per 100,000 person-years among oncology trial participants (716 per 100,000 in the intervention arm). This represents a reported-to-expected ratio of 0.38 – a 2.6-fold lower rate of reported events (P less than .001) – and a risk difference of 866 events per 100,000 person-years.
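The ratio, fold difference, and risk difference in this paragraph all follow from the two incidence rates. The sketch below recomputes them from the rates quoted (events per 100,000 person-years); the function name is hypothetical, and it does not reproduce the authors' weighting or significance testing.

```python
def compare_rates(reported: float, expected: float) -> tuple[float, float, float]:
    """Compare a reported incidence rate with an expected one.

    Both rates must share a denominator (here, events per 100,000
    person-years). Returns (ratio, fold_lower, risk_difference).
    """
    ratio = reported / expected
    fold_lower = expected / reported
    risk_difference = expected - reported
    return ratio, fold_lower, risk_difference


# Rates quoted above: MESA (expected) vs. oncology trials (reported).
ratio, fold, diff = compare_rates(reported=542, expected=1408)
print(f"ratio {ratio:.2f}, {fold:.1f}-fold lower, difference {diff}")
```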

Further, MACE reporting was lower by a factor of 1.7 among all cancer trial participants irrespective of baseline CVD status (reported-to-expected ratio, 0.56; risk difference, 613 per 100,000 person-years; P less than .001).

There was no significant difference in MACE reporting between independent and industry-sponsored trials, the authors report.

No malicious intent

“There are likely some that might lean toward not wanting to attribute blame to a new drug when the drug is in a study, but I really think that the leading factor is lack of awareness,” Dr. Addison said. “I’ve talked with several cancer collaborators around the country who run large clinical trials, and I think often, when an event may be brought to someone’s attention, there is a tendency to just write it off as kind of a generic expected event due to age, or just something that’s not really pertinent to the study. So they don’t really focus on it as much.”

“Closer collaboration between cardiologists and cancer physicians is needed to better determine true cardiac risks among patients treated with these drugs.”

Breast cancer oncologist Marc E. Lippman, MD, of Georgetown University Medical Center and Georgetown Lombardi Comprehensive Cancer Center, Washington, D.C., isn’t convinced a lack of awareness is the culprit.

“I don’t agree with that at all,” he said in an interview. “I think there are very, very clear rules and guidelines these days for adverse-event reporting. I think that’s not a very likely explanation – that it’s not on the radar.”

Part of the problem may be that some toxicities, particularly cardiovascular ones, can take years to emerge, he said. Screening of trial participants also likely excluded patients at high cardiovascular risk. “It’s very understandable to me – I’m not saying it’s good particularly – but I think it’s very understandable that, if you’re trying to develop a drug, the last thing you’d want to have is a lot of toxicity that you might have avoided by just being restrictive in who you let into the study,” Dr. Lippman said.

The underreported CVD events may also reflect the rapidly changing profile of cardiovascular toxicities associated with novel anticancer therapies.

“Providers, both cancer and noncancer, generally put cardiotoxicity in the box of anthracyclines and radiation, but particularly over the last decade, we’ve begun to understand it’s well beyond any one class of drugs,” Dr. Addison said.

“I agree completely,” Dr. Lippman said. For example, “the checkpoint inhibitors are so unbelievably different in terms of their toxicities that many people simply didn’t even know what they were getting into at first.”

One size does not fit all

Javid Moslehi, MD, director of the cardio-oncology program at Vanderbilt University, Nashville, Tenn., said echocardiography – recommended to detect changes in left ventricular function in patients exposed to anthracyclines or targeted agents like trastuzumab (Herceptin) – isn’t enough to address today’s cancer therapy–related CVD events.

“Initial drugs like anthracyclines or Herceptin in cardio-oncology were associated with systolic cardiac dysfunction, whereas the majority of issues we see in the cardio-oncology clinics today are vascular, metabolic, arrhythmogenic, and inflammatory,” he said in an interview. “Echocardiography misses the big and increasingly complex picture.”

His group, for example, has been studying myocarditis associated with immunotherapies, but none of the clinical trials require screening or surveillance for myocarditis with a cardiac biomarker like troponin.

The group also recently identified 303 deaths in patients exposed to ibrutinib, a drug that revolutionized the treatment of several B-cell malignancies but is associated with higher rates of atrial fibrillation as well as an increased risk of bleeding. “So there’s a little bit of a double whammy there, given that we often treat atrial fibrillation with anticoagulation and where we can cause complications in patients,” Dr. Moslehi noted.

Although closer collaboration between cardiologists and oncologists is needed on individual trials, cardiologists also have to recognize that oncology care has become highly personalized, he suggested.

“What’s probably relevant for the breast cancer patient may not be relevant for the prostate cancer patient and their respective treatments,” Dr. Moslehi said. “So if we were to say, ‘every person should get an echo,’ that may be less relevant to the prostate cancer patient where treatments can cause vascular and metabolic perturbations or to the patient treated with immunotherapy who may have myocarditis, where many of the echos can be normal. There’s no one-size-fits-all for these things.”

Wearable technologies such as smartwatches could help improve the reporting of CVD events with novel therapies, but much more research is needed to validate these tools, Dr. Addison said. “But as we continue on into the 21st century, this is going to expand and may potentially help us,” he added.

In the interim, the cardiovascular events reported in oncology trials need better standardization, particularly within the Common Terminology Criteria for Adverse Events (CTCAE), said Dr. Moslehi, who also chairs the American Heart Association’s subcommittee on cardio-oncology.

“Cardiovascular definitions are not exactly uniform and are not consistent with what we in cardiology consider to be important or relevant,” he said. “So I think there needs to be better standardization of these definitions, specifically within the CTCAE, which is what the oncologists use to identify adverse events.”

In a linked editorial (J Am Coll Cardiol. 2020;75:629-31), Dr. Lippman and cardiologist Nanette Bishopric, MD, of the MedStar Heart and Vascular Institute in Washington, D.C., suggested it may also be time to organize a consortium that can carry out “rigorous multicenter clinical investigations to evaluate the cardiotoxicity of emerging cancer treatments,” similar to the Thrombosis in Myocardial Infarction Study Group.

“The success of this consortium in pioneering and targeting multiple generations of drugs for the treatment of MI, involving tens of thousands of patients and thousands of collaborations across multiple national borders, is a model for how to move forward in providing the new hope of cancer cure without the trade-off of years lost to heart disease,” the editorialists concluded.

The study was supported in part by National Institutes of Health grants, including a K12-CA133250 grant to Dr. Addison. Dr. Bishopric reported being on the scientific board of C&C Biopharma. Dr. Lippman reported being on the board of directors of and holding stock in Seattle Genetics. Dr. Moslehi reported having served on advisory boards for Pfizer, Novartis, Bristol-Myers Squibb, Deciphera, Audentes Pharmaceuticals, Nektar, Takeda, Ipsen, MyoKardia, AstraZeneca, GlaxoSmithKline, Intrexon, and Regeneron.

This article first appeared on Medscape.com.

FDA: Cell phones still look safe

according to a review by the Food and Drug Administration.

The FDA reviewed the published literature from 2008 to 2018 and concluded that the data do not support any quantifiable adverse health risks from radiofrequency radiation (RFR). However, the evidence is not without limitations.

The FDA’s evaluation included evidence from in vivo animal studies from Jan. 1, 2008, to Aug. 1, 2018, and epidemiologic studies in humans from Jan. 1, 2008, to May 8, 2018. Both kinds of evidence had limitations, but neither produced strong indications of any causal risks from cell phone use.

The FDA noted that in vivo animal studies are limited by variability in methods and RFR exposure, which makes results difficult to compare. These studies are also confounded by the indirect effects of temperature increases (the only currently established biological effect of RFR) and by stress experienced by the animals, both of which make it difficult to isolate the direct effects of RFR.

The FDA noted that strong epidemiologic studies can provide more relevant and accurate information than in vivo studies, but they have limitations of their own. For example, most rely on participants to track and self-report their cell phone use. There is also no way to directly track certain factors of RFR exposure, such as frequency, duration, or intensity.

Even with those caveats in mind, the FDA wrote that, “based on the studies that are described in detail in this report, there is insufficient evidence to support a causal association between RFR exposure and tumorigenesis. There is a lack of clear dose-response relationship, a lack of consistent findings or specificity, and a lack of biological mechanistic plausibility.”

The full review is available on the FDA website.

Antineutrophil Cytoplasmic Antibody Vasculitis Induced by Hydralazine

To the Editor:

Hydralazine-induced antineutrophil cytoplasmic antibody vasculitis (HIAV) is a rare side effect that may develop in patients treated with hydralazine. Without early recognition and hydralazine cessation, patients often develop acute renal failure and pulmonary hemorrhage that may result in death. We present a case of HIAV.

A 67-year-old woman presented with a 2-week history of progressive, tense, hemorrhagic, and necrotic bullae on both sides of the face and neck and on the extremities. She had a history of hypertension and of a thyroid nodule, status post unilateral thyroid lobectomy. A review of systems was positive for worsening dyspnea and progressive generalized weakness. Noteworthy medications included amlodipine, metoprolol, levothyroxine, and oral hydralazine 75 mg 3 times daily for 13 months.

Bullae first appeared on the patient’s scalp and progressed quickly in a cephalocaudal pattern, with a predilection for the eyes, nostrils, and labial mucosa (Figure 1). The tongue was covered by an eschar, and she had diffuse periorbital edema. Additionally, concentric purpuric patches were noted on the thighs and lower legs (Figure 2).

Pertinent laboratory findings included a positive antinuclear antibody titer of 1:320 and perinuclear antineutrophil cytoplasmic antibody (ANCA) titer of 1:160, along with an elevated serum creatinine level (2.31 mg/dL [reference range, 0.6–1.2 mg/dL]). Bilateral perihilar infiltrates with bilateral pleural effusions were noted on a chest radiograph.

While hospitalized, she developed pulmonary hemorrhage with a progressive decline in respiratory status and was transferred to the medical intensive care unit. Aggressive supportive care was administered, and several skin biopsy specimens were obtained along with an endobronchial biopsy of the right middle lobe.

Skin histopathology revealed a necrotizing vasculitis (Figure 3). Direct immunofluorescence was not performed. Lung histopathology showed fragments of bronchial tissue with acute and chronic inflammation, focal necrosis, granulation tissue formation, edema, and squamous metaplasia. Together with the clinical history, these findings were consistent with HIAV.

Hydralazine was immediately discontinued, and the patient was started on intravenous methylprednisolone 65 mg daily, which was later changed to oral prednisone 30 mg daily. Because of multiple organ involvement (lung and kidney), intravenous rituximab 375 mg/m² weekly for 4 weeks, per lymphoma protocol, was started. Within 2 weeks of beginning therapy, her renal function and respiratory status improved, and by week 4 the skin lesions had completely resolved. Although she initially did well on immunosuppressive therapy, with resolution of all symptoms, she developed Clostridium difficile–induced systemic inflammatory response syndrome after 5 weeks of therapy and died.

Hydralazine was first introduced in 1951 as adjunctive antihypertensive therapy because of its vasodilatory effects.1-3 Since its introduction, it has been implicated in 2 important disease processes: HIAV and hydralazine-induced lupus.

Hydralazine-induced ANCA vasculitis was first documented in 1980; by 2011, multiple cases had been reported.1-7 It has occurred in patients aged 11 to 79 years taking 50 to 300 mg daily. The time from starting hydralazine to symptom onset has ranged from 6 months to 14 years, with a mean exposure duration of 4.7 years and a mean daily dose of 142 mg.1-7

Clinical manifestations range from nonspecific symptoms, such as fever, malaise, arthralgia, myalgia, and weight loss, to single-tissue or single-organ involvement that may be fatal. The most frequent clinical features include kidney involvement (81%), cutaneous vasculitis (25%), arthralgia (24%), and pleuropulmonary involvement (19%). Cutaneous manifestations include but are not limited to palpable lower extremity purpura; morbilliform eruptions; and hemorrhagic blisters on the lower legs, arms, trunk, nasal septum, and uvula.1-4,8

The kidney is the most commonly affected organ; renal involvement typically presents as hematuria, proteinuria, and an elevated serum creatinine level. Histopathologically, most patients have necrotizing and crescentic glomerulonephritis that is pauci-immune on immunofluorescence.7,9 The lungs are the next most commonly affected organ, with a classic presentation of cough, dyspnea, and hemoptysis in the setting of intra-alveolar hemorrhage.6,8 When both the kidneys and lungs are involved, the condition is termed pulmonary-renal syndrome, which is characterized by lung infiltrates or nodules with or without hemorrhage, hemoptysis, and pleuritis in the setting of glomerulonephritis.1,6

Clear data on the incidence and prevalence of HIAV do not exist because of the rarity of the disease and the lack of prospective studies. Establishing them will require prospective longitudinal studies with larger cohorts, along with better recognition and diagnosis.2,8,10 A few predisposing risk factors have been identified, including older age, a cumulative dose of 100 g at the time of presentation, female sex, a history of thyroid disease, HLA-DR4 genotypes, slow hepatic acetylation, and the null gene for C4.1,3,5,9-11 Our patient was an older woman with a history of thyroid disease who had been taking oral hydralazine 75 mg 3 times daily for 13 months, with no dose adjustments during that period.
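The cumulative-dose risk factor lends itself to a quick arithmetic check. The Python sketch below is illustrative only and is not drawn from the cited sources: it multiplies the regimen reported for the patient described here (75 mg 3 times daily, or 225 mg/day) by an assumed average month of about 30.44 days. The helper name `cumulative_dose_g`, the fixed average month length, and the assumption of full adherence with no dose changes are all hypothetical choices made for illustration.

```python
# Minimal sketch: estimate cumulative hydralazine exposure for a fixed regimen.
# Assumptions (not from the case report): an average month of 365.25 / 12 days,
# full adherence, and no missed doses or dose changes over the whole period.
DAYS_PER_MONTH = 365.25 / 12  # about 30.44 days

# Cumulative dose cited above as a risk factor at the time of presentation.
RISK_THRESHOLD_G = 100.0


def cumulative_dose_g(dose_mg: float, doses_per_day: int, months: float) -> float:
    """Hypothetical helper: approximate cumulative dose in grams."""
    total_mg = dose_mg * doses_per_day * months * DAYS_PER_MONTH
    return total_mg / 1000.0  # convert milligrams to grams


if __name__ == "__main__":
    daily_mg = 75 * 3  # 75 mg 3 times daily
    exposure_g = cumulative_dose_g(dose_mg=75, doses_per_day=3, months=13)
    print(f"Daily dose: {daily_mg} mg")
    print(f"Estimated cumulative dose: {exposure_g:.1f} g")
    print(f"Share of the 100 g figure: {exposure_g / RISK_THRESHOLD_G:.0%}")
```

Under these assumptions, 13 months is roughly 396 days, which gives about 89 g, somewhat below the 100 g figure. Actual exposure would depend on the exact treatment dates and adherence, which the report does not give.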

Currently, the pathomechanism for HIAV is unclear and may be multifactorial. There are 4 main theories2,8-10,12,13:

1. Hydralazine and its metabolites accumulate inside neutrophils, where they bind myeloperoxidase (MPO) and alter its configuration. This alteration spreads the autoimmune response to other autoantigens, making other neutrophil proteins (eg, elastase, lactoferrin, nuclear antigens) immunogenic.

2. Hydralazine binds MPO in neutrophils, creating cytotoxic products that induce neutrophil apoptosis. Neutrophil apoptosis without priming then results in the presence of ANCA antigens on the neutrophil cell membrane and the formation of MPO-ANCA. MPO-ANCA then binds these membrane-bound antigens, causing self-perpetuating, constitutive neutrophil activation through cross-linking of proteinase 3 or MPO with Fcγ receptors.

3. Activated neutrophils, in the presence of hydrogen peroxide, release MPO, which converts hydralazine into a cytotoxic product that is immunogenic for T cells; these T cells in turn activate ANCA-producing B cells.

4. Histone H3 trimethyl Lys27 (H3K27me3) levels are perturbed in HIAV, which disrupts the normal epigenetic silencing of the proteinase 3 and MPO genes. In contrast, the demethylase Jumonji domain-containing protein 3 for the H3K27me3 histone is increased in patients without HIAV. Based on these data and on data showing a role for hydralazine in reversing epigenetic silencing of tumor suppressor genes in cancer cells,13 it has been proposed that hydralazine may reverse epigenetic silencing of proteinase 3 and MPO.

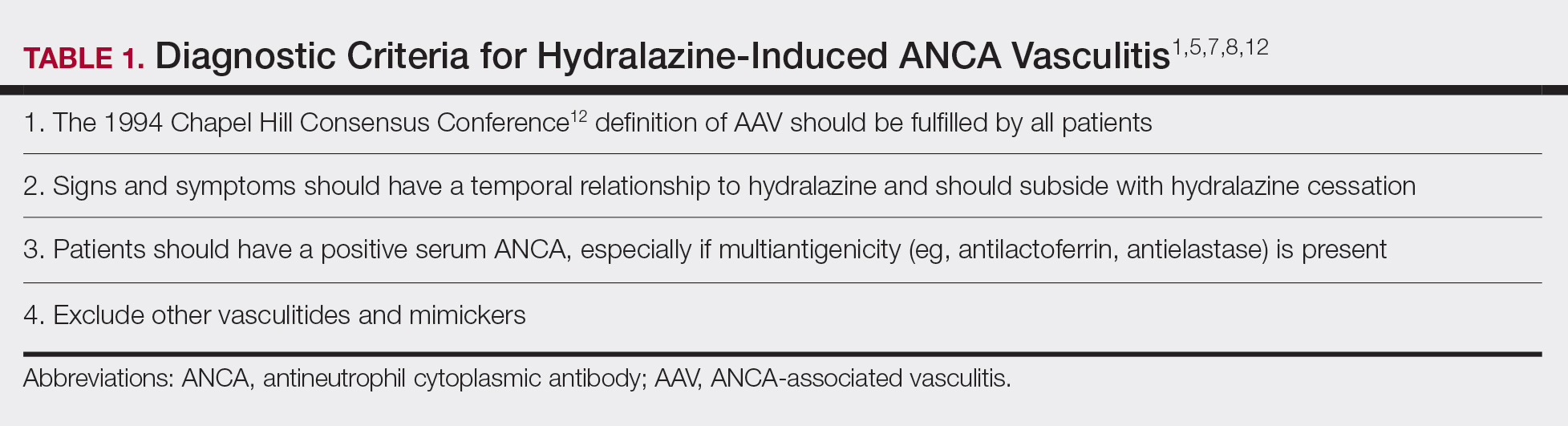

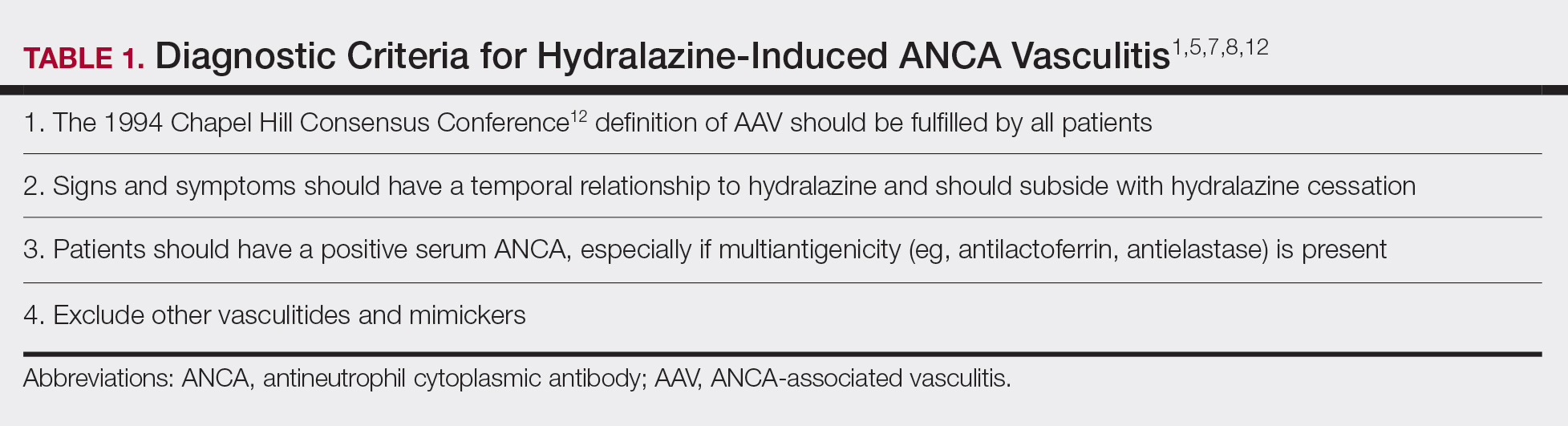

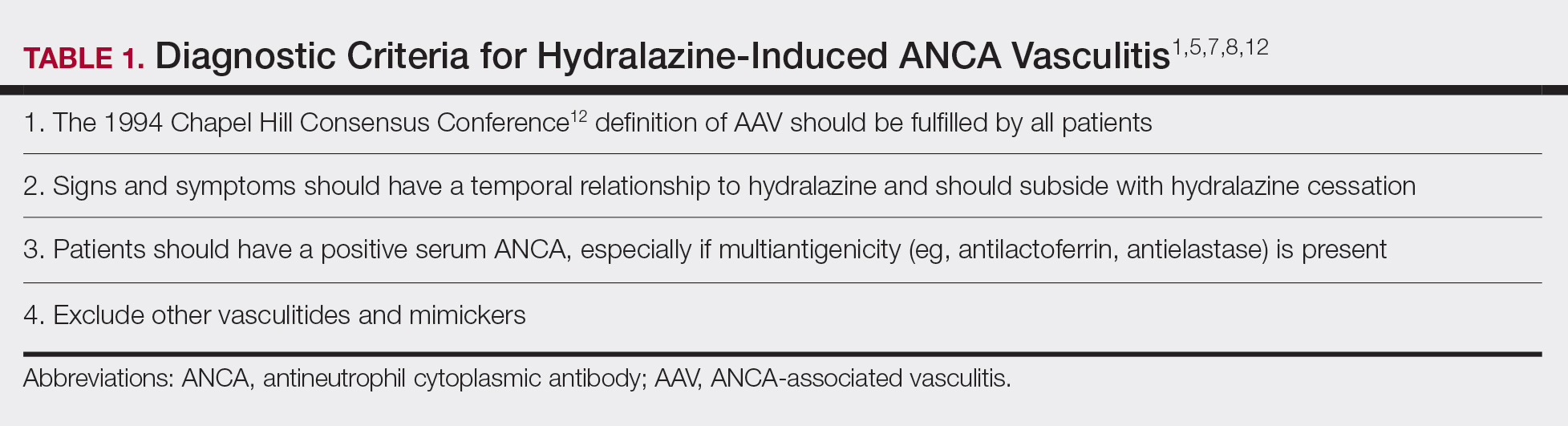

Diagnosing HIAV remains difficult because physicians often do not recognize the drug as the etiologic agent, the interval between starting the drug and symptom onset varies widely, and the laboratory and invasive tests needed for evaluation and diagnosis often are not ordered.3,5,8,10,12 Despite these difficulties, a set of diagnostic criteria and practices is delineated in Table 1, the key diagnostic feature being resolution after hydralazine cessation.1,5,7,8,12

A comprehensive drug history from at least 6 months prior to presentation is essential. Biopsies also are strongly encouraged to confirm the presence of vasculitis and to determine its severity.8,12 If renal biopsies are performed, they typically show scant IgG, IgM, and C3 deposition that is characteristic of ANCA-positive pauci-immune glomerulonephritis. Compared to hydralazine-induced lupus, renal involvement in the setting of HIAV has a relative lack of immunoglobulin and complement deposition with histopathology and immunostaining.14

Serum MPO-ANCA (perinuclear ANCA) with coexisting elastase and/or lactoferrin autoantibodies is characteristic of HIAV. Antinuclear antibody, antihistone, anti–double-stranded DNA, and antiphospholipid antibodies along with low complement levels also may be present.2,4,9,10,13,15 It is recommended that ANCA assays combine indirect immunofluorescence with antigen-specific enzyme-linked immunosorbent assay.8 In contrast, patients with idiopathic ANCA vasculitis may present with MPO-ANCA alone, while the other antibodies mentioned above (eg, antihistone, anti–double-stranded DNA) are rarely found or entirely absent.2,9 Patients with HIAV often have higher MPO-ANCA titers.9,15 In hydralazine-induced lupus, patients rarely have MPO-ANCA.

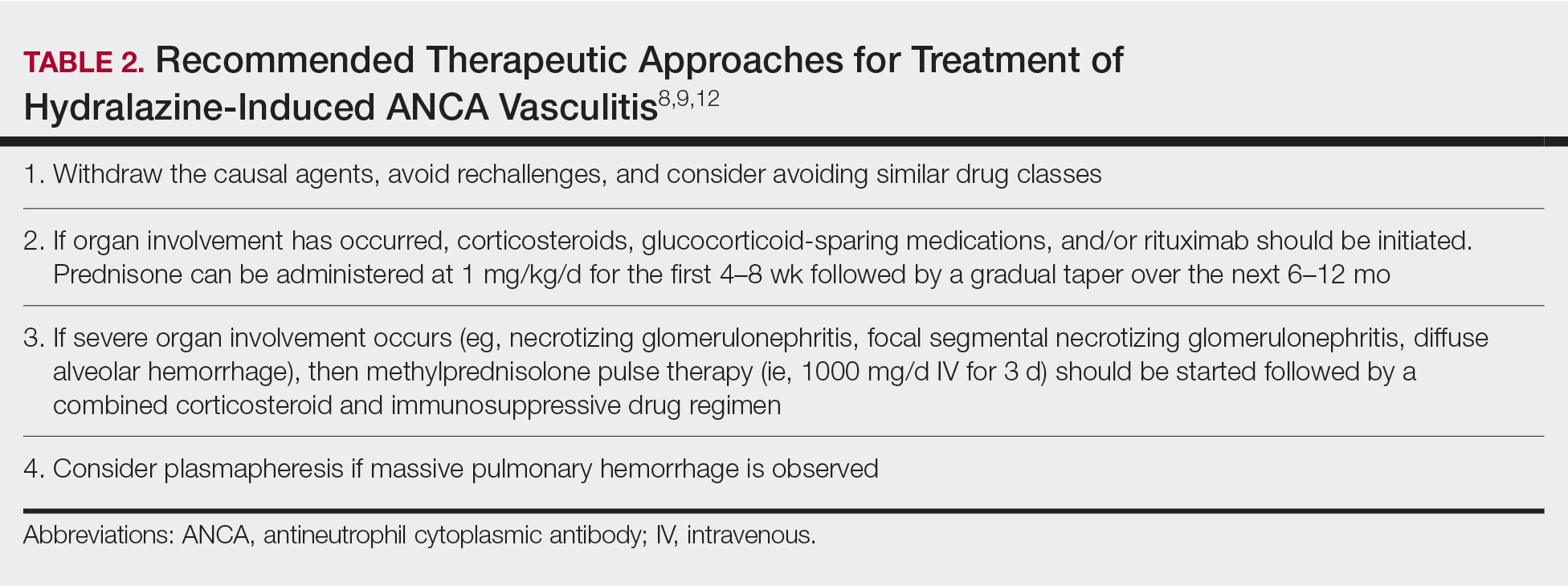

Even when HIAV is suspected, the diagnosis cannot be confirmed until hydralazine is discontinued and the patient’s symptoms resolve. Therefore, discontinuing hydralazine when HIAV is suspected is both diagnostic and therapeutic. If HIAV is recognized while the patient has only nonspecific symptoms, hydralazine cessation alone may be sufficient; however, because HIAV is difficult to recognize and diagnose, most patients present when the disease is severe and has progressed to organ involvement.8-10

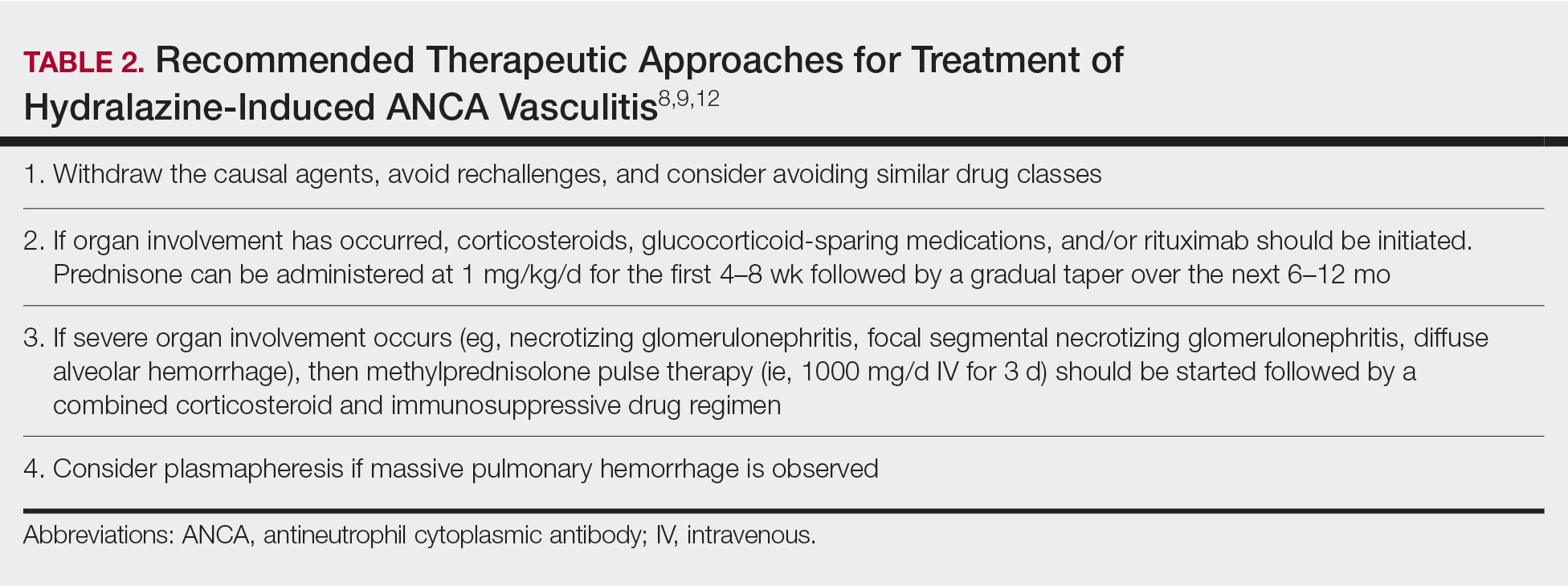

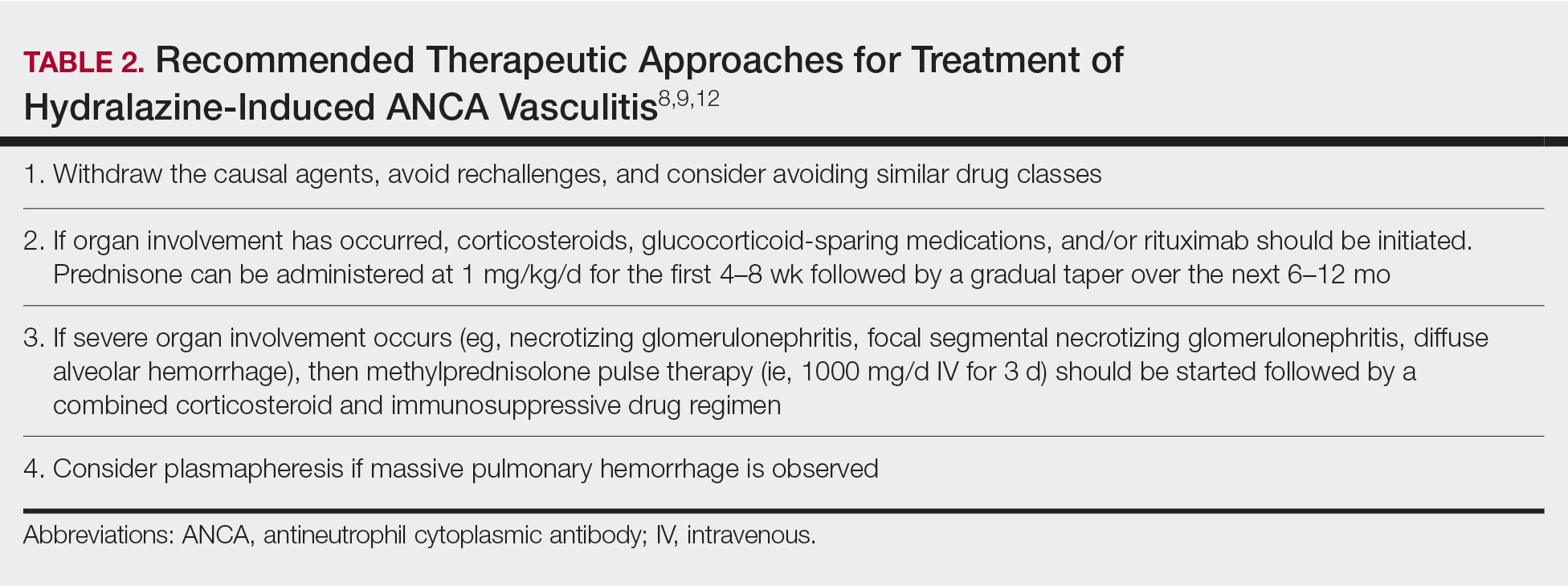

Treatment recommendations are highlighted in Table 2.8,9,12 Glucocorticoid therapy is believed to work by preventing the T-cell and B-cell maturation needed to produce MPO-ANCA. Rituximab, on the other hand, is thought to act by clearing MPO-ANCA–producing B cells from the peripheral blood.12,16 Of note, patients with HIAV differ from their idiopathic counterparts in that they usually need shorter courses of immunosuppressive therapy, long-term maintenance usually is unnecessary, and their prognosis generally is good if the offending agent is withdrawn.7-9,12 Once appropriate therapy is instituted, vasculitic manifestations are expected to resolve 10 days to 8 months after hydralazine cessation; however, a response often is seen within 1 to 4 weeks after initiation of systemic treatment.4,8 Serum ANCA should be monitored, and patients should be followed for the emergence of a chronic underlying vasculitis.8,12

Our patient highlights the importance of identifying individuals at risk for HIAV. We seek to increase recognition of this entity, as it is not commonly seen in a dermatologic setting and is associated with high morbidity and mortality, as seen in our patient.

1. Yokogawa N, Vivino FB. Hydralazine-induced autoimmune disease: comparison to idiopathic lupus and ANCA-positive vasculitis. Mod Rheumatol. 2009;19:338-347.

2. Agarwal G, Sultan G, Werner SL, et al. Hydralazine induces myeloperoxidase and proteinase 3 anti-neutrophil cytoplasmic antibody vasculitis and leads to pulmonary renal syndrome. Case Rep Nephrol. 2014;2014:868590.

3. Keasberry J, Frazier J, Isbel NM, et al. Hydralazine-induced anti-neutrophilic cytoplasmic antibody-positive renal vasculitis presenting with a vasculitic syndrome, acute nephritis and a puzzling skin rash: a case report. J Med Case Rep. 2013;7:20.

4. ten Holder SM, Joy MS, Falk RJ. Cutaneous and systemic manifestations of drug-induced vasculitis. Ann Pharmacother. 2002;36:130-147.

5. Namas R, Rubin B, Adwar W, et al. A challenging twist in pulmonary renal syndrome. Case Rep Rheumatol. 2014;2014:516362.

6. Dobre M, Wish J, Negrea L. Hydralazine-induced ANCA-positive pauci-immune glomerulonephritis. Ren Fail. 2009;31:745-748.

7. Hogan JJ, Markowitz GS, Radhakrishnan J. Drug-induced glomerular disease: immune-mediated injury. Clin J Am Soc Nephrol. 2015;10:1300-1310.

8. Radic M, Martinovic Kaliterna D, Radic J. Drug-induced vasculitis: a clinical and pathological review. Neth J Med. 2012;70:12-17.

9. Babar F, Posner JN, Obah EA. Hydralazine-induced pauci-immune glomerulonephritis: intriguing case series misleading diagnoses. J Community Hosp Intern Med Perspect. 2016;6:30632.

10. Marina VP, Malhotra D, Kaw D. Hydralazine-induced ANCA vasculitis with pulmonary renal syndrome: a rare clinical presentation. Int Urol Nephrol. 2012;44:1907-1909.

11. Magro CM. Associated ANCA positive vasculitis. The Dermatologist. 2015;23(7). http://www.the-dermatologist.com/content/associated-anca-positive-vasculitis. Accessed January 30, 2020.

12. Gao Y, Zhao MH. Review article: Drug-induced anti-neutrophil cytoplasmic antibody-associated vasculitis. Nephrology (Carlton). 2009;14:33-41.

13. Grau RG. Drug-induced vasculitis: new insights and a changing lineup of suspects. Curr Rheumatol Rep. 2015;17:71.

14. Sangala N, Lee RW, Horsfield C, et al. Combined ANCA-associated vasculitis and lupus syndrome following prolonged use of hydralazine: a timely reminder of an old foe. Int Urol Nephrol. 2010;42:503-506.

15. Choi HK, Merkel PA, Walker AM, et al. Drug-associated antineutrophil cytoplasmic antibody-positive vasculitis: prevalence among patients with high titers of antimyeloperoxidase antibodies. Arthritis Rheum. 2000;43:405-413.

16. Coutinho AE, Chapman KE. The anti-inflammatory and immunosuppressive effects of glucocorticoids, recent developments and mechanistic insights. Mol Cell Endocrinol. 2011;335:2-13.
