ED visits spike among California Medicaid patients

Medicaid patients in California increased their use of emergency departments by 35.6% over a 5-year period while use by privately insured patients barely increased.

"Increasing ED use by Medicaid beneficiaries could reflect decreasing access to primary care, which is supported by our findings of high and increasing rates of ED use for ambulatory care sensitive conditions by Medicaid patients," wrote Dr. Renee Hsia. The report was published in the Sept. 18 issue of JAMA.

Dr. Hsia of the University of California, San Francisco, and her coauthors examined rates of ED utilization in California from 2005 to 2010 and broke down the data by insurance status (JAMA 2013;310:1181-81). They looked at adult patients younger than 65 years, because these patients "have experienced the greatest changes in insurance coverage in recent years, and are likely to see the biggest shifts as a result of health care reform."

The investigators based their retrospective analysis on the California Office of Statewide Health Planning and Development’s Emergency Discharge Data and Patient Discharge Data. The study grouped patients by insurance status: Medicaid, private, uninsured or self-pay, or other (workers’ compensation, CHAMPUS/TRICARE, Title V, Veterans Affairs, and similar coverage).

Overall, ED visits jumped from 5.4 million to 6.1 million over the 5-year period – a 13% increase. While visits for patients with private insurance barely increased, with a 5-year difference of just 1%, visits by Medicaid patients increased by 35.6%, and visits by uninsured patients rose 25.4%. Visits by patients with coverage in the "other" category decreased by more than 10%, the authors noted.
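The 13% overall increase is ordinary percent-change arithmetic on the reported totals. A minimal Python sketch, assuming only the rounded figures given here (5.4 and 6.1 million visits); the helper name `percent_change` is hypothetical, and because the inputs are rounded, the result is approximate:

```python
def percent_change(before: float, after: float) -> float:
    """Relative change from `before` to `after`, in percent."""
    if before == 0:
        raise ValueError("baseline must be nonzero")
    return (after - before) / before * 100


# Total ED visits in millions, 2005 and 2010, as reported (rounded).
overall = percent_change(5.4, 6.1)
print(f"Overall change: {overall:.1f}%")
```

Applying the same helper to each insurance group would require that group's visit counts, which are not given here, so only the overall change is shown.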

Medicaid patients also maintained the highest rate of ED visits for ambulatory care sensitive conditions, such as hypertension, which are potentially preventable with primary care. Among Medicaid recipients, the average yearly rate of ED visits for these conditions was 54.76/1,000, compared with 10.93/1,000 for privately insured patients and 16.6/1,000 for uninsured patients.
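The gap between coverage groups is easier to see as a rate ratio. The authors did not report these ratios; the sketch below simply divides the rates quoted above, using a hypothetical `rate_ratio` helper and, as an assumption, the privately insured group as the default reference:

```python
# Average yearly ED visit rates per 1,000 for ambulatory care sensitive
# conditions, as reported in the article.
RATES_PER_1000 = {
    "Medicaid": 54.76,
    "private": 10.93,
    "uninsured": 16.6,
}


def rate_ratio(group: str, reference: str = "private") -> float:
    """Ratio of one group's rate to a reference group's rate."""
    return RATES_PER_1000[group] / RATES_PER_1000[reference]


for group in RATES_PER_1000:
    print(f"{group}: {rate_ratio(group):.2f}x the privately insured rate")
```

On these figures, the Medicaid rate is about five times the privately insured rate and about 3.3 times the uninsured rate.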

Rates of these visits followed the same coverage-dependent pattern over the study period: they rose 6.8% among Medicaid beneficiaries and 6.2% among the uninsured but fell 0.7% among privately insured patients.

Neither Dr. Hsia nor her coauthors reported any financial disclosures.

FROM JAMA

Major finding: From 2005 to 2010, ED visits among California Medicaid patients increased 35.6% while visits for privately insured patients remained almost the same.

Data source: Claims data from several state sources.

Disclosures: The investigators reported no financial conflicts of interest.

A third of older adults may have biomarkers of preclinical Alzheimer’s

A combination of cerebrospinal fluid biomarkers and simple cognitive testing identified stages of preclinical Alzheimer’s that were associated with cognitive decline and death over a decade of follow-up in a prospective, longitudinal study.

Preclinical disease was present in 31% of adults aged 65 years or older who were living independently in the community and was a reliable predictor of progression. The findings suggest that preclinical staging is not only possible, but could be a useful adjunct for stratifying research populations in therapeutic trials, according to Dr. Stephanie J. Vos, the lead investigator (Lancet Neurol. 2013 Sept. 4 [doi:10.1016/S1474-4422(13)70194-7]).

The study aimed to identify the prevalence and long-term outcomes of preclinical Alzheimer’s disease in elderly subjects who were cognitively normal at baseline. Dr. Vos, of the Alzheimer’s Center Limburg at Maastricht (the Netherlands) University, and her colleagues used the combination of biomarkers and cognitive testing to define preclinical stages similar to those recently proposed by the Preclinical Working Group of the National Institute on Aging and the Alzheimer’s Association. These criteria propose three progressive stages for cognitively normal subjects:

• Stage 1, cognitively normal individuals with abnormal amyloid markers.

• Stage 2, abnormal amyloid and neuronal injury markers.

• Stage 3, abnormal amyloid and neuronal injury markers with subtle cognitive changes.

Dr. Vos’ study involved 311 subjects who were cognitively normal at baseline. They underwent lumbar puncture to ascertain cerebrospinal fluid levels of beta-amyloid-42 (Abeta-42), total tau, and phosphorylated tau. They also completed cognitive testing with the Clinical Dementia Rating (CDR) scale and the Mini-Mental State Examination (MMSE), and had additional cognitive testing each year thereafter. The primary outcome was the proportion of subjects in each preclinical stage at baseline. Secondary outcomes were progression of cognitive decline and mortality.

CSF levels were dichotomized as normal or abnormal using cutoffs the investigators derived: the values that best differentiated subjects with no baseline memory deficits from those in a separate cohort with symptomatic Alzheimer’s. Abnormal levels were defined as Abeta-42 less than 459 pg/mL, total tau greater than 339 pg/mL, and phosphorylated tau greater than 67 pg/mL.
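The dichotomization step amounts to three threshold comparisons. A minimal Python sketch, assuming the strict "less than"/"greater than" wording above means that a value exactly at a cutoff counts as normal; the names `dichotomize` and `CsfFlags` and the example values are hypothetical, not study data:

```python
from dataclasses import dataclass

# Cutoffs reported by the investigators, in pg/mL.
ABETA42_CUTOFF = 459.0    # abnormal if below
TOTAL_TAU_CUTOFF = 339.0  # abnormal if above
P_TAU_CUTOFF = 67.0       # abnormal if above


@dataclass(frozen=True)
class CsfFlags:
    amyloid_abnormal: bool
    total_tau_abnormal: bool
    p_tau_abnormal: bool


def dichotomize(abeta42: float, total_tau: float, p_tau: float) -> CsfFlags:
    """Label each CSF measurement abnormal (True) or normal (False).

    Assumption: a value exactly at a cutoff is treated as normal.
    """
    return CsfFlags(
        amyloid_abnormal=abeta42 < ABETA42_CUTOFF,
        total_tau_abnormal=total_tau > TOTAL_TAU_CUTOFF,
        p_tau_abnormal=p_tau > P_TAU_CUTOFF,
    )


# Illustrative values only: low Abeta-42, normal total tau, high p-tau.
print(dichotomize(abeta42=400, total_tau=300, p_tau=70))
```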

A CDR sum of boxes score of 0 was considered normal memory; scores of 0.5 or higher during follow-up indicated progression to symptomatic Alzheimer’s.

Subjects were stratified according to a combination of memory scores and biomarkers. Normal subjects had no memory impairment and normal biomarkers. Subjects were classified as stage 1 if only their Abeta-42 was abnormal. Stage 2 subjects had abnormal Abeta-42 and abnormal total or phosphorylated tau levels. Stage 3 subjects had abnormal biomarkers plus memory impairment equal to 0.5 on the CDR. Those in stage 1, 2, or 3 were considered to have preclinical Alzheimer’s.

Those who had normal Abeta-42 but abnormal tau – a marker of neuronal injury – were considered to have suspected non-Alzheimer’s pathophysiology (SNAP), regardless of their baseline memory score.
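The grouping rules above can be written as a small decision function. This is a sketch of the scheme as described in this article, not the investigators' code. It assumes that "abnormal tau" means either total or phosphorylated tau is abnormal, and it returns `None` (unclassified) for combinations the article does not assign, namely memory impairment with normal biomarkers, or with abnormal amyloid but normal tau. The function name `classify` is hypothetical:

```python
from typing import Optional


def classify(amyloid_abnormal: bool,
             tau_abnormal: bool,
             cognitive_impairment: bool) -> Optional[str]:
    """Return the baseline group for one subject, or None if unclassified.

    tau_abnormal: True if total tau OR phosphorylated tau is abnormal.
    cognitive_impairment: True if memory impairment was present at baseline.
    """
    if not amyloid_abnormal:
        if tau_abnormal:
            # Normal Abeta-42 with abnormal tau: SNAP, whatever the memory score.
            return "SNAP"
        # Normal biomarkers: "normal" only if memory is also unimpaired.
        return None if cognitive_impairment else "normal"
    if not tau_abnormal:
        # Amyloid only: stage 1 if memory is unimpaired (assumed unclassified otherwise).
        return None if cognitive_impairment else "stage 1"
    # Abnormal amyloid and tau: stage 3 with impairment, stage 2 without.
    return "stage 3" if cognitive_impairment else "stage 2"


print(classify(amyloid_abnormal=True, tau_abnormal=True, cognitive_impairment=False))
```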

Subjects were a mean of 73 years old at baseline, with a mean MMSE score of 28.9 and a mean CDR sum of boxes score of 0.03. One-third (34%) carried the high-risk apolipoprotein E (APOE) epsilon-4 allele.

At baseline, 129 (41%) were classified as normal; 47 (15%) as stage 1; 36 (12%) as stage 2; 13 (4%) as stage 3; and 72 (23%) as being in the SNAP group. The remaining 14 (5%) were unclassified.
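The baseline figures are internally consistent: the six groups add up to the 311 enrolled subjects, and stages 1 through 3 together give the 31% preclinical prevalence reported earlier. A short Python check using only the counts above:

```python
# Baseline group sizes as reported.
BASELINE = {
    "normal": 129,
    "stage 1": 47,
    "stage 2": 36,
    "stage 3": 13,
    "SNAP": 72,
    "unclassified": 14,
}

total = sum(BASELINE.values())
assert total == 311, "group counts should add up to the cohort size"

for group, n in BASELINE.items():
    print(f"{group:>12}: {n:3d} ({100 * n / total:.0f}%)")

# Preclinical Alzheimer's = stages 1 through 3 combined.
preclinical = sum(BASELINE[s] for s in ("stage 1", "stage 2", "stage 3"))
print(f"Preclinical AD (stages 1-3): {preclinical} ({100 * preclinical / total:.0f}%)")
```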

Preclinical Alzheimer’s (stages 1-3) was significantly more prevalent among those older than 72 years than in those younger (37% vs. 26%), and in APOE epsilon-4 carriers than in noncarriers (47% vs. 23%).

At 5 years, 110 subjects were available for follow-up; 14 were available at 10 years. By the end of the study, 20 subjects had died.

After a median follow-up of 4 years, progression had occurred in 2% of the normal group; 13% of those in stage 1; 25% of those in stage 2; 54% of those in stage 3; 6% of those in the SNAP group, and 29% of those in the unclassified group, for a total of 32 subjects.

Of the 32 subjects who progressed, 22 (69%) developed symptomatic Alzheimer’s with a CDR sum of boxes score of 0.5, 6 (19%) developed CDR 1 symptomatic disease, and 4 (13%) developed CDR 2 symptomatic disease.
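These three percentages are shares of the 32 subjects who progressed. One detail matters when recomputing them in Python: 4/32 is exactly 12.5%, and the built-in `round()` rounds halves to the nearest even integer (giving 12), whereas the article's 13% corresponds to rounding halves up. A minimal sketch using the standard `decimal` module to round half up; the helper name `pct_half_up` is hypothetical:

```python
from decimal import Decimal, ROUND_HALF_UP

# Severity reached by the subjects who progressed, as reported.
progressed = {"CDR 0.5": 22, "CDR 1": 6, "CDR 2": 4}
n = sum(progressed.values())  # 32 subjects in total


def pct_half_up(count: int, total: int) -> int:
    """Percentage of total, rounded to a whole number with halves rounded up."""
    exact = Decimal(100) * count / total
    return int(exact.quantize(Decimal("1"), rounding=ROUND_HALF_UP))


for level, count in progressed.items():
    print(f"{level}: {count}/{n} = {pct_half_up(count, n)}%")

# Built-in round() uses round-half-to-even, so 12.5 becomes 12, not 13.
print(round(100 * 4 / 32))
```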

Interestingly, the authors noted, "neither age (younger than 72 years vs. 72 years or older) nor APOE genotype predicted the rate of decline," although these subanalyses had limited statistical power because of the small sample sizes. "Although APOE epsilon-4 is often a good predictor of cognitive decline in unselected populations, the absence of its prognostic utility in individuals with AD pathological abnormalities is consistent with findings from previous studies."

After adjustment for multiple covariates, subjects with baseline stage 1 disease were not significantly more likely to progress than was the normal group. However, those in baseline stages 2 and 3 were more likely to progress (hazard ratios 14.3 and 33.8, respectively). Those in the SNAP group were not at a significantly increased risk of progression, compared with the normal group.

After adjustment for covariates, the risk of death was significantly greater in those with baseline preclinical disease (HR 6.2). When the stages were assessed individually, the risk rose with each stage: HR 3.7 for stage 1, 6.0 for stage 2, and 31.5 for stage 3. Those in the SNAP group were 5.2 times more likely to die by the end of follow-up than were those in the normal group.

Nine subjects with baseline preclinical disease who died underwent autopsy. Of these, one had low-level neuropathological changes consistent with Alzheimer’s, and the other eight had intermediate or high levels of such changes.

Four subjects in the SNAP group who died underwent autopsy. Of these, three had low-level neuropathological changes, including vascular comorbidities. All had a neuritic plaque score of 0. This finding suggests that the cognitive changes were linked to other disorders, the authors said.

Dr. Vos received support from the Center for Translational Molecular Medicine, project LeARN and the EU/EFPIA Innovative Medicines Initiative Joint Undertaking, and Internationale Stichting Alzheimer Onderzoek. Several coauthors were investigators for industry-sponsored studies testing anti-dementia drugs or had ties with pharmaceutical companies developing Alzheimer’s diagnostic tests or therapies.

On Twitter @Alz_Gal

The study by Dr. Stephanie Vos and her colleagues supports the emerging understanding of preclinical staging of Alzheimer’s disease – a concept useful not only for identifying at-risk populations, but for stratifying patient groups in therapeutic trials, Dr. Ronald Petersen wrote in an accompanying editorial (Lancet Neurol. 2013 Sept. 4 [doi:10.1016/S1474-4422(13)70217-5]).

However, he noted, the authors’ data on disease progression is "a bit more difficult to interpret than the frequencies of participants in each stage of preclinical AD."

For example, he noted, the researchers "chose to label the category for the next phase of progression in the AD spectrum as symptomatic AD rather than the more conventional mild cognitive impairment due to AD, amnestic MCI, or prodromal AD. The reason for this terminology is unclear and is likely to add to confusion in the published work."

Dr. Vos and her colleagues’ dichotomization of subjects as normal or abnormal at baseline made no allowance for a symptomatic predementia phase, a position that, he noted, "is inconsistent with the published work from other groups in the specialty." This elimination of mild cognitive impairment from the preclinical staging equation complicates any comparison to prior work.

"The investigators [also] contend that the Clinical Dementia Rating scale is sufficient to verify symptomatic AD irrespective of cognitive test results. The CDR is a staging and not a diagnostic instrument; thus, why all normal participants in this study who progressed were classified as having a diagnosis of symptomatic AD is not clear."

"Notwithstanding these concerns, this study is an important contribution to the published work and supports the NIA-AA criteria for preclinical AD," Dr. Petersen concluded. "Importantly, despite the differences in methodology and types of participants, the findings from this study, which used CSF, and the Mayo Clinic Study of Aging, which used neuroimaging, converge convincingly."

Dr. Ronald Petersen is director of the Mayo Clinic Alzheimer’s Disease Research Center in Rochester, Minn. He had no financial disclosures.

The study by Dr. Stephanie Vos and her colleagues supports the emerging understanding of preclinical staging of Alzheimer’s disease – a concept useful not only for identifying at-risk populations, but for stratifying patient groups in therapeutic trials, Dr. Ronald Petersen wrote in an accompanying editorial (Lancet Neurol. 2013 Sept. 4 [doi:10.1016/S1474-4422(13)70217-5]).

However, he noted, the authors’ data on disease progression is "a bit more difficult to interpret than the frequencies of participants in each stage of preclinical AD."

For example, he noted, the researchers "chose to label the category for the next phase of progression in the AD spectrum as symptomatic AD rather than the more conventional mild cognitive impairment due to AD, amnestic MCI, or prodromal AD. The reason for this terminology is unclear and is likely to add to confusion in the published work."

Dr. Vos and her colleagues’ dichotomization of subjects as normal or abnormal at baseline made no allowance for a symptomatic predementia, he noted – a position that "is inconsistent with the published work from other groups in the specialty." This elimination of mild cognitive impairment from the preclinical staging equation complicates any comparison to prior work.

"The investigators [also] contend that the Clinical Dementia Rating scale is sufficient to verify symptomatic AD irrespective of cognitive test results. The CDR is a staging and not a diagnostic instrument; thus, why all normal participants in this study who progressed were classified as having a diagnosis of symptomatic AD is not clear."

"Notwithstanding these concerns, this study is an important contribution to the published work and supports the NIA-AA criteria for preclinical AD," Dr. Petersen concluded. "Importantly, despite the differences in methodology and types of participants, the findings from this study, which used CSF, and the Mayo Clinic Study of Aging, which used neuroimaging, converge convincingly."

Dr. Ronald Petersen is director of the Mayo Clinic Alzheimer’s Disease Research Center in Rochester, Minn. He had no financial disclosures.

The study by Dr. Stephanie Vos and her colleagues supports the emerging understanding of preclinical staging of Alzheimer’s disease – a concept useful not only for identifying at-risk populations, but for stratifying patient groups in therapeutic trials, Dr. Ronald Petersen wrote in an accompanying editorial (Lancet Neurol. 2013 Sept. 4 [doi:10.1016/S1474-4422(13)70217-5]).

However, he noted, the authors’ data on disease progression is "a bit more difficult to interpret than the frequencies of participants in each stage of preclinical AD."

For example, he noted, the researchers "chose to label the category for the next phase of progression in the AD spectrum as symptomatic AD rather than the more conventional mild cognitive impairment due to AD, amnestic MCI, or prodromal AD. The reason for this terminology is unclear and is likely to add to confusion in the published work."

Dr. Vos and her colleagues’ dichotomization of subjects as normal or abnormal at baseline made no allowance for a symptomatic predementia, he noted – a position that "is inconsistent with the published work from other groups in the specialty." This elimination of mild cognitive impairment from the preclinical staging equation complicates any comparison to prior work.

"The investigators [also] contend that the Clinical Dementia Rating scale is sufficient to verify symptomatic AD irrespective of cognitive test results. The CDR is a staging and not a diagnostic instrument; thus, why all normal participants in this study who progressed were classified as having a diagnosis of symptomatic AD is not clear."

"Notwithstanding these concerns, this study is an important contribution to the published work and supports the NIA-AA criteria for preclinical AD," Dr. Petersen concluded. "Importantly, despite the differences in methodology and types of participants, the findings from this study, which used CSF, and the Mayo Clinic Study of Aging, which used neuroimaging, converge convincingly."

Dr. Ronald Petersen is director of the Mayo Clinic Alzheimer’s Disease Research Center in Rochester, Minn. He had no financial disclosures.

A combination of cerebrospinal fluid biomarkers and simple cognitive testing identified stages of preclinical Alzheimer’s that were associated with cognitive decline and death over a decade of follow-up in a prospective, longitudinal study.

Preclinical disease was present in 31% of adults aged 65 years or older who were living independently in the community and was a reliable predictor of progression. The findings suggest that preclinical staging is not only possible, but could be a useful adjunct for stratifying research populations in therapeutic trials, according Dr. Stephanie J. Vos, the lead investigator (Lancet Neurol. 2013 Sept. 4 [doi:10.1016/S1474-4422(13)70194-7]).

The study aimed to identify the prevalence and long-term outcomes of preclinical Alzheimer’s disease in elderly subjects who were cognitively normal at baseline. Dr. Vos, of the Alzheimer’s Center Limburg at Maastricht (the Netherlands) University, and her colleagues used the combination of biomarkers and cognitive testing to define preclinical stages similar to those recently proposed by the Preclinical Working Group of the National Institute on Aging and the Alzheimer’s Association. These criteria propose three progressive stages for cognitively normal subjects:

• Stage 1, cognitively normal individuals with abnormal amyloid markers.

• Stage 2, abnormal amyloid and neuronal injury markers.

• Stage 3, abnormal amyloid and neuronal injury markers with subtle cognitive changes.

Dr. Vos’ study involved 311 subjects who were cognitively normal at baseline. They underwent lumbar puncture to ascertain cerebrospinal fluid levels of beta-amyloid-42 (Abeta-42), total tau, and phosphorylated tau. They also completed cognitive testing with the Clinical Dementia Rating (CDR) scale and Mini Mental State Exam (MMSE). Each year thereafter, subjects had additional cognitive testing. The primary outcome was the proportion of patients in each preclinical stage at baseline. Secondary outcomes were progression of cognitive decline and mortality.

CSF samples were dichotomized as normal or abnormal based on a level that the investigators determined. The cutoff values for abnormal biomarker measurements that best differentiated subjects with no baseline memory deficits from those in a separate cohort with symptomatic Alzheimer’s were Abeta-42 levels less than 459 pg/mL, total tau levels greater than 339 pg/mL, and phosphorylated tau levels greater than 67 pg/mL.

A CDR sum of boxes score of 0 was considered normal memory; scores of 0.5 or higher during follow-up indicated progression to symptomatic Alzheimer’s.

Subjects were stratified according to a combination of memory scores and biomarkers. Normal subjects had no memory impairment and normal biomarkers. Subjects were classified as stage 1 if only their Abeta-42 was abnormal. Stage 2 patients had abnormal Abeta-42and abnormal total or phosphorylated tau levels. Stage 3 subjects had abnormal biomarkers plus memory impairment equal to 0.5 on the CDR. Those in stage 1, 2, or 3 were considered to have preclinical Alzheimer’s.

Those who had normal Abeta-42 but abnormal tau – a marker of neuronal injury – were considered to have suspected non-Alzheimer’s pathophysiology (SNAP), regardless of their baseline memory score.

Patients were a mean of 73 years old at baseline. They had a mean MMSE score was 28.9 and a mean CDR sum of boxes score of 0.03. One-third (34%) were positive for the high-risk apolipoprotein (APOE) epsilon-4 allele.

At baseline, 129 (41%) were classified as normal; 47 (15%) as stage 1; 36 (12%) as stage 2; 13 (4%) as stage 3; and 72 (23%) as being in the SNAP group. The remaining 14 (5%) were unclassified.

Preclinical Alzheimer’s (stages 1-3) was significantly more prevalent among those older than 72 years than in those younger (37% vs. 26%), and in APOE epsilon-4 carriers than in noncarriers (47% vs. 23%).

At 5 years, 110 subjects were available for follow-up; 14 were available at 10 years. By the end of the study, 20 subjects had died.

After a median follow-up of 4 years, progression had occurred in 2% of the normal group; 13% of those in stage 1; 25% of those in stage 2; 54% of those in stage 3; 6% of those in the SNAP group, and 29% of those in the unclassified group, for a total of 32 subjects.

Of those who progressed, symptomatic Alzheimer’s with a CDR sum of boxes score of 0.5 occurred in 22 (69%), CDR 1 symptomatic disease developed in 6 (19%), and CDR 2 symptomatic disease arose in 4 (13%).

Interestingly, the authors noted, "neither age (younger than 72 years vs. 72 years or older) nor APOE genotype predicted the rate of decline," although these subanalyses had limited statistical power because of the small sample sizes. "Although APOE epsilon-4 is often a good predictor of cognitive decline in unselected populations, the absence of its prognostic utility in individuals with AD pathological abnormalities is consistent with findings from previous studies."

After adjustment for multiple covariates, subjects with baseline stage 1 disease were not significantly more likely to progress than was the normal group. However, those in baseline stages 2 and 3 were more likely to progress (hazard ratio 14.3 and 33.8, respectively). Those in the SNAP group were not at a significantly increased risk of progression, compared with the normal group.

After adjustment for covariates, the risk of death was significantly greater in those with baseline preclinical disease (HR 6.2). When the stages were individually assessed, the risk increased as the stages did: HR 3.7 for stage 1, 6.0 for stage 2, and 31.5 for stage 3. Those in the SNAP group were 5.2 times more likely to die by the end of follow-up than were those in the normal group.

Nine subjects with baseline preclinical disease who died received a postpartum autopsy diagnosis. Of these, one had low neuropathological changes consistent with Alzheimer’s and the rest had intermediate-high changes.

Four subjects in the SNAP group who died underwent autopsy. Of these, three had low-level neuropathological changes, including vascular comorbidities. All had a neuritic plaque score of 0. This finding suggests that the cognitive changes were linked to other disorders, the authors said.

Dr. Vos received support from the Center for Translational Molecular Medicine, project LeARN and the EU/EFPIA Innovative Medicines Initiative Joint Undertaking, and Internationale Stichting Alzheimer Onderzoek. Several coauthors were investigators for industry-sponsored studies testing anti-dementia drugs or had ties with pharmaceutical companies developing Alzheimer’s diagnostic tests or therapies.

On Twitter @Alz_Gal

A combination of cerebrospinal fluid biomarkers and simple cognitive testing identified stages of preclinical Alzheimer’s that were associated with cognitive decline and death over a decade of follow-up in a prospective, longitudinal study.

Preclinical disease was present in 31% of adults aged 65 years or older who were living independently in the community and was a reliable predictor of progression. The findings suggest that preclinical staging is not only possible, but could be a useful adjunct for stratifying research populations in therapeutic trials, according Dr. Stephanie J. Vos, the lead investigator (Lancet Neurol. 2013 Sept. 4 [doi:10.1016/S1474-4422(13)70194-7]).

The study aimed to identify the prevalence and long-term outcomes of preclinical Alzheimer’s disease in elderly subjects who were cognitively normal at baseline. Dr. Vos, of the Alzheimer’s Center Limburg at Maastricht (the Netherlands) University, and her colleagues used the combination of biomarkers and cognitive testing to define preclinical stages similar to those recently proposed by the Preclinical Working Group of the National Institute on Aging and the Alzheimer’s Association. These criteria propose three progressive stages for cognitively normal subjects:

• Stage 1, cognitively normal individuals with abnormal amyloid markers.

• Stage 2, abnormal amyloid and neuronal injury markers.

• Stage 3, abnormal amyloid and neuronal injury markers with subtle cognitive changes.

Dr. Vos’ study involved 311 subjects who were cognitively normal at baseline. They underwent lumbar puncture to ascertain cerebrospinal fluid levels of beta-amyloid-42 (Abeta-42), total tau, and phosphorylated tau. They also completed cognitive testing with the Clinical Dementia Rating (CDR) scale and Mini Mental State Exam (MMSE). Each year thereafter, subjects had additional cognitive testing. The primary outcome was the proportion of patients in each preclinical stage at baseline. Secondary outcomes were progression of cognitive decline and mortality.

CSF samples were dichotomized as normal or abnormal based on a level that the investigators determined. The cutoff values for abnormal biomarker measurements that best differentiated subjects with no baseline memory deficits from those in a separate cohort with symptomatic Alzheimer’s were Abeta-42 levels less than 459 pg/mL, total tau levels greater than 339 pg/mL, and phosphorylated tau levels greater than 67 pg/mL.

A CDR sum of boxes score of 0 was considered normal memory; scores of 0.5 or higher during follow-up indicated progression to symptomatic Alzheimer’s.

Subjects were stratified according to a combination of memory scores and biomarkers. Normal subjects had no memory impairment and normal biomarkers. Subjects were classified as stage 1 if only their Abeta-42 was abnormal. Stage 2 patients had abnormal Abeta-42and abnormal total or phosphorylated tau levels. Stage 3 subjects had abnormal biomarkers plus memory impairment equal to 0.5 on the CDR. Those in stage 1, 2, or 3 were considered to have preclinical Alzheimer’s.

Those who had normal Abeta-42 but abnormal tau – a marker of neuronal injury – were considered to have suspected non-Alzheimer’s pathophysiology (SNAP), regardless of their baseline memory score.

Patients were a mean of 73 years old at baseline. They had a mean MMSE score was 28.9 and a mean CDR sum of boxes score of 0.03. One-third (34%) were positive for the high-risk apolipoprotein (APOE) epsilon-4 allele.

At baseline, 129 (41%) were classified as normal; 47 (15%) as stage 1; 36 (12%) as stage 2; 13 (4%) as stage 3; and 72 (23%) as being in the SNAP group. The remaining 14 (5%) were unclassified.

Preclinical Alzheimer’s (stages 1-3) was significantly more prevalent among those older than 72 years than in those younger (37% vs. 26%), and in APOE epsilon-4 carriers than in noncarriers (47% vs. 23%).

At 5 years, 110 subjects were available for follow-up; 14 were available at 10 years. By the end of the study, 20 subjects had died.

After a median follow-up of 4 years, progression had occurred in 2% of the normal group; 13% of those in stage 1; 25% of those in stage 2; 54% of those in stage 3; 6% of those in the SNAP group, and 29% of those in the unclassified group, for a total of 32 subjects.

Of those who progressed, symptomatic Alzheimer’s with a CDR sum of boxes score of 0.5 occurred in 22 (69%), CDR 1 symptomatic disease developed in 6 (19%), and CDR 2 symptomatic disease arose in 4 (13%).

Interestingly, the authors noted, "neither age (younger than 72 years vs. 72 years or older) nor APOE genotype predicted the rate of decline," although these subanalyses had limited statistical power because of the small sample sizes. "Although APOE epsilon-4 is often a good predictor of cognitive decline in unselected populations, the absence of its prognostic utility in individuals with AD pathological abnormalities is consistent with findings from previous studies."

After adjustment for multiple covariates, subjects in baseline stage 1 were not significantly more likely to progress than were those in the normal group. However, those in baseline stages 2 and 3 were more likely to progress (hazard ratios 14.3 and 33.8, respectively). Those in the SNAP group were not at significantly increased risk of progression compared with the normal group.

After adjustment for covariates, the risk of death was significantly greater in those with baseline preclinical disease (HR 6.2). When the stages were assessed individually, the risk rose with each stage: HR 3.7 for stage 1, 6.0 for stage 2, and 31.5 for stage 3. Those in the SNAP group had a 5.2-fold higher risk of death by the end of follow-up than did those in the normal group.

Nine subjects with baseline preclinical disease who died underwent autopsy. Of these, one had low-level neuropathological changes consistent with Alzheimer’s, and the remaining eight had intermediate to high levels of change.

Four subjects in the SNAP group who died underwent autopsy. Of these, three had low-level neuropathological changes, including vascular comorbidities. All had a neuritic plaque score of 0. This finding suggests that the cognitive changes were linked to other disorders, the authors said.

Dr. Vos received support from the Center for Translational Molecular Medicine, project LeARN and the EU/EFPIA Innovative Medicines Initiative Joint Undertaking, and Internationale Stichting Alzheimer Onderzoek. Several coauthors were investigators for industry-sponsored studies testing anti-dementia drugs or had ties with pharmaceutical companies developing Alzheimer’s diagnostic tests or therapies.

On Twitter @Alz_Gal

FROM THE LANCET NEUROLOGY

Major finding: Cognitively normal elders who had abnormal levels of beta amyloid or tau in CSF had up to a 33-fold greater risk of developing symptomatic Alzheimer’s disease compared with those who had normal biomarker levels.

Data source: A prospective, longitudinal study of 311 adults followed for up to 15 years.

Disclosures: Dr. Vos received support from the Center for Translational Molecular Medicine, project LeARN and the EU/EFPIA Innovative Medicines Initiative Joint Undertaking, and Internationale Stichting Alzheimer Onderzoek. Several coauthors were investigators for industry-sponsored studies testing anti-dementia drugs or had ties with pharmaceutical companies developing Alzheimer’s diagnostic tests or therapies.

Dexamethasone improves outcomes for infants with bronchiolitis, atopy history

A 5-day course of dexamethasone significantly shortened hospital stays for infants with bronchiolitis who had eczema or close relatives with asthma.

The randomized, placebo-controlled study suggests that a family history of atopy could identify a subset of babies who would benefit from the addition of a corticosteroid to the usual salbutamol therapy for acute bronchiolitis, according to Dr. Khalid Alansari and colleagues. The report was published in the Sept. 16 issue of Pediatrics.

The researchers examined 7-day outcomes in 200 infants with acute bronchiolitis who were at high risk of asthma, as determined by having eczema or at least one first-degree relative with asthma. All of the children (mean age 3.5 months) were admitted to a pediatric hospital for treatment, wrote Dr. Alansari of Weill Cornell Medical College, Doha, Qatar, and coauthors. Infants who received dexamethasone were discharged 8 hours earlier than were those receiving placebo. The mean duration of symptoms was 4.5 days (Pediatrics 2013 Sept. 13 [doi: 10.1542/peds.2012-3746]).

The study’s primary outcome was time until discharge. Secondary outcomes included the number of patients who needed epinephrine, readmission for a short stay in an infirmary, and return visits to the emergency department or another clinic for the same illness. A study nurse made daily calls to assess the patients after discharge.

Infants in the dexamethasone group were discharged at a mean of 18.6 hours – significantly sooner than those in the control group (27 hours). Epinephrine was necessary for 19 infants in the dexamethasone group and 31 in the placebo group – again a significant difference.

Similar numbers in each group needed readmission and additional outpatient visits in the week after discharge. During the follow-up week, 22% of the dexamethasone group needed infirmary care and the mean stay was 17 hours, compared with 21% of the placebo group with a mean stay of 18 hours.

Nineteen in the dexamethasone group and 11 in the placebo group made a clinic visit (18.6% vs. 11%); this difference was not significant.

The chest radiograph was normal in about 37% of infants studied. About half showed minor infiltrates; 15% had a lobar collapse or consolidation.

More than 70% had a full sibling with asthma. About 20% had a parent with the disease; in 5%, both parents had it. About 20% of patients had both eczema and a first-degree relative with asthma.

All of the infants received 2.5 mg of nebulized salbutamol at baseline and at 30, 60, and 120 minutes, and then every 2 hours until discharge. Nebulized epinephrine (0.5 mL/kg, with a maximum dose of 5 mL) was available if needed. In addition, they were randomized to either placebo or a 5-day course of dexamethasone (1 mg/mL) at a dose of 1 mL/kg (1 mg/kg) on day 1, reduced to 0.6 mL/kg on days 2-5.

The study was sponsored by Hamad Medical Corporation. The authors reported no financial conflicts.

Diagnosing Alzheimer’s: The eyes may have it

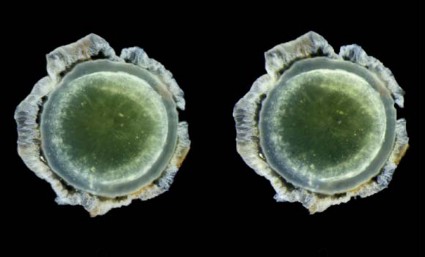

You never know what will happen when you look into a mouse’s eyes.

Twelve years ago, Dr. Lee Goldstein was investigating the effect of reactive oxygen species on the brain of a young Alzheimer’s model mouse. Holding the tiny creature in his hand, he carefully inserted a minuscule microdialysis probe through its skull. As he did, he happened to look right into the mouse’s face. And since he was doing some unrelated work on cataracts at the time, something unusual caught his very practiced gaze.

"The mouse had a cataract. I looked at the other eye, and there was a cataract there, too. That’s very unusual – really not ever seen – in a mouse this age."

Then he looked at all the other mice he was using in that experiment, all of which were older. They all had bilateral cataracts. "My first thought was, ‘This can’t be related to Alzheimer’s disease,’" he said.

But in fact, he said, it appeared to be. He and his lab soon showed that the cataracts contained a large concentration of aggregated beta-amyloid (Abeta) in the same fraction that’s measured in today’s cerebrospinal fluid Alzheimer’s biomarker tests.

That first observation has given rise to two investigational noninvasive amyloid eye tests, which Dr. Goldstein envisions could someday be part of everyone’s annual physical exam.

In people destined to develop Alzheimer’s, some research suggests that Abeta proteins may begin to accumulate in the lens long before they build up to dangerous levels in the brain. If this turns out to be a reliable marker of risk, it could be a sign that would trigger early, presymptomatic Alzheimer’s treatment.

That’s in the future, though, because no such treatment exists yet. For now, Dr. Goldstein said, an amyloid eye test could prove invaluable in developing one. One reason that symptomatic patients don’t respond to investigational drugs could be that by the time they are treated, irreversible brain damage has already occurred.

"Once you have cognitive symptoms, the horse is not only out of the barn, it’s run out of the state," said Dr. Goldstein, director of the molecular aging and development laboratory at Boston University. "I hate the term ‘mild cognitive impairment,’ because by the time you have that, there’s nothing mild about it."

Researchers now almost universally agree that the best way to get a true picture of any drug’s potential effectiveness in Alzheimer’s will be to start treatment before symptoms set in. In addition, Dr. Goldstein said, "Research pools are polluted. Control groups contain subjects who would develop Alzheimer’s if they live long enough," which could be skewing study results. Lens amyloid measurements might help stratify groups in drug studies, and might even provide a way to track a drug’s very early effects on amyloid.

But that is a future yet to be determined. In the meantime, researchers still need definitive proof that supranuclear amyloid cataracts are inextricably linked to the amyloid brain plaques of Alzheimer’s.

Initial findings

In 2003, Dr. Goldstein, then at Harvard Medical School, published his original proof of concept study. It comprised postmortem eye and brain specimens from nine subjects with Alzheimer’s and eight controls without the disease, and samples of primary aqueous humor from three people without the disorder who were undergoing cataract surgery (Lancet 2003;361:1258-65).

Abeta-40 and Abeta-42 were present in all of the lenses, in amounts similar to those seen in the corresponding brains. But in patients with Alzheimer’s, the protein aggregated into clumps within the lens fiber cells, forming unusual supranuclear cataracts at the equatorial periphery that appeared to be different from common age-related cataracts and that weren’t present in the control subjects.

The cataract location is an important clue to how long the Abeta has been accumulating, Dr. Goldstein said. Lens fiber cells are particularly long-lived, remaining alive for as long as a person lives or until the lens is removed during cataract surgery. The lens starts to form in very early fetal life, with more and more lens cells forming in an outward direction, creating a virtual map of a person’s lifetime, "like the rings of a tree," he said.

Dr. Goldstein and his team discovered that these distinctive cataracts develop in some patients with Alzheimer’s. They appear toward the outer edge of the lens and are composed of the same toxic Abeta protein that builds up in the brain. "The history of amyloid in the body is time stamped in the lens," he said.

As lens fiber cells age, they lose most of their organelles and become transparent – just the right state for a device meant to focus light. "They also make tons of Abeta," Dr. Goldstein said, and it appears to have a very specific function in the eye, one Dr. Rudolph Tanzi and his colleagues at Massachusetts General Hospital, Boston, identified in collaboration with Dr. Goldstein’s team (PLoS ONE 2010;5:e9505).

"It turns out to be a very potent antimicrobial peptide," one of several the eye and brain produce to defend themselves. Amyloid’s sticky nature causes foreign invaders to clump together, so they’re more easily destroyed. This finding also suggests that Abeta could have a similar function in the brain, supporting some theories that Alzheimer’s might be at least partially triggered by a hyperinflammatory response toward an invading pathogen or another immunoreactive incident.

Testing lenses in Down syndrome patients

Interesting as all of that is, it doesn’t prove the theory that the lens amyloid record somehow tracks Alzheimer’s development. But other studies do explore that concept, including one Dr. Goldstein published in 2010. In this study, Dr. Goldstein and his colleagues examined lens amyloid in people with Down syndrome, a group predestined to develop Alzheimer’s (PLoS ONE 2010;5:e10659). The extra copy of chromosome 21 that causes the syndrome also carries an extra copy of the gene for amyloid precursor protein (APP), Abeta’s antecedent, which increases APP production.

The lenses from subjects with Down syndrome, aged 2-69 years, were compared with lenses from control subjects and people with both familial and late-onset Alzheimer’s. "The 2-year-old with Down syndrome in this study actually had more lens amyloid than the adults with familial Alzheimer’s," Dr. Goldstein said. In unpublished data, he added, the protein has even been observed in Down syndrome fetal lenses.

He expanded on this work in a poster presented at the 2013 Alzheimer’s Association International Conference. Dr. Goldstein and his team have developed and validated a laser eye scanning instrument that noninvasively measures how light is scattered by the tiniest particles (in this case, clumps of Abeta protein) within the lens of living human subjects.

"We hypothesize that due to the trisomy of chromosome 21 in Down syndrome (and triplication of the APP gene), which results in increased expression of Abeta in the lens, the intensity of scattered light in Down syndrome patients will be higher than [in] age-matched controls," he noted in the poster.

Not everyone agrees with this idea, however. It has stirred controversy since he first introduced it, when, he said, "mainstream Alzheimer’s research simply didn’t believe it." In fact, studies by at least two other research groups have come to quite different conclusions.

Dr. Charles Eberhart, a pathologist at Johns Hopkins University, Baltimore, published his data in the journal Brain Pathology (2013 June 28 [doi:10.1111/bpa.12070]). The study examined retinas, lenses, and brains from 11 patients with Alzheimer’s, 6 with Parkinson’s, and 6 age-matched controls. Eight eyes (five from Alzheimer’s patients and three from controls) did have cataracts. Dr. Eberhart and his colleagues used immunohistochemistry and Congo red staining to look for amyloid, phosphorylated tau, and alpha-synuclein.

"The short answer is – we didn’t find any amyloid deposits in the lens, or any abnormal tau accumulations," he said in an interview.

The study has two possible interpretations, he said: Either Abeta, tau, and synuclein don’t accumulate in Alzheimer’s eyes as they do in Alzheimer’s brains, or they are there, but simply not detected by his methods. "It certainly might be there. All we can say is that with this method, which is the accepted way of determining amyloid in brain tissue, we didn’t see it in eyes," he said.

The second study, conducted by Dr. Ralph Michael of the Universitat Autònoma de Barcelona and his colleagues, came to a similar conclusion (Exp. Eye Res. 2013;106:5-13). It involved 39 lenses and brains from 21 Alzheimer’s patients, and 15 lenses from age-matched controls. Six of the Alzheimer’s lenses and seven control lenses had cataracts. These investigators used staining methods similar to those in the Hopkins study.

"Beta-amyloid immunohistochemistry was positive in the brain tissues but not in the cornea sample," they wrote. "Lenses from control and AD [Alzheimer’s disease] donors were, without exception, negative after Congo red, thioflavin, and beta-amyloid immunohistochemical staining. ... The absence of staining in AD and control lenses with the techniques employed lead us to conclude that there is no beta-amyloid in lenses from donors with AD or in control cortical cataracts."

Dr. Goldstein said he doesn’t doubt these findings. Congo red staining yields a difficult-to-interpret signal, he said. Amyloid appears red under standard light microscopy but takes on a very characteristic shade, called apple green, under polarized light. "This is an old staining method that’s not very sensitive nor is it specific for Abeta – it’s also highly variable."

Technique is critical, he added. "It took us years to perfect our technique for the lens. It’s very difficult to work with lens, harder to work with old lens, and extremely hard to work with old, sick lens."

Instead of relying solely on Congo red or other staining techniques, Dr. Goldstein’s team confirmed their findings using a combination of biochemical analyses, immunogold electron microscopy, and two different types of mass spectrometry – methods he said are irrefutably accurate. "You can’t argue with this unless you are willing to argue with the very concept of mass spectrometry. It’s the gold standard," he said.

Confirmation in transgenic mice and Down syndrome patients strengthens the hypothesis, he said, as do the conclusions of his most recent paper. It looked at data from 1,249 people included in the Framingham Eye Study, and found a genetic link between a specific type of midlife cataracts (consistent with those previously found in Alzheimer’s) and later cognitive and brain structural changes associated with Alzheimer’s (PLoS ONE 2012;7:e43728).

The culprit appeared to be a mutation of a gene that codes for delta-catenin, which Dr. Goldstein postulated may normally help suppress Abeta production. The altered form, however, appears to affect neuronal structure and is instead associated with an increase in Abeta-42 production in cell culture. The malformed delta-catenin protein was also found throughout the lenses of study subjects with Alzheimer’s, but not in control lenses.

Screening patients in the future?

Dr. Goldstein said he envisions a future in which annual lens exams might guide risk assessment and treatment initiation. But physicians who might someday screen patients certainly won’t have a mass spectrometer in the back room.

He has invented two devices, he said, that will fill that need. The most recent is a laser scanning ophthalmoscope that uses dynamic light scattering to detect the tiniest amyloid particles in the lens, those smaller than 30 nm. This is the device he’s using in the ongoing Boston University/Boston Children’s Hospital study of lens amyloid in children with Down syndrome.

The second device combines optical imaging with aftobetin, a fluorescent amyloid ligand. Dr. Goldstein holds a patent on this device, which he invented in partnership with Cognoptix (formerly Neuroptix), a company he cofounded in 2001, although he is no longer operationally affiliated with it.

Cognoptix has developed the SAPPHIRE II system, a combination drug/device that detects amyloid in the lens using aftobetin. The company licensed aftobetin from the University of California, San Diego. It’s formulated into an ophthalmic ointment administered prior to scanning with the SAPPHIRE II system. The procedure uses fluorescent ligand scanning to detect amyloid aggregates in the lens, said Paul Hartung, president and chief executive officer of the Acton, Mass., company.

"We use an eye-safe laser tuned to pick up the fluorescence. It doesn’t require dilation of the pupil, and it has the capability of actually registering itself in the correct location in the eye," he said in an interview.

SAPPHIRE II has had a busy year, including a proof of concept study published in May and reported at the Alzheimer’s Association International Conference. In this study, the system successfully differentiated five Alzheimer’s patients from five controls (Front. Neurol. 2013 May 27 [doi:10.3389/fneur.2013.00062]).

Cognoptix has begun a second study testing the system against PET amyloid brain imaging in 20 patients with probable Alzheimer’s and 20 controls, Mr. Hartung said.

A third planned study is a pivotal phase III trial that will enroll 400 subjects, all of whom will undergo both the eye exam and PET amyloid imaging. It’s designed to support premarketing approval, Mr. Hartung said. Currently, SAPPHIRE II has an investigational device exemption from the Food and Drug Administration’s Center for Devices and Radiological Health.

"Our end goal is to get this into the general practitioner’s office, where about 40% of Alzheimer’s drug prescriptions are written by general practitioners who really have no data on hand. Right now, based on cognitive assessments, they have only a 50-50 chance of getting the right diagnosis," Mr. Hartung said.

Dr. Goldstein and Mr. Hartung hold financial interests in devices to measure lens amyloid. Dr. Ralph Michael listed no financial disclosures. Dr. Charles Eberhart said he had no relevant financial disclosures.

On Twitter @Alz_Gal

You never know what will happen when you look into a mouse’s eyes.

Twelve years ago, Dr. Lee Goldstein was investigating reactive oxygen species’ effect on the brain of a young Alzheimer’s model mouse. Holding the tiny creature in his hand, he carefully inserted a miniscule microdialysis probe through its skull. As he did, he happened to look right into the mouse’s face. And since he was doing some unrelated work on cataracts at the time, something unusual caught his very practiced gaze.

"The mouse had a cataract. I looked at the other eye, and there was a cataract there, too. That’s very unusual – really not ever seen – in a mouse this age."

Then he looked at all the other mice he was using in that experiment, all of which were older. They all had bilateral cataracts. My first thought was, "This can’t be related to Alzheimer’s disease."

But in fact, he said, it appeared to be. He and his lab soon showed that the cataracts contained a large concentration of aggregated beta-amyloid (Abeta) in the same fraction that’s measured in today’s cerebrospinal fluid Alzheimer’s biomarker tests.

That first observation has birthed two investigational noninvasive amyloid eye tests, which Dr. Goldstein envisions could some day be part of everyone’s annual physical exam.

In people destined to develop Alzheimer’s, some research suggests that Abeta proteins may begin to accumulate in the lens long before they build up to dangerous levels in the brain. If this turns out to be a reliable marker of risk, it could be a sign that would trigger early, presymptomatic Alzheimer’s treatment.

That’s in the future, though, because right now, there is no such treatment. But at this time, Dr. Goldstein said, an amyloid eye test could prove invaluable in reaching that goal. One reason that symptomatic patients don’t respond to investigational drugs could be that by the time the patients are treated, irreversible brain damage has already occurred.

"Once you have cognitive symptoms, the horse is not only out of the barn, it’s run out of the state," said Dr. Goldstein, director of the molecular aging and development laboratory at Boston University. "I hate the term ‘mild cognitive impairment,’ because by the time you have that, there’s nothing mild about it."

Researchers now almost universally agree that the best way to get a true picture of any drug’s potential effectiveness in Alzheimer’s will be to implement treatment before symptoms set in. In addition, Dr. Goldstein said, "Research pools are polluted. Control groups contain subjects who would develop Alzheimer’s if they live long enough," which could be skewing study results. Lens amyloid measurements might help stratify groups in drug studies, and even be a way to track very early effect on amyloid.

But that is a future yet to be determined. In the meantime, researchers still need definitive proof that supranuclear amyloid cataracts are inextricably linked to the amyloid brain plaques of Alzheimer’s.

Initial findings

In 2003, Dr. Goldstein, then at Harvard Medical School, published his original proof of concept study. It comprised postmortem eye and brain specimens from nine subjects with Alzheimer’s and eight controls without the disease, and samples of primary aqueous humor from three people without the disorder who were undergoing cataract surgery (Lancet 2003;361:1258-65).

Abeta-40 and Abeta-42 were present in all of the lenses, in amounts similar to those seen in the corresponding brains. But in patients with Alzheimer’s, the protein aggregated into clumps within the lens fiber cells, forming unusual supranuclear cataracts at the equatorial periphery that appeared to be different from common age-related cataracts and that weren’t present in the control subjects.

The cataract location is an important clue to how long the Abeta has been accumulating, Dr. Goldstein said. Lens fiber cells are particularly long lived, remaining alive for as long as a person lives or until the lens is removed during cataract surgery. The lens starts to form in very early fetal life, with more and more lens cells forming in an outward direction, creating a virtual map of a person’s lifetime, "like the rings of a tree," he said.

Dr. Goldstein and his team discovered that these distinctive cataracts develop in some patients with Alzheimer’s. They appear toward the outer edge of the lens and are composed of the same toxic Abeta protein that builds up in the brain. "The history of amyloid in the body is time stamped in the lens," he said.

As lens fiber cells age, they lose most of their organelles and become transparent – just the right state for a device meant to focus light. "They also make tons of Abeta," Dr. Goldstein said, and it appears to have a very specific function in the eye, one Dr. Rudolph Tanzi and his colleagues at Massachusetts General Hospital, Boston, identified in collaboration with Dr. Goldstein’s team (PLoS ONE 2010;5:e9505).

"It turns out to be a very potent antimicrobial peptide," one of several the eye and brain produce to defend themselves. Amyloid’s sticky nature causes foreign invaders to clump together, so they’re more easily destroyed. This finding also suggests that Abeta could have a similar function in the brain, supporting some theories that Alzheimer’s might be at least partially triggered by a hyperinflammatory response toward an invading pathogen or another immunoreactive incident.

Testing lenses in Down syndrome patients

Interesting as all of that is, it doesn’t prove the theory that the lens amyloid record somehow tracks Alzheimer’s development. But other studies do explore that concept, including one Dr. Goldstein published in 2010. In this study, Dr. Goldstein and his colleagues examined lens amyloid in people with Down syndrome, a group predestined to develop Alzheimer’s (PLoS ONE 2010;5:e10659). The genetic mutation that causes the syndrome also increases production of the amyloid precursor protein (APP), Abeta’s antecedent.

The lenses from subjects with Down syndrome, aged 2-69 years, were compared with lenses from control subjects and people with both familial and late-onset Alzheimer’s. "The 2-year-old with Down syndrome in this study actually had more lens amyloid than the adults with familial Alzheimer’s," Dr. Goldstein said. In unpublished data, he added, the protein has even been observed in Down syndrome fetal lenses.

He expanded on this work in a poster presented at the 2013 Alzheimer’s Association International Conference. Dr. Goldstein and his team have developed and validated a laser eye scanning instrument that noninvasively measures how light is reflected from the tiniest particles – in this case clumps of Abeta protein – within the lens of living human subjects.

"We hypothesize that due to the trisomy of chromosome 21 in Down syndrome (and triplication of the APP gene), which results in increased expression of Abeta in the lens, the intensity of scattered light in Down syndrome patients will be higher than [in] age-matched controls," he noted in the poster.

Not everyone agrees with this hypothesis, however. It has stirred controversy since he first introduced it, when, he said, "mainstream Alzheimer’s research simply didn’t believe it." In fact, at least two other research groups have come to quite different conclusions.

Dr. Charles Eberhart, a pathologist at Johns Hopkins University, Baltimore, published his data in the journal Brain Pathology (2013 June 28 [doi:10.1111/bpa.12070]). The study examined retinas, lenses, and brains from 11 patients with Alzheimer’s, 6 with Parkinson’s, and 6 age-matched controls. Eight eyes (five from Alzheimer’s patients and three from controls) did have cataracts. Dr. Eberhart and his colleagues used immunohistochemistry and Congo red staining to look for amyloid, phosphorylated tau, and alpha-synuclein.

"The short answer is – we didn’t find any amyloid deposits in the lens, or any abnormal tau accumulations," he said in an interview.

The study has two possible interpretations, he said: Either Abeta, tau, and synuclein don’t accumulate in Alzheimer’s eyes as they do in Alzheimer’s brains, or they are there, but simply not detected by his methods. "It certainly might be there. All we can say is that with this method, which is the accepted way of determining amyloid in brain tissue, we didn’t see it in eyes," he said.

The second study, conducted by Dr. Ralph Michael of the Universitat Autònoma de Barcelona and his colleagues, came to a similar conclusion (Exp. Eye Res. 2013;106:5-13). It involved 39 lenses and brains from 21 Alzheimer’s patients, and 15 lenses from age-matched controls. Six of the Alzheimer’s lenses and seven control lenses had cataracts. These investigators used staining methods similar to those in the Hopkins study.

"Beta-amyloid immunohistochemistry was positive in the brain tissues but not in the cornea sample," they wrote. "Lenses from control and AD [Alzheimer’s disease] donors were, without exception, negative after Congo red, thioflavin, and beta-amyloid immunohistochemical staining. ... The absence of staining in AD and control lenses with the techniques employed lead us to conclude that there is no beta-amyloid in lenses from donors with AD or in control cortical cataracts."

Dr. Goldstein said he doesn’t doubt these findings. Congo red staining yields a signal that is difficult to interpret, he said. Amyloid appears red under standard light microscopy but takes on a very characteristic shade, called apple green, under polarized light. "This is an old staining method that’s not very sensitive nor is it specific for Abeta – it’s also highly variable."

Technique is critical, he added. "It took us years to perfect our technique for the lens. It’s very difficult to work with lens, harder to work with old lens, and extremely hard to work with old, sick lens."

Instead of relying solely on Congo red or other staining techniques, Dr. Goldstein’s team confirmed their findings using a combination of biochemical analyses, immunogold electron microscopy, and two different types of mass spectrometry – methods he said are irrefutably accurate. "You can’t argue with this unless you are willing to argue with the very concept of mass spectrometry. It’s the gold standard," he said.

Confirmation in transgenic mice and Down syndrome patients strengthens the hypothesis, he said, as do the conclusions of his most recent paper. It looked at data from 1,249 people included in the Framingham Eye Study and found a genetic link between a specific type of midlife cataract (consistent with those previously found in Alzheimer’s) and later cognitive and brain structural changes associated with Alzheimer’s (PLoS ONE 2012;7:e43728).

The culprit appeared to be a mutation of a gene that codes for delta-catenin, which Dr. Goldstein postulated may normally help suppress Abeta production. The altered form, however, appears to affect neuronal structure and is instead associated with an increase in Abeta-42 production in cell culture. The malformed delta-catenin protein was also found throughout the lenses of study subjects with Alzheimer’s, but not in control lenses.

Screening patients in the future?

Dr. Goldstein said he envisions a future in which annual lens exams might guide risk assessment and treatment initiation. But physicians who might someday screen patients certainly won’t have a mass spectrometer in the back room.

He has invented two devices, he said, that will fill that need. The most recent is a laser scanning ophthalmoscope that uses dynamic light scattering to detect the tiniest amyloid particles in the lens – particles less than 30 nm. This is the device he’s using in the ongoing Boston University/Boston Children’s Hospital study of lens amyloid in children with Down syndrome.

The second device combines optical imaging with aftobetin, a fluorescent amyloid ligand. Dr. Goldstein holds a patent on this device, which he invented in partnership with Cognoptix (formerly Neuroptix), a company he cofounded in 2001. He is no longer operationally affiliated with the company.

Cognoptix has developed the SAPPHIRE II system, a combination drug/device that detects amyloid in the lens using aftobetin. The company licensed aftobetin from the University of California, San Diego. It’s formulated into an ophthalmic ointment administered prior to scanning with the SAPPHIRE II system. The procedure uses fluorescent ligand scanning to detect amyloid aggregates in the lens, said Paul Hartung, president and chief executive officer of the Acton, Mass., company.

"We use an eye-safe laser tuned to pick up the fluorescence. It doesn’t require dilation of the pupil, and it has the capability of actually registering itself in the correct location in the eye," he said in an interview.

SAPPHIRE II has had a busy year, including a proof-of-concept study published in May and reported at the Alzheimer’s Association International Conference. In this study, the system successfully differentiated five Alzheimer’s patients from five controls (Front. Neurol. 2013 May 27 [doi:10.3389/fneur.2013.00062]).

Cognoptix has begun a second study testing the system against PET amyloid brain imaging in 20 patients with probable Alzheimer’s and 20 controls, Mr. Hartung said.

A third planned study is a pivotal phase III trial that will enroll 400 subjects, all of whom will undergo both the eye exam and PET amyloid imaging. It’s designed to support premarket approval, Mr. Hartung said. Currently, SAPPHIRE II has an investigational device exemption from the Food and Drug Administration’s Center for Devices and Radiological Health.

"Our end goal is to get this into the general practitioner’s office, where about 40% of Alzheimer’s drug prescriptions are written by general practitioners who really have no data on hand. Right now, based on cognitive assessments, they have only a 50-50 chance of getting the right diagnosis," Mr. Hartung said.

Dr. Goldstein and Mr. Hartung hold financial interests in devices to measure lens amyloid. Dr. Ralph Michael listed no financial disclosures. Dr. Charles Eberhart said he had no relevant financial disclosures.

On Twitter @Alz_Gal

You never know what will happen when you look into a mouse’s eyes.

Twelve years ago, Dr. Lee Goldstein was investigating the effect of reactive oxygen species on the brain of a young Alzheimer’s model mouse. Holding the tiny creature in his hand, he carefully inserted a minuscule microdialysis probe through its skull. As he did, he happened to look right into the mouse’s face. And since he was doing some unrelated work on cataracts at the time, something unusual caught his very practiced gaze.

"The mouse had a cataract. I looked at the other eye, and there was a cataract there, too. That’s very unusual – really not ever seen – in a mouse this age."

Then he looked at all the other mice he was using in that experiment, all of which were older. They all had bilateral cataracts. "My first thought was, ‘This can’t be related to Alzheimer’s disease,’" he said.

But in fact, he said, it appeared to be. He and his lab soon showed that the cataracts contained a large concentration of aggregated beta-amyloid (Abeta) in the same fraction that’s measured in today’s cerebrospinal fluid Alzheimer’s biomarker tests.

That first observation has given rise to two investigational noninvasive amyloid eye tests, which Dr. Goldstein envisions could someday be part of everyone’s annual physical exam.

In people destined to develop Alzheimer’s, some research suggests that Abeta proteins may begin to accumulate in the lens long before they build up to dangerous levels in the brain. If this turns out to be a reliable marker of risk, it could be a sign that would trigger early, presymptomatic Alzheimer’s treatment.

That’s in the future, though, because right now there is no such treatment. Still, Dr. Goldstein said, an amyloid eye test could prove invaluable in reaching that goal. One reason that symptomatic patients don’t respond to investigational drugs could be that by the time they are treated, irreversible brain damage has already occurred.

"Once you have cognitive symptoms, the horse is not only out of the barn, it’s run out of the state," said Dr. Goldstein, director of the molecular aging and development laboratory at Boston University. "I hate the term ‘mild cognitive impairment,’ because by the time you have that, there’s nothing mild about it."

Researchers now almost universally agree that the best way to get a true picture of any drug’s potential effectiveness in Alzheimer’s will be to implement treatment before symptoms set in. In addition, Dr. Goldstein said, "Research pools are polluted. Control groups contain subjects who would develop Alzheimer’s if they live long enough," which could be skewing study results. Lens amyloid measurements might help stratify groups in drug studies and might even offer a way to track a drug’s very early effects on amyloid.

But that is a future yet to be determined. In the meantime, researchers still need definitive proof that supranuclear amyloid cataracts are inextricably linked to the amyloid brain plaques of Alzheimer’s.

Initial findings

In 2003, Dr. Goldstein, then at Harvard Medical School, published his original proof-of-concept study. It comprised postmortem eye and brain specimens from nine subjects with Alzheimer’s and eight controls without the disease, and samples of primary aqueous humor from three people without the disorder who were undergoing cataract surgery (Lancet 2003;361:1258-65).

Abeta-40 and Abeta-42 were present in all of the lenses, in amounts similar to those seen in the corresponding brains. But in patients with Alzheimer’s, the protein aggregated into clumps within the lens fiber cells, forming unusual supranuclear cataracts at the equatorial periphery that appeared to be different from common age-related cataracts and that weren’t present in the control subjects.

The cataract location is an important clue to how long the Abeta has been accumulating, Dr. Goldstein said. Lens fiber cells are particularly long-lived, remaining alive for as long as a person lives or until the lens is removed during cataract surgery. The lens starts to form in very early fetal life, with more and more lens cells forming in an outward direction, creating a virtual map of a person’s lifetime, "like the rings of a tree," he said.
