Methodological Progress Note: Group Level Assessment

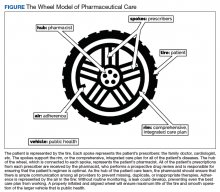

Group Level Assessment (GLA) is a qualitative research methodology designed to enable groups of stakeholders to generate and evaluate data in participatory sessions.1 It has been used in diverse health-related settings for multiple research purposes, including needs/resource assessment, program evaluation, quality improvement, intervention development, feasibility/acceptability testing, knowledge generation, and prioritization.2-6 Unlike traditional qualitative research methods in which participants provide data and researchers analyze it, GLA uses a seven-step structured process (Table) that actively involves a large group of stakeholders in the generation, interpretation, and synthesis of data and allows salient themes to be identified from stakeholders’ perspectives.7 GLA deliverables include a set of action items that are relevant to the target issue and representative of the collective view of stakeholders. In this issue of the Journal of Hospital Medicine, Choe and colleagues used GLA methodology to identify the perspectives of pediatric medical providers and interpreters with regard to the use of interpreter services for hospitalized children with limited English proficiency (LEP).8

Each individual GLA session is intended for a group of 15-60 stakeholders. Ideally, a GLA session is scheduled for approximately three hours with a skilled facilitator guiding the group through the steps of the session.1 Depending on the study scope and research questions, modifications to GLA can be made when engaging fewer stakeholders, conducting the GLA across several shorter sessions with the same group, or conducting multiple sessions with different stakeholder groups wherein results are integrated across the groups.1

APPLICATION OF GLA

Stakeholder Recruitment

GLAs are designed to bring diverse groups together to be able to generate and evaluate ideas collectively, which in turn helps to reduce potential power differentials between or among participants. Depending on the research question(s), relevant stakeholders may include local community residents, patients, caregivers, community leaders, practitioners, providers, community-based organizations, and even CEOs. The use of purposeful sampling techniques can obtain a diverse group of stakeholders, thus helping ensure a wide range of ideas and perspectives. Choe and colleagues used flyers and announcements at staff meetings to recruit physicians, nursing staff, and interpreters who were subsequently assigned to GLA sessions to ensure engagement from a range of stakeholder roles at each session.8

Session Logistics

Strategies to create an open, equitable atmosphere in GLA sessions include role-based assignment of individuals to specific groups, avoiding introductions that emphasize status, pre-education for any leaders and supervisors about the participatory and equitable nature of GLA, and minimizing cliques and overly dominant voices throughout the session. Stakeholders who take part in activities in a GLA session typically receive an incentive for participating. Additional supports such as food and childcare may be considered. GLA sessions involving children may require providing the young participants assistance in writing their responses and/or the use of additional facilitators to keep the small groups on track.5 Interpreters and additional facilitators can be incorporated into GLA sessions to help stakeholders understand and respond to prompts, for example through language interpretation and translation services.

Prompt Development

Similar to the development of questions for interview and focus group guides, creating effective prompts is a critical component of data collection in GLA. Prompts are statements worded as incomplete or fill-in-the-blank sentences that should be open ended to allow participants to respond with their own thoughts and experiences. Prompts that resemble the beginning of a sentence (eg, “The biggest challenge we face is…”) encourage honest reflection, whereas questions can make participants feel like they are being evaluated. We recommend varying the number of prompts based on the group size: approximately one chart and prompt per person attending, with a maximum of 35 prompts at one session.1 This allows for sufficient variability in the responses generated without being overwhelming or too time-consuming. For example, Choe et al. developed a pool of 51 unique prompts addressing their research questions and then used 15-32 prompts in each GLA session, depending on the number of participants.8 Prompts should be written with some purposeful redundancy, targeting the research question from several angles. The emphasis should be on the content’s alignment with the research questions rather than the actual wording of the prompts as a way of ensuring that the generated data are both valid and useful.
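The sizing guidance above (approximately one prompt per attendee, up to 35 per session) can be expressed as a short calculation. The following Python sketch is illustrative only: the function name `planned_prompt_count` and the constant name are hypothetical, and the text describes the rule as approximate, so the result is a starting point rather than a fixed requirement.

```python
# Illustrative sketch only; names are hypothetical and not part of the GLA literature.
MAX_PROMPTS_PER_SESSION = 35  # upper limit stated in the text


def planned_prompt_count(attendees: int,
                         max_prompts: int = MAX_PROMPTS_PER_SESSION) -> int:
    """Return roughly one prompt per attendee, capped at max_prompts."""
    if attendees < 1:
        raise ValueError("attendees must be a positive integer")
    return min(attendees, max_prompts)


if __name__ == "__main__":
    # Sessions are intended for 15-60 stakeholders, so try both ends of that range.
    for attendees in (15, 32, 60):
        print(attendees, "attendees ->", planned_prompt_count(attendees), "prompts")
```

Under this rule, any session with more than 35 attendees would still use 35 prompts, so larger groups share charts rather than receiving one each.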

Prompts should also vary in format, style (eg, different color markers, pictures, fonts, etc.), and placement on each flip chart page. An individual flip chart can include multiple related prompts: for example, “split-halves” in two columns or rows (ie, the best part/worst part). Taken as a whole, the flip charts and accompanying prompts create different lenses for gathering participant perspectives on the research questions. See Appendix Table for suggested prompt characteristics and examples from a hypothetical study related to pediatric healthcare.

GLA prompt development will ideally occur in collaboration with an advisory team composed of representative members from each of the stakeholder groups. Using a participatory research approach in the research design and preparation phases helps ensure that GLA prompts are understandable and relevant to participants and that they appropriately capture the underlying purpose of the study.

Description of the Seven Steps in GLA

In step one, climate setting, the facilitator provides an overview of the session, including a description of the GLA rationale and process. Typically, an icebreaker or brief introduction activity is conducted. Step two, generating, is a hallmark step of GLA in which participants walk around and respond to prompts prewritten on flip charts hung on walls in a large room. Participants use markers and respond to each prompt by either providing a unique comment and/or corroborating an existing comment by adding a checkmark or star. During this step, organizers typically play music and encourage participants to enjoy food, chat with fellow participants, and leisurely move from prompt to prompt in any order. Step three, appreciating, is a brief interim step where participants take a “gallery walk” and view responses written on the charts.

In step four, reflecting, participants reflect on the data and briefly write down their thoughts about the responses generated in the session. In step five, understanding, smaller groups synthesize responses across a subset of charts and report their findings to the larger group. Depending on the size and composition of the larger group, small groups of four to seven people are formed or assigned. Each small group is assigned a subset of approximately four to six charts. Using thematic analysis, participants look for relationships among the responses on their assigned charts, referring to individual responses as evidence for the main findings. Groups will take notes on the charts, circle key phrases, or draw arrows to show relationships in the data and thereafter develop themes. As each small group reports its findings, the facilitator will keep a running list of generated themes, ideally in the participants’ own words. Step six, selecting, involves participants discussing, further synthesizing, and prioritizing data. Step six can occur as a facilitated large group discussion, or participants can remain in the same small groups from step five and work together to complete this further step. Themes across all of the small groups are consolidated and developed into overarching themes. Step seven, action, includes planning the next steps to address priorities.
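For step five, the division of participants into small groups and the assignment of chart subsets can be sketched as follows. This is a minimal illustration under stated assumptions, not a prescribed procedure: the helper names `split_evenly` and `plan_small_groups` and the default target group size of five are hypothetical. In practice, facilitators may form groups by role or by other criteria rather than by list order.

```python
from math import ceil


def split_evenly(items, n_parts):
    """Split items into n_parts contiguous chunks whose sizes differ by at most one."""
    base, extra = divmod(len(items), n_parts)
    parts, start = [], 0
    for i in range(n_parts):
        size = base + (1 if i < extra else 0)
        parts.append(items[start:start + size])
        start += size
    return parts


def plan_small_groups(participants, charts, target_group_size=5):
    """Pair each small group with a subset of charts (hypothetical helper).

    The text suggests groups of four to seven people and roughly four to six
    charts per group; this sketch checks only the requested target size and
    does not enforce either range on the resulting groups.
    """
    if not 4 <= target_group_size <= 7:
        raise ValueError("the text suggests small groups of four to seven people")
    n_groups = max(1, ceil(len(participants) / target_group_size))
    groups = split_evenly(participants, n_groups)
    chart_sets = split_evenly(charts, n_groups)
    return list(zip(groups, chart_sets))


if __name__ == "__main__":
    people = [f"participant_{i}" for i in range(1, 24)]  # 23 hypothetical attendees
    charts = [f"chart_{i}" for i in range(1, 24)]        # one chart per attendee
    for members, chart_subset in plan_small_groups(people, charts):
        print(len(members), "people ->", len(chart_subset), "charts")
```

With very large sessions, the cap on prompts means each group may receive fewer than four charts, so the facilitator would need to adjust the group size or let groups share charts.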

Data Analysis

Analyzing the data generated through a GLA is an iterative process incorporated into steps three to seven as described above and often continues after the GLA session is complete. Step seven can be scheduled as a separate action-planning session depending on time constraints and the study goals. This final step moves the group toward interpretation and dissemination as themes are prioritized and used to drive action steps toward a programmatic, policy, or community change. In some studies, themes will be aggregated across multiple GLAs to integrate the findings from several sessions. This step is sometimes completed with a smaller group of stakeholders, an advisory board, or the research team.

Complementary Data and Synthesis

Research teams often collect additional sources of data (eg, demographic surveys) that are later used to analyze and interpret the initial stakeholder-developed findings and to identify priority areas. Field notes, photographs of completed charts, and recorded participant quotes can also be incorporated into the thematic analysis. Small and large group discussions could be audio recorded and transcribed to capture participants’ individual comments and interpretations. In Choe et al., the team recorded detailed notes, including quotations from participants, and collected a demographic survey. After each GLA session, Choe and colleagues compiled all of the stakeholder-driven findings to develop an overarching set of themes related to communication with LEP families and priority areas that could inform subsequent action. Similar to the qualitative validation strategy of member checking, the authors shared and revised this overarching set of themes in discussion with stakeholders to ensure that participant ideas were adequately and accurately represented.8

STRENGTHS OF GLA

Compared to traditional qualitative methods such as one-on-one interviews and focus groups, GLA is designed for large groups and is used to promote active engagement of diverse stakeholders in the participatory process. Unlike many other qualitative methods, GLA provides a stakeholder-driven, structured format to elicit diverse stakeholder viewpoints in the moment and build consensus in a participatory manner about priorities and subsequent actions. The progression of the GLA process is collaborative, with stakeholders generating, analyzing, and prioritizing data from their own perspectives. In a focus group or one-on-one interviews, researchers would conduct the analysis after the audio recordings were transcribed. In GLA, stakeholders conduct a thematic analysis in real time, an aspect that adds the stakeholder perspective to analysis of the findings, interpretation, and implications. GLA offers a fun and interactive experience that can build a sense of community among participants (eg, walking around, impromptu conversation, working in small groups, sharing perspectives on the same issue from different vantage points, etc.). GLA is a versatile, flexible methodology that can be used to address different research objectives, be modified for use with groups of various sizes, and be adapted based on the needs and characteristics of stakeholders (eg, children, people with disabilities, etc.).1 Recruitment for GLA is designed to include stakeholders representing different roles and levels of a system. GLA can be particularly useful when engaging underserved communities in research because the process is nonthreatening and promotes shared perspectives and decision-making. Importantly, the final step of GLA provides interested stakeholders with a way to stay involved in the research through prioritization and action.

LIMITATIONS OF GLA

Like other self-report research methods, GLA relies on stakeholder comfort and willingness to share “public data.”1 Thus, controversial or sensitive issues may not be brought forth. Since the final themes of GLA are consensus based in terms of what the group of stakeholders finds to be most important, nuances and outlier data can be missed. Successfully conducting a GLA requires a skilled, flexible facilitator who can manage group dynamics while also balancing the structure of the seven-step process, promoting an open and equitable environment, and ensuring the research process remains rigorous. Large groups can be more difficult for facilitators to manage, especially when there are power differentials, conflict, and hidden agendas among stakeholders. The large group design, multiple steps of GLA, and participatory atmosphere with music and food can be off-putting for some stakeholders who find the process too noisy, overwhelming, or unstructured. In addition, it can be challenging to schedule large groups and to find locations that are convenient for stakeholders.

WHY DID THE AUTHORS USE GLA?

Compared to researcher-driven qualitative methods that can be resource-intensive and are limited by researcher perspective, GLA emphasizes the contextual, “lived” expertise of stakeholders and relies on them in real time to identify and prioritize matters relevant to the participants. The participatory process of GLA promotes stakeholder buy-in and builds on the collective wisdom of the stakeholder group. This is ideally seen in Choe et al.’s study where GLA offered the researchers a structured qualitative methodology that engaged a large number of medical providers and interpreters to identify effective practices that should ultimately enhance communication with families of hospitalized LEP children.

Disclosures

The authors have nothing to disclose.

1. Vaughn LM, Lohmueller M. Calling all stakeholders: group-level assessment (GLA)—a qualitative and participatory method for large groups. Eval Rev. 2014;38(4):336-355. https://doi.org/10.1177/0193841X14544903

2. Gosdin CH, Vaughn L. Perceptions of physician bedside handoff with nurse and family involvement. Hosp Pediatr. 2012;2(1):34-38. https://doi.org/10.1542/hpeds.2011-0008-2

3. Graham KE, Schellinger AR, Vaughn LM. Developing strategies for positive change: transitioning foster youth to adulthood. Child Youth Serv Rev. 2015;54:71-79. https://doi.org/10.1016/j.childyouth.2015.04.014

4. Schondelmeyer AC, Jenkins AM, Allison B, et al. Factors influencing use of continuous physiologic monitors for hospitalized pediatric patients. Hosp Pediatr. 2019;9(6):423-428. https://doi.org/10.1542/hpeds.2019-0007

5. Vaughn LM, Jacquez F, Zhao J, Lang M. Partnering with students to explore the health needs of an ethnically diverse, low-resource school: an innovative large group assessment approach. Fam Community Health. 2011;34(1):72-84. https://doi.org/10.1097/FCH.0b013e3181fded12

6. Vaughn LM. Group level assessment: a large group method for identifying primary issues and needs within a community. Sage Journals. 2014;38:336-355. https://doi.org/10.4135/978144627305014541626

7. Vaughn LM. Psychology and culture: thinking, feeling and behaving in a global context. 2nd ed. New York, NY: Taylor & Francis; 2019.

8. Choe A, Unaka N, Schondelmeyer AC, Bignall RW, Vilvens H, Thomson J. Inpatient communication barriers and drivers when caring for children with limited English proficiency [published online ahead of print July 24, 2019]. J Hosp Med. https://doi.org/10.12788/jhm.3240

Group Level Assessment (GLA) is a qualitative research methodology designed to enable groups of stakeholders to generate and evaluate data in participatory sessions.1 It has been used in diverse health-related settings for multiple research purposes, including needs/resource assessment, program evaluation, quality improvement, intervention development, feasibility/acceptability testing, knowledge generation, and prioritization.2-6 Unlike traditional qualitative research methods in which participants provide data and researchers analyze it, GLA uses a seven-step structured process (Table) that actively involves a large group of stakeholders in the generation, interpretation, and synthesis of data and allows salient themes to be identified from stakeholders’ perspectives.7 GLA deliverables include a set of action items that are relevant to the target issue and representative of the collective view of stakeholders. In this issue of the Journal of Hospital Medicine, Choe and colleagues used GLA methodology to identify the perspectives of pediatric medical providers and interpreters with regard to the use of interpreter services for hospitalized children having limited English proficiency (LEP).8

Each individual GLA session is intended for a group of 15-60 stakeholders. Ideally, a GLA session is scheduled for approximately three hours with a skilled facilitator guiding the group through the steps of the session.1 Depending on the study scope and research questions, modifications to GLA can be made when engaging fewer stakeholders, conducting the GLA across several shorter sessions with the same group, or conducting multiple sessions with different stakeholder groups wherein results are integrated across the groups.1

APPLICATION OF GLA

Stakeholder Recruitment

GLAs are designed to bring diverse groups together to be able to generate and evaluate ideas collectively, which in turn helps to reduce potential power differentials between or among participants. Depending on the research question(s), relevant stakeholders may include local community residents, patients, caregivers, community leaders, practitioners, providers, community-based organizations, and even CEOs. The use of purposeful sampling techniques can obtain a diverse group of stakeholders, thus helping ensure a wide range of ideas and perspectives. Choe and colleagues used flyers and announcements at staff meetings to recruit physicians, nursing staff, and interpreters who were subsequently assigned to GLA sessions to ensure engagement from a range of stakeholder roles at each session.8

Session Logistics

Strategies to create an open, equitable atmosphere in GLA sessions include role-based assigning of individuals to specific groups, avoiding introductions that emphasize status, pre-education for any leaders and supervisors about the participatory and equitable nature of GLA, and minimizing cliques and overly dominant voices throughout the session. Stakeholders who take part in activities in a GLA session typically receive an incentive for participating. Additional supports such as food and childcare may be considered. GLA sessions involving children may require providing the young participants assistance in writing their responses and/or the use of additional facilitators to keep the small groups on track.5 Interpreters and facilitators can be incorporated into GLA sessions to assist stakeholders who may need assistance with understanding and responding to prompts, such as language interpretation and translation services.

Prompt Development

Similar to the development of questions for interview and focus group guides, creating effective prompts is a critical component of data collection in GLA. Prompts are statements worded as incomplete or fill-in-the-blank sentences that should be open ended to allow participants to respond with their own thoughts and experiences. Prompts that resemble the beginning of a sentence (eg, “The biggest challenge we face is…”) encourage honest reflection rather than questions that can make participants feel like they are being evaluated. We recommend varying the number of prompts based on the group size: approximately one chart and prompt per person attending, with a maximum of 35 prompts at one session.1 This allows for sufficient variability in the responses generated without being overwhelming or too time-consuming. For example, Choe et al. developed a pool of 51 unique prompts addressing their research questions and then used 15-32 prompts in each GLA session, depending on the number of participants. 8 Prompts should be written with some purposeful redundancy, targeting the research question from several angles. The emphasis should be on the content’s alignment with the research questions rather than the actual wording of the prompts as a way of ensuring that the generated data is both valid and useful.

Prompts should also vary in format, style (eg, different color markers, pictures, fonts, etc.), and placement on each flip chart page. An individual flip chart can include multiple related prompts: for example, “split-halves” in two columns or rows (ie, the best part/worst part). Taken as a whole, the flip charts and accompanying prompts create different lenses for gathering participant perspectives on the research questions. See Appendix Table for suggested prompt characteristics and examples from a hypothetical study related to pediatric healthcare.

GLA prompt development will ideally occur in collaboration with an advisory team comprised of representative members from each of the stakeholder groups. Using a participatory research approach in the research design and preparation phases ensures that GLA prompts are understandable and relevant to participants and are able to appropriately capture the underlying purpose of the study.

Description of the Seven Steps in GLA

In step one, climate setting, the facilitator provides an overview of the session, including a description of the GLA rationale and process. Typically, an icebreaker or brief introduction activity is conducted. Step two, generating, is a hallmark step of GLA in which participants walk around and respond to prompts prewritten on flip charts hung on walls in a large room. Participants use markers and respond to each prompt by either providing a unique comment and/or corroborating an existing comment by adding a checkmark or star. During this step, organizers typically play music and encourage participants to enjoy food, chat with fellow participants, and leisurely move from prompt to prompt in any order. Step three, appreciating, is a brief interim step where participants take a “gallery walk” and view responses written on the charts.

In step four, reflecting, participants reflect on the data and briefly write down their thoughts about the responses generated in the session. In step five, understanding, smaller groups synthesize responses across a subset of charts and report their findings to the larger group. Depending on the size and composition of the larger group, small groups of four to seven people are formed or assigned. Each small group is assigned a subset of approximately four to six charts. Using thematic analysis, participants look for relationships among the responses on their assigned charts, referring to individual responses as evidence for the main findings. Groups will take notes on the charts, circle key phrases, or draw arrows to show relationships in the data and thereafter develop themes. As each small group reports their findings, the facilitator will keep a running list of generated themes, ideally in the participants’ own words. Step six, selecting, involves participants discussing, further synthesizing, and prioritizing data. Step six can occur as a facilitated large group discussion or in a form in which participants can remain in the same small groups from step five and work together to complete this further step. Themes across all of the small groups are consolidated and developed into overarching themes. Step seven, action, includes planning the next steps to address priorities.

Data Analysis

Analyzing the data generated through a GLA is an iterative process incorporated into steps three to seven as described above and often continues after the GLA session is complete. Step seven can be scheduled as a separate action-planning session depending on time constraints and the study goals. This final step moves the group toward interpretation and dissemination as themes are prioritized and used to drive action steps toward a programmatic, policy, or community change. In some studies, themes will be aggregated across multiple GLAs to integrate the findings from several sessions. This step is sometimes completed with a smaller group of stakeholders, an advisory board, or the research team.

Complementary Data and Synthesis

Research teams often collect additional sources of data that are later used to analyze and interpret the initial stakeholder-developed findings (ie, demographic surveys) and to identify priority areas. Field notes, photographs of completed charts, and recorded participant quotes can also be incorporated into the thematic analysis. Small and large group discussions could be audio recorded and transcribed to capture participants’ individual comments and interpretations. In Choe et al. the team recorded detailed notes, including quotations from participants, and collected a demographic survey. After each GLA session, Choe and colleagues compiled all of the stakeholder-driven findings to develop an overarching set of themes related to communication with LEP families and priority areas that could inform subsequent action. Similar to the qualitative validation strategy of member checking, the authors shared and revised this overarching set of themes in discussion with stakeholders to ensure that participant ideas were adequately and accurately represented.8

STRENGTHS OF GLA

Compared to traditional qualitative methods such as one-on-one interviews and focus groups, GLA is designed for large groups and is used to promote active engagement of diverse stakeholders in the participatory process. Unlike many other qualitative methods, GLA provides a stakeholder-driven, structured format to elicit diverse stakeholder viewpoints in the moment and build consensus in a participatory manner about priorities and subsequent actions. The progression of the GLA process is collaborative, with stakeholders generating, analyzing, and prioritizing data from their own perspectives. In a focus group or one-on-one interviews, researchers would conduct the analysis after the audio recordings were transcribed. In GLA, stakeholders conduct a thematic analysis in real time, an aspect that adds the stakeholder perspective to analysis of the findings, interpretation, and implications. GLA offers a fun and interactive experience that can build a sense of community among participants (eg, walking around, impromptu conversation, working in small groups, sharing perspectives on the same issue from different vantage points, etc.). GLA is a versatile, flexible methodology that can be used to address different research objectives, be modified for use with various size groups, and be adapted based on the needs and characteristics of stakeholders (eg, children, people with disabilities, etc.).1 When used in recruitment, GLA is designed to include stakeholders representing different roles and levels of a system. GLA can be particularly useful when engaging underserved communities in research because the process is nonthreatening and promotive of shared perspectives and decision-making. Importantly, the final step of GLA provides interested stakeholders with a way to stay involved in the research through prioritization and action.

LIMITATIONS OF GLA

Like other self-report research methods, GLA relies on stakeholder comfort and willingness to share “public data.”1 Thus, controversial or sensitive issues may not be brought forth. Since the final themes of GLA are consensus based in terms of what the group of stakeholders finds to be most important, nuances and outlier data can be missed. Successfully conducting a GLA requires a skilled, flexible facilitator who can manage group dynamics while also balancing the structure of the seven-step process, promoting an open and equitable environment, and ensuring the research process remains rigorous. Large groups can be more difficult for facilitators to manage especially when there are power differentials, conflict, and hidden agendas among stakeholders. The large group design, multiple steps of GLA, and participatory atmosphere with music and food can be off-putting for some stakeholders who find the process too noisy, overwhelming, or unstructured. In addition, large groups can be challenging to schedule at times and to find locations that are convenient for stakeholders.

WHY DID THE AUTHORS USE GLA?

Compared to researcher-driven qualitative methods that can be resource-intensive and are limited by researcher perspective, GLA emphasizes the contextual, “lived” expertise of stakeholders and relies on them in real time to identify and prioritize matters relevant to the participants. The participatory process of GLA promotes stakeholder buy-in and builds on the collective wisdom of the stakeholder group. This is ideally seen in Choe et al.’s study where GLA offered the researchers a structured qualitative methodology that engaged a large number of medical providers and interpreters to identify effective practices that should ultimately enhance communication with families of hospitalized LEP children.

Disclosures

The authors have nothing to disclose.

Group Level Assessment (GLA) is a qualitative research methodology designed to enable groups of stakeholders to generate and evaluate data in participatory sessions.1 It has been used in diverse health-related settings for multiple research purposes, including needs/resource assessment, program evaluation, quality improvement, intervention development, feasibility/acceptability testing, knowledge generation, and prioritization.2-6 Unlike traditional qualitative research methods in which participants provide data and researchers analyze it, GLA uses a seven-step structured process (Table) that actively involves a large group of stakeholders in the generation, interpretation, and synthesis of data and allows salient themes to be identified from stakeholders’ perspectives.7 GLA deliverables include a set of action items that are relevant to the target issue and representative of the collective view of stakeholders. In this issue of the Journal of Hospital Medicine, Choe and colleagues used GLA methodology to identify the perspectives of pediatric medical providers and interpreters with regard to the use of interpreter services for hospitalized children having limited English proficiency (LEP).8

Each individual GLA session is intended for a group of 15-60 stakeholders. Ideally, a GLA session is scheduled for approximately three hours with a skilled facilitator guiding the group through the steps of the session.1 Depending on the study scope and research questions, modifications to GLA can be made when engaging fewer stakeholders, conducting the GLA across several shorter sessions with the same group, or conducting multiple sessions with different stakeholder groups wherein results are integrated across the groups.1

APPLICATION OF GLA

Stakeholder Recruitment

GLAs are designed to bring diverse groups together to be able to generate and evaluate ideas collectively, which in turn helps to reduce potential power differentials between or among participants. Depending on the research question(s), relevant stakeholders may include local community residents, patients, caregivers, community leaders, practitioners, providers, community-based organizations, and even CEOs. The use of purposeful sampling techniques can obtain a diverse group of stakeholders, thus helping ensure a wide range of ideas and perspectives. Choe and colleagues used flyers and announcements at staff meetings to recruit physicians, nursing staff, and interpreters who were subsequently assigned to GLA sessions to ensure engagement from a range of stakeholder roles at each session.8

Session Logistics

Strategies to create an open, equitable atmosphere in GLA sessions include role-based assigning of individuals to specific groups, avoiding introductions that emphasize status, pre-education for any leaders and supervisors about the participatory and equitable nature of GLA, and minimizing cliques and overly dominant voices throughout the session. Stakeholders who take part in activities in a GLA session typically receive an incentive for participating. Additional supports such as food and childcare may be considered. GLA sessions involving children may require providing the young participants assistance in writing their responses and/or the use of additional facilitators to keep the small groups on track.5 Interpreters and facilitators can be incorporated into GLA sessions to assist stakeholders who may need assistance with understanding and responding to prompts, such as language interpretation and translation services.

Prompt Development

Similar to the development of questions for interview and focus group guides, creating effective prompts is a critical component of data collection in GLA. Prompts are statements worded as incomplete or fill-in-the-blank sentences that should be open ended to allow participants to respond with their own thoughts and experiences. Prompts that resemble the beginning of a sentence (eg, “The biggest challenge we face is…”) encourage honest reflection, whereas direct questions can make participants feel like they are being evaluated. We recommend varying the number of prompts based on the group size: approximately one chart and prompt per person attending, with a maximum of 35 prompts at one session.1 This allows for sufficient variability in the responses generated without being overwhelming or too time-consuming. For example, Choe et al. developed a pool of 51 unique prompts addressing their research questions and then used 15-32 prompts in each GLA session, depending on the number of participants.8 Prompts should be written with some purposeful redundancy, targeting the research question from several angles. To ensure that the generated data are both valid and useful, the emphasis should be on how well the content aligns with the research questions rather than on the exact wording of the prompts.

Prompts should also vary in format, style (eg, different color markers, pictures, fonts, etc.), and placement on each flip chart page. An individual flip chart can include multiple related prompts: for example, “split-halves” in two columns or rows (ie, the best part/worst part). Taken as a whole, the flip charts and accompanying prompts create different lenses for gathering participant perspectives on the research questions. See Appendix Table for suggested prompt characteristics and examples from a hypothetical study related to pediatric healthcare.

GLA prompt development will ideally occur in collaboration with an advisory team composed of representative members from each of the stakeholder groups. Using a participatory research approach in the research design and preparation phases helps ensure that GLA prompts are understandable and relevant to participants and that they appropriately capture the underlying purpose of the study.

Description of the Seven Steps in GLA

In step one, climate setting, the facilitator provides an overview of the session, including a description of the GLA rationale and process. Typically, an icebreaker or brief introduction activity is conducted. Step two, generating, is a hallmark step of GLA in which participants walk around and respond to prompts prewritten on flip charts hung on walls in a large room. Participants use markers and respond to each prompt by either providing a unique comment and/or corroborating an existing comment by adding a checkmark or star. During this step, organizers typically play music and encourage participants to enjoy food, chat with fellow participants, and leisurely move from prompt to prompt in any order. Step three, appreciating, is a brief interim step where participants take a “gallery walk” and view responses written on the charts.

In step four, reflecting, participants reflect on the data and briefly write down their thoughts about the responses generated in the session. In step five, understanding, smaller groups synthesize responses across a subset of charts and report their findings to the larger group. Depending on the size and composition of the larger group, small groups of four to seven people are formed or assigned. Each small group is assigned a subset of approximately four to six charts. Using thematic analysis, participants look for relationships among the responses on their assigned charts, referring to individual responses as evidence for the main findings. Groups take notes on the charts, circle key phrases, or draw arrows to show relationships in the data and then develop themes. As each small group reports its findings, the facilitator keeps a running list of generated themes, ideally in the participants’ own words. Step six, selecting, involves participants discussing, further synthesizing, and prioritizing data. Step six can occur as a facilitated large-group discussion, or participants can remain in their small groups from step five and complete this step together. Themes across all of the small groups are consolidated and developed into overarching themes. Step seven, action, includes planning the next steps to address priorities.
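The sizing guidance for a session (roughly one prompt per attendee up to a maximum of 35, small groups of four to seven people, and about four to six charts per group) can be turned into a rough planning aid. The following is a minimal sketch rather than part of the published GLA method: the names `plan_session` and `SessionPlan`, and the choice to form the fewest groups that keep each group at seven people or fewer, are illustrative assumptions.

```python
import math
from dataclasses import dataclass
from typing import List

MAX_PROMPTS = 35      # upper limit on prompts per session stated in the text
MAX_GROUP_SIZE = 7    # upper end of the "four to seven people" range


@dataclass
class SessionPlan:
    prompts: int                 # number of flip charts/prompts to prepare
    groups: int                  # number of small groups for step five
    charts_per_group: List[int]  # charts assigned to each small group


def plan_session(participants: int) -> SessionPlan:
    """Suggest prompt and small-group counts for one GLA session (hypothetical helper)."""
    if participants < 1:
        raise ValueError("participants must be at least 1")
    # About one prompt per attendee, capped at 35.
    prompts = min(participants, MAX_PROMPTS)
    # Assumption: use the fewest groups that keep every group at <= 7 people.
    groups = max(1, math.ceil(participants / MAX_GROUP_SIZE))
    # Spread the charts as evenly as possible across the groups.
    base, extra = divmod(prompts, groups)
    charts = [base + 1 if i < extra else base for i in range(groups)]
    return SessionPlan(prompts, groups, charts)


if __name__ == "__main__":
    print(plan_session(30))
```

The stated ranges are approximate and cannot always be satisfied together for very large sessions, so the output is a starting point for the facilitator's judgment rather than a rule.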

Data Analysis

Analyzing the data generated through a GLA is an iterative process incorporated into steps three to seven as described above and often continues after the GLA session is complete. Step seven can be scheduled as a separate action-planning session depending on time constraints and the study goals. This final step moves the group toward interpretation and dissemination as themes are prioritized and used to drive action steps toward a programmatic, policy, or community change. In some studies, themes will be aggregated across multiple GLAs to integrate the findings from several sessions. This step is sometimes completed with a smaller group of stakeholders, an advisory board, or the research team.

Complementary Data and Synthesis

Research teams often collect additional sources of data (eg, demographic surveys) that are later used to analyze and interpret the initial stakeholder-developed findings and to identify priority areas. Field notes, photographs of completed charts, and recorded participant quotes can also be incorporated into the thematic analysis. Small and large group discussions can be audio recorded and transcribed to capture participants’ individual comments and interpretations. In Choe et al., the team recorded detailed notes, including quotations from participants, and collected a demographic survey. After each GLA session, Choe and colleagues compiled all of the stakeholder-driven findings to develop an overarching set of themes related to communication with LEP families and priority areas that could inform subsequent action. Similar to the qualitative validation strategy of member checking, the authors shared and revised this overarching set of themes in discussion with stakeholders to ensure that participant ideas were adequately and accurately represented.8

STRENGTHS OF GLA

Compared to traditional qualitative methods such as one-on-one interviews and focus groups, GLA is designed for large groups and is used to promote active engagement of diverse stakeholders in the participatory process. Unlike many other qualitative methods, GLA provides a stakeholder-driven, structured format to elicit diverse stakeholder viewpoints in the moment and build consensus in a participatory manner about priorities and subsequent actions. The progression of the GLA process is collaborative, with stakeholders generating, analyzing, and prioritizing data from their own perspectives. In a focus group or one-on-one interviews, researchers would conduct the analysis after the audio recordings were transcribed. In GLA, stakeholders conduct a thematic analysis in real time, an aspect that adds the stakeholder perspective to analysis of the findings, interpretation, and implications. GLA offers a fun and interactive experience that can build a sense of community among participants (eg, walking around, impromptu conversation, working in small groups, sharing perspectives on the same issue from different vantage points, etc.). GLA is a versatile, flexible methodology that can be used to address different research objectives, be modified for use with groups of various sizes, and be adapted based on the needs and characteristics of stakeholders (eg, children, people with disabilities, etc.).1 In its approach to recruitment, GLA is designed to include stakeholders representing different roles and levels of a system. GLA can be particularly useful when engaging underserved communities in research because the process is nonthreatening and promotes shared perspectives and decision-making. Importantly, the final step of GLA provides interested stakeholders with a way to stay involved in the research through prioritization and action.

LIMITATIONS OF GLA

Like other self-report research methods, GLA relies on stakeholder comfort and willingness to share “public data.”1 Thus, controversial or sensitive issues may not be brought forth. Since the final themes of GLA are consensus based in terms of what the group of stakeholders finds to be most important, nuances and outlier data can be missed. Successfully conducting a GLA requires a skilled, flexible facilitator who can manage group dynamics while also balancing the structure of the seven-step process, promoting an open and equitable environment, and ensuring the research process remains rigorous. Large groups can be more difficult for facilitators to manage, especially when there are power differentials, conflict, and hidden agendas among stakeholders. The large group design, multiple steps of GLA, and participatory atmosphere with music and food can be off-putting for some stakeholders who find the process too noisy, overwhelming, or unstructured. In addition, it can be challenging to schedule large groups and to find locations that are convenient for stakeholders.

WHY DID THE AUTHORS USE GLA?

Compared to researcher-driven qualitative methods that can be resource-intensive and are limited by researcher perspective, GLA emphasizes the contextual, “lived” expertise of stakeholders and relies on them in real time to identify and prioritize matters relevant to the participants. The participatory process of GLA promotes stakeholder buy-in and builds on the collective wisdom of the stakeholder group. This is well illustrated by Choe et al.’s study, in which GLA offered the researchers a structured qualitative methodology that engaged a large number of medical providers and interpreters to identify effective practices that should ultimately enhance communication with families of hospitalized LEP children.

Disclosures

The authors have nothing to disclose.

1. Vaughn LM, Lohmueller M. Calling all stakeholders: group-level assessment (GLA)—a qualitative and participatory method for large groups. Eval Rev. 2014;38(4):336-355. https://doi.org/10.1177/0193841X14544903.

2. Gosdin CH, Vaughn L. Perceptions of physician bedside handoff with nurse and family involvement. Hosp Pediatr. 2012;2(1):34-38. https://doi.org/10.1542/hpeds.2011-0008-2

3. Graham KE, Schellinger AR, Vaughn LM. Developing strategies for positive change: transitioning foster youth to adulthood. Child Youth Serv Rev. 2015;54:71-79. https://doi.org/10.1016/j.childyouth.2015.04.014

4. Schondelmeyer AC, Jenkins AM, Allison B, et al. Factors influencing use of continuous physiologic monitors for hospitalized pediatric patients. Hosp Pediatr. 2019;9(6):423-428. https://doi.org/10.1542/hpeds.2019-0007

5. Vaughn LM, Jacquez F, Zhao J, Lang M. Partnering with students to explore the health needs of an ethnically diverse, low-resource school: an innovative large group assessment approach. Fam Community Health. 2011;34(1):72-84. https://doi.org/10.1097/FCH.0b013e3181fded12

6. Vaughn LM. Group level assessment: a large group method for identifying primary issues and needs within a community. Sage Journals. 2014;38:336-355. https://doi.org/10.4135/978144627305014541626

7. Vaughn LM. Psychology and culture: thinking, feeling and behaving in a global context. 2nd ed. New York, NY: Taylor & Francis; 2019.

8. Choe A, Unaka N, Schondelmeyer AC, Bignall RW, Vilvens H, Thomson J. Inpatient communication barriers and drivers when caring for children with limited English proficiency [published online ahead of print July 24, 2019]. J Hosp Med. https://doi.org/10.12788/jhm.3240.

© 2019 Society of Hospital Medicine

Clinical Progress Note: Pediatric Acute Kidney Injury

Acute kidney injury (AKI) occurs in 5%-30% of noncritically ill hospitalized children.1 Initially thought to be simply a symptom of more severe pathologies, AKI is now recognized to independently increase mortality and to be associated with the development of chronic kidney disease (CKD), even in children.2 The wide acceptance of the Kidney Disease: Improving Global Outcomes (KDIGO) diagnostic criteria has enabled a more uniform definition of AKI from both clinical and research perspectives.2 A better understanding of the pathophysiology and risk factors for AKI has led to new methods for early detection and prevention. While serum creatinine (SCr) was historically one of the few markers of AKI, novel biomarkers can facilitate earlier diagnosis of AKI, identify subclinical AKI, and guide clinical management. This clinical practice update addresses the latest clinical advances in risk assessment, diagnosis, and prevention of pediatric AKI, with a focus on AKI biomarkers.

DIAGNOSIS, BIOMARKERS, AND DEFINITION

Several sets of criteria have been used to diagnose AKI. The KDIGO classification, based on a systematic review of the literature and developed through expert consensus, is the current recommended definition.3 Increasing AKI stage, as defined by the KDIGO classification, is associated with increased mortality, need for renal replacement therapy, length of stay, and risk of CKD, thus underscoring the importance of accurate classification.3 Stage 1 AKI is defined by a rise in SCr of ≥0.3 mg/dL or to 1.5-1.9 times the baseline SCr, or urine output <0.5 mL/kg/h for six to 12 hours; stage 2 by a rise in SCr to 2.0-2.9 times the baseline or urine output <0.5 mL/kg/h for >12 hours; and stage 3 by a rise in SCr to ≥4.0 mg/dL or to ≥3.0 times the baseline, initiation of renal replacement therapy, urine output <0.3 mL/kg/h for ≥24 hours, or anuria for ≥12 hours. However, these criteria rely on SCr, which is a suboptimal marker of renal dysfunction, as it rises only once the glomerular filtration rate (GFR) has already decreased, in some cases by as much as 50%. Additionally, interpretation of SCr in the diagnosis of AKI requires a prior SCr measurement to determine the magnitude of change from the baseline value, which is often lacking in children. To mitigate this limitation, different formulas exist to estimate a baseline SCr value based on height or age, an approach that assumes patients have preexisting normal renal function.
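The staging thresholds can be read as a simple decision rule in which the overall stage is the higher of the SCr-based and urine-output-based stages. The sketch below is illustrative only and is not a clinical tool. The function names are hypothetical. It assumes a known baseline SCr, it reads the 4.0 mg/dL criterion as SCr reaching ≥4.0 mg/dL in a patient who already meets an AKI criterion (the usual KDIGO reading), and it ignores the time windows and pediatric-specific criteria of the full guideline.

```python
from typing import Optional


def scr_stage(baseline_scr: float, current_scr: float, on_rrt: bool = False) -> int:
    """Stage AKI from serum creatinine (mg/dL); returns 0 when no criterion is met."""
    if baseline_scr <= 0:
        raise ValueError("baseline_scr must be positive")
    ratio = current_scr / baseline_scr
    # Round to avoid floating-point artifacts such as 0.7 - 0.4 < 0.3.
    rise = round(current_scr - baseline_scr, 2)
    meets_aki = ratio >= 1.5 or rise >= 0.3
    # Assumption: the 4.0 mg/dL criterion applies only after an acute rise.
    if on_rrt or ratio >= 3.0 or (meets_aki and current_scr >= 4.0):
        return 3
    if ratio >= 2.0:
        return 2
    if meets_aki:
        return 1
    return 0


def urine_stage(rate_ml_kg_h: Optional[float], hours: float = 0.0) -> int:
    """Stage AKI from urine output sustained at or below a rate for `hours`."""
    if rate_ml_kg_h is None:
        return 0
    if (rate_ml_kg_h < 0.3 and hours >= 24) or (rate_ml_kg_h == 0 and hours >= 12):
        return 3
    if rate_ml_kg_h < 0.5 and hours > 12:
        return 2
    if rate_ml_kg_h < 0.5 and hours >= 6:
        return 1
    return 0


def kdigo_stage(baseline_scr: float, current_scr: float,
                rate_ml_kg_h: Optional[float] = None, hours: float = 0.0,
                on_rrt: bool = False) -> int:
    """Overall stage is the worse of the creatinine and urine-output stages."""
    return max(scr_stage(baseline_scr, current_scr, on_rrt),
               urine_stage(rate_ml_kg_h, hours))


if __name__ == "__main__":
    # SCr doubling from 0.5 to 1.0 mg/dL with normal urine output
    print(kdigo_stage(0.5, 1.0))
```

Taking the maximum of the two component stages reflects that a patient is staged by whichever criterion is most severe.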

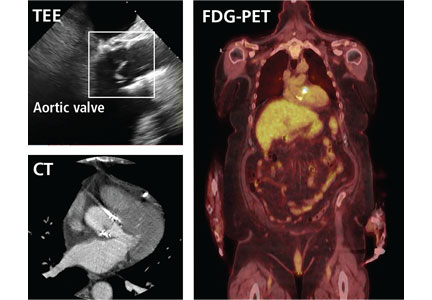

The limitations of SCr have led to interest in identifying more accurate biomarkers of AKI. Although many candidates have been identified, we will limit our discussion to those currently available for clinical use: serum cystatin C, urine neutrophil gelatinase-associated lipocalin (NGAL), urine tissue inhibitor of metalloproteinase-2 (TIMP-2), and urine insulin-like growth factor-binding protein 7 (IGFBP7) (Table).4-8 While urine NGAL and cystatin C are measured individually, TIMP-2 and IGFBP7 are measured on the same panel, and the product of their values is used for clinical guidance. While each of these biomarkers has good predictive accuracy for AKI when used independently, their combined use increases the accuracy of AKI diagnosis. These biomarkers can be divided into broad categories based on their utility as either functional markers or markers of injury.6 Serum cystatin C is a functional marker and as such can be used to estimate GFR more accurately than SCr.9 In contrast, urine NGAL is a marker of renal injury, while TIMP-2 and IGFBP7 are markers of renal stress. These injury and stress markers are not useful in estimating GFR, but rather aid in the prediction and diagnosis of AKI (Figure). Despite the limitations of SCr, these biomarkers have yet to be incorporated into the diagnostic criteria. They have, however, helped to refine our understanding of the pathophysiology of AKI.

AKI has classically been divided into three categories based on the etiology of injury, namely prerenal azotemia, intrinsic renal disease, and postrenal causes. The discovery of new biomarkers adds nuance to the classification of AKI. Two groups of biomarkers are particularly helpful in this regard: markers of structural injury (eg, NGAL) and functional markers (eg, cystatin C). The combination of these biomarkers with SCr has refined the categories of AKI (Figure). For example, NGAL can accurately distinguish between a rise in SCr due to functional AKI, previously referred to as prerenal azotemia, and a rise in SCr due to intrinsic kidney injury. An elevation of structural injury biomarkers in the absence of a significant rise in SCr is referred to as subclinical AKI. Patients with subclinical AKI have worse outcomes than those without AKI but better outcomes than patients with AKI with elevation of both SCr and NGAL (Figure).2,6 Time to resolution of AKI further refines our ability to predict prognosis and outcomes. Transient AKI, defined as resolution within 48 hours, is associated with a better prognosis than persistent AKI. Renal dysfunction lasting more than seven days but less than 90 days is referred to as acute kidney disease (AKD). While both transient AKI and AKD represent different entities on the continuum between AKI and CKD, further research is needed to better elucidate these classifications.2
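The refined categories described in this paragraph amount to two small lookups: one on the combination of SCr and a structural injury marker such as NGAL, and one on the time to resolution. The following is a minimal sketch of that logic with hypothetical function names. What counts as "elevated" is left to the caller because the text gives no cutoffs, and the label for durations of 90 days or more is an assumption, since the text only defines the window up to 90 days.

```python
def classify_by_biomarkers(scr_elevated: bool, injury_marker_elevated: bool) -> str:
    """Combine SCr with a structural injury marker (eg, NGAL)."""
    if scr_elevated and injury_marker_elevated:
        return "AKI with structural injury"   # both SCr and NGAL elevated
    if scr_elevated:
        return "functional AKI"               # previously "prerenal azotemia"
    if injury_marker_elevated:
        return "subclinical AKI"              # injury without a significant SCr rise
    return "no AKI"


def classify_by_duration(days_to_resolution: float) -> str:
    """Classify renal dysfunction by how long it lasts."""
    if days_to_resolution < 0:
        raise ValueError("days_to_resolution must be non-negative")
    if days_to_resolution <= 2:       # resolution within 48 hours
        return "transient AKI"
    if days_to_resolution <= 7:
        return "persistent AKI"
    if days_to_resolution < 90:       # more than 7 but less than 90 days
        return "acute kidney disease (AKD)"
    # Assumption: the text does not label this range; shown only for completeness.
    return "beyond the AKD window"


if __name__ == "__main__":
    print(classify_by_biomarkers(scr_elevated=False, injury_marker_elevated=True))
    print(classify_by_duration(10))
```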

RISK STRATIFICATION

The renal angina index (RAI) identifies critically ill children at high risk for AKI. The RAI combines traditional markers of AKI, such as a change in estimated creatinine clearance and fluid overload, with patient factors, including need for ventilation, inotropic support, and history of transplantation (solid organ or bone marrow), to identify those patients who are at high risk for severe AKI. Patients identified as high risk by the patient factors component of the RAI have a much lower threshold for both a decrease in creatinine clearance and fluid overload to be considered at risk for severe AKI, as these early signs are more likely to reflect an impending severe AKI in this high-risk group. Conversely, patients who do not meet these patient factors are more likely to simply have a transient or functional AKI and therefore have a higher threshold for both a change in creatinine clearance and fluid overload in order to be considered at high risk for severe AKI.

The RAI has been validated in the critical care setting as a method to predict severe AKI at day three of admission to the pediatric intensive care unit, with a negative predictive value of 92%-99% when the score is negative in the first 12 hours.10 In selected high-risk patients (RAI ≥ 8), biomarkers become even more reliable for AKI prediction (eg, injury markers have an excellent area under the receiver operating characteristic curve (AUC) of 0.97 for severe AKI prediction in this high-risk group).11 While only validated for critically ill patients, the concept of renal angina is still applicable in the complex populations managed by hospitalists who practice outside of the intensive care unit setting. Early signs of renal dysfunction (eg, rising SCr, fluid overload ≥5%) in patients with risk factors (see below) should prompt a thorough evaluation, including urinalysis, daily SCr, nephrotoxin avoidance, and tissue injury biomarkers, if available.

The risk factors for AKI are numerous and tend to potentiate one another. The most frequent predisposing comorbidities include CKD, heart failure or congenital heart diseases, transplantation (bone marrow or solid organs), and diabetes. Disease-related factors include sepsis, cardiac surgery, cardiopulmonary bypass, mechanical ventilation, and vasopressor use. Potentially modifiable factors include hypovolemia and multiple nephrotoxic exposures.2,3

Nephrotoxic medications are now among the most common causes of AKI in hospitalized children.12 Approximately 80% of children are exposed to at least one nephrotoxin during an inpatient admission.12 Exposure to a single nephrotoxic medication is sufficient to place a child at risk of AKI, and each additional nephrotoxin further increases the risk.12 While some drugs are routinely recognized to be nephrotoxic (eg, ibuprofen), others are commonly overlooked, notably certain antibiotics (eg, cefotaxime, ceftazidime, cefuroxime, nafcillin, and piperacillin) and anticonvulsants (eg, zonisamide).12 Furthermore, the combination of multiple nephrotoxins can potentiate the risk of AKI. For example, the combination of vancomycin and piperacillin/tazobactam increases the risk of AKI by 3.4 times compared with the combination of vancomycin with another antipseudomonal beta-lactam antibiotic.13

Adequate monitoring, including daily SCr measurements and risk awareness, is critical, as nephrotoxin-associated AKI can be easily missed in the absence of routine SCr monitoring, especially since these children are typically nonoliguric.12 Quality improvement efforts focused on obtaining daily SCr in patients exposed to either three or more nephrotoxins or three days of either aminoglycoside or vancomycin, even without concomitant exposure to other nephrotoxins, have shown success in decreasing both the number of nephrotoxin exposures and the rate of nephrotoxin-associated AKI.12

While a significant injury cannot always be avoided, a mindful clinical approach and management can help to prevent some complications of AKI. An awareness of fluid status is critical, as fluid overload greater than 10% of the patient’s weight independently increases the risk of mortality in both adults and children.14 To assess the risk of AKI progression and potential failure of conservative management with diuretics, a furosemide stress test (FST) is an easy, safe, and accessible functional assessment of tubular reserve in a patient without intravascular depletion.15 A growing body of literature in adults shows that FST-responders are less likely to progress to stage 3 AKI or need renal replacement therapy than nonresponders.15 The FST is currently being investigated and standardized in children.
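The fluid overload thresholds mentioned in the text (≥5% as an early sign in at-risk patients and >10% as associated with increased mortality) presuppose a way of expressing fluid balance as a percentage of body weight. The article does not give a formula. The sketch below assumes the commonly used definition, cumulative fluid in minus fluid out (in liters) divided by admission weight (in kilograms), times 100. The function names are hypothetical, and the code is illustrative rather than a clinical tool.

```python
def fluid_overload_percent(intake_l: float, output_l: float,
                           admission_weight_kg: float) -> float:
    """Percent fluid overload = 100 * (fluid in - fluid out) / admission weight.

    Assumption: cumulative intake and output in liters, weight in kilograms
    (1 L of fluid is treated as roughly 1 kg).
    """
    if admission_weight_kg <= 0:
        raise ValueError("admission_weight_kg must be positive")
    return 100.0 * (intake_l - output_l) / admission_weight_kg


def interpret_fluid_overload(percent: float) -> str:
    """Map a percentage onto the two thresholds mentioned in the text."""
    if percent > 10:
        return "above 10%: associated with increased mortality"
    if percent >= 5:
        return "5% or more: early sign warranting evaluation in at-risk patients"
    return "below the thresholds mentioned in the text"


if __name__ == "__main__":
    # Hypothetical 10 kg child with 2.5 L in and 1.0 L out since admission
    pct = fluid_overload_percent(2.5, 1.0, 10.0)
    print(pct, interpret_fluid_overload(pct))
```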

CONCLUSION

Research in AKI has made significant strides over the last few years. Nevertheless, many areas of research remain to be explored (eg, the impact of IV fluid type in the pediatric population, AKD characterization and impact on CKD development). AKI is common, associated with significant morbidity and mortality and, in some instances, preventable. While no targeted therapeutic options are currently under investigation, recent advances allow for better identification of high-risk patients and offer opportunities for impactful preventive approaches. Thoughtful use of nephrotoxic medications, early identification of patients at high risk for AKI, and accurate diagnosis and appropriate management of AKI are the recommended best practice.

Disclosures

The authors have nothing to disclose.

1. McGregor TL, Jones DP, Wang L, et al. Acute kidney injury incidence in noncritically ill hospitalized children, adolescents, and young adults: a retrospective observational study. Am J Kidney Dis. 2016;67(3):384-390. https://doi.org/10.1053/j.ajkd.2015.07.019.

2. Chawla LS, Bellomo R, Bihorac A, et al. Acute kidney disease and renal recovery: consensus report of the Acute Disease Quality Initiative (ADQI) 16 Workgroup. Nat Rev Nephrol. 2017;13(4):241-257. https://doi.org/10.1038/nrneph.2017.2.

3. Khwaja A. KDIGO clinical practice guidelines for acute kidney injury. Nephron Clin Pract. 2012;120(4):179-184. https://doi.org/10.1159/000339789.

4. Filho LT, Grande AJ, Colonetti T, Della ÉSP, da Rosa MI. Accuracy of neutrophil gelatinase-associated lipocalin for acute kidney injury diagnosis in children: systematic review and meta-analysis. Pediatr Nephrol. 2017;32(10):1979-1988. https://doi.org/10.1007/s00467-017-3704-6.

5. Levey AS, Inker LA. Assessment of glomerular filtration rate in health and disease: a state of the art review. Clin Pharmacol Ther. 2017;102(3):405-419. https://doi.org/10.1002/cpt.729.

6. Endre ZH, Kellum JA, Di Somma S, et al. Differential diagnosis of AKI in clinical practice by functional and damage biomarkers: workgroup statements from the tenth Acute Dialysis Quality Initiative Consensus Conference. Contrib Nephrol. 2013;182:30-44. https://doi.org/10.1159/000349964.

7. Su LJ, Li YM, Kellum JA, Peng ZY. Predictive value of cell cycle arrest biomarkers for cardiac surgery-associated acute kidney injury: a meta-analysis. Br J Anaesth. 2018;121(2):350-357. https://doi.org/10.1016/j.bja.2018.02.069.

8. Westhoff JH, Tönshoff B, Waldherr S, et al. Urinary tissue inhibitor of metalloproteinase-2 (TIMP-2) · insulin-like growth factor-binding protein 7 (IGFBP7) predicts adverse outcome in pediatric acute kidney injury. PLoS One. 2015;10(11):1-16. https://doi.org/10.1371/journal.pone.0143628.

9. Berg UB, Nyman U, Bäck R, et al. New standardized cystatin C and creatinine GFR equations in children validated with inulin clearance. Pediatr Nephrol. 2015;30(8):1317-1326. https://doi.org/10.1007/s00467-015-3060-3.

10. Chawla LS, Goldstein SL, Kellum JA, Ronco C. Renal angina: concept and development of pretest probability assessment in acute kidney injury. Crit Care. 2015;19(1):93. https://doi.org/10.1186/s13054-015-0779-y.

11. Menon S, Goldstein SL, Mottes T, et al. Urinary biomarker incorporation into the renal angina index early in intensive care unit admission optimizes acute kidney injury prediction in critically ill children: a prospective cohort study. Nephrol Dial Transplant. 2016;31(4):586-594. https://doi.org/10.1093/ndt/gfv457.

12. Goldstein SL, Mottes T, Simpson K, et al. A sustained quality improvement program reduces nephrotoxic medication-associated acute kidney injury. Kidney Int. 2016;90(1):212-221. https://doi.org/10.1016/j.kint.2016.03.031.

13. Downes KJ, Cowden C, Laskin BL, et al. Association of acute kidney injury with concomitant vancomycin and piperacillin/tazobactam treatment among hospitalized children. JAMA Pediatr. 2017;19146:e173219-e173219. https://doi.org/10.1001/JAMAPEDIATRICS.2017.3219.

14. Naipaul A, Jefferson LS, Goldstein SL, Loftis LL, Zappitelli M, Arikan AA. Fluid overload is associated with impaired oxygenation and morbidity in critically ill children*. Pediatr Crit Care Med. 2011;13(3):253-258. https://doi.org/10.1097/pcc.0b013e31822882a3.

15. Lumlertgul N, Peerapornratana S, Trakarnvanich T, et al. Early versus standard initiation of renal replacement therapy in furosemide stress test non-responsive acute kidney injury patients (the FST trial). Crit Care. 2018;22(1):1-9. https://doi.org/10.1186/s13054-018-2021-1.

Acute kidney injury (AKI) occurs in 5%-30% of noncritically ill hospitalized children.1 Initially thought to be simply a symptom of more severe pathologies, it is now recognized that AKI independently increases mortality and is associated with the development of chronic kidney disease (CKD), even in children.2 The wide acceptance of the Kidney Disease Improving Global Outcome (KDIGO) diagnostic criteria has enabled a more uniform definition of AKI from both clinical and research perspectives.2 A better understanding of the pathophysiology and risk factors for AKI has led to new methods for early detection and prevention efforts. While serum creatinine (SCr) was historically one of the sole markers of AKI, novel biomarkers can facilitate earlier diagnosis of AKI, identify subclinical AKI, and guide clinical management. This clinical practice update addresses the latest clinical advances in risk assessment, diagnosis, and prevention of pediatric AKI, with a focus on AKI biomarkers.

DIAGNOSIS, BIOMARKERS, AND DEFINITION

Several sets of criteria have been used to diagnose AKI. The KDIGO classification, based on a systematic review of the literature and developed through expert consensus, is the current recommended definition.3 Increasing AKI stage, as defined by the KDIGO classification, is associated with increased mortality, the need for renal replacement therapy, length of stay, and CKD, thus underscoring the importance of accurate classification.3 Stage 1 AKI is defined by a rise in SCr of ≥0.3 mg/dL,1.5-1.9 times the baseline SCr, or urine output <0.5 ml/kg/h for six to 12 hours; stage 2 by a rise of ≥2.0-2.9 times the baseline SCr or urine output <0.5 ml/kg/h for >12 hours; and stage 3 by a rise of ≥4.0 mg/dL, ≥three times the baseline SCr, initiation of renal replacement therapy, urine output <0.3 ml/kg/h for ≥24 hours, or anuria ≥12 hours. However, these criteria rely on SCr, which is a suboptimal marker of renal dysfunction, as it rises only once the glomerular filtration rate (GFR) has already decreased, in some cases by as much as 50%. Additionally, interpretation of SCr in the diagnosis of AKI requires a prior Scr measurement to determine the magnitude of change from the baseline value, which is often lacking in children. To mitigate this limitation, different formulas exist to estimate a baseline SCr value based on height or age, an approach that assumes patients have preexisting normal renal function.

The limitations of SCr have led to interest in identifying more accurate biomarkers of AKI. Although many candidates have been identified, we will limit our discussion to those currently available for clinical use: serum cystatin C, urine neutrophil gelatinase-associated lipocalin (NGAL), urine TIMP-2, and urine IGFBP7 (Table).4-8 While urine NGAL and cystatin C are measured individually, TIMP-2 and IGFBP7 are measured on the same panel and the product of their multiplied values is used for clinical guidance. While each of these biomarkers have good predictive accuracy for AKI when used independently, their combined use increases the accuracy of AKI diagnosis. These biomarkers can be divided into broad categories based on their utility as either functional markers or markers of injury.6 Serum cystatin C is a functional marker and as such can be used to estimate GFR more accurately than SCr.9 Comparatively, urine NGAL is a marker of renal injury, while TIMP2 and IGFBP7 are markers of renal stress. These markers are not useful in estimating GFR, but rather aid in the prediction and diagnosis of AKI (Figure). Despite the limitations of SCr, these biomarkers have yet to be incorporated into the diagnostic criteria. They have, however, helped to refine our understanding of the pathophysiology of AKI.

AKI has classically been divided into three categories based on the etiology of injury, namely prerenal azotemia, intrinsic renal disease, and postrenal causes. The discovery of new biomarkers adds nuance to the classification of AKI. Two groups of biomarkers are particularly helpful in this regard: markers of structural injury (eg, NGAL) and functional markers (eg, cystatin C). The combination of these biomarkers with SCr has refined the categories of AKI (Figure). For example, NGAL can accurately distinguish between a rise in SCr due to functional AKI, previously referred to as prerenal azotemia, and a rise in SCr due to intrinsic kidney injury. An elevation of structural injury biomarkers in the absence of a significant rise in SCr is referred to as subclinical AKI. Patients with subclinical AKI have worse outcomes than those without AKI but better outcomes than patients with AKI with elevation of both SCr and NGAL (Figure).2,6 Time to resolution of AKI further refines our ability to predict prognosis and outcomes. Transient AKI, defined as resolution within 48 hours, is associated with a better prognosis than persistent AKI. Renal dysfunction lasting more than seven days but less than 90 days is referred to as acute kidney disease (AKD). While both transient AKI and AKD represent different entities on the continuum between AKI and CKD, further research is needed to better elucidate these classifications.2

RISK STRATIFICATION

The renal angina index (RAI) identifies critically ill children at high risk for AKI. The RAI combines traditional markers of AKI, such as a change in estimated creatinine clearance and fluid overload, with patient factors, including need for ventilation, inotropic support, and history of transplantation (solid organ or bone marrow), to identify those patients who are at high risk for severe AKI. Patients identified as high risk by the patient factors component of the RAI have a much lower threshold for both a decrease in creatinine clearance and fluid overload to be considered at risk for severe AKI, as these early signs are more likely to reflect impending severe AKI in this high-risk group. Conversely, patients who do not meet these patient factors are more likely to have a transient or functional AKI and therefore have a higher threshold for both a change in creatinine clearance and fluid overload to be considered at high risk for severe AKI.

The RAI has been validated in the critical care setting as a method to predict severe AKI at day three of admission to the pediatric intensive care unit, with a negative predictive value of 92%-99% when the score is negative in the first 12 hours.10 In selected high-risk patients (RAI ≥ 8), biomarkers become even more reliable for AKI prediction (eg, injury markers have an excellent area under the receiver operating characteristic curve (AUC) of 0.97 for severe AKI prediction in this high-risk group).11 While only validated for critically ill patients, the concept of renal angina is still applicable in the complex populations managed by hospitalists who practice outside of the intensive care unit setting. Early signs of renal dysfunction (eg, rising SCr, fluid overload ≥5%) in patients with risk factors (see below) should prompt a thorough evaluation, including urinalysis, daily SCr, nephrotoxin avoidance, and tissue injury biomarkers, if available.

The risk factors for AKI are numerous and tend to potentiate one another. The most frequent predisposing comorbidities include CKD, heart failure or congenital heart disease, transplantation (bone marrow or solid organ), and diabetes. Disease-related factors include sepsis, cardiac surgery, cardiopulmonary bypass, mechanical ventilation, and vasopressor use. Potentially modifiable factors include hypovolemia and multiple nephrotoxic exposures.2,3

Nephrotoxic medications are now among the most common causes of AKI in hospitalized children.12 Approximately 80% of children are exposed to at least one nephrotoxin during an inpatient admission.12 Exposure to a single nephrotoxic medication is sufficient to place a child at risk of AKI, and each additional nephrotoxin further increases the risk.12 While some drugs are routinely recognized to be nephrotoxic (eg, ibuprofen), others are commonly overlooked, notably certain antibiotics (eg, cefotaxime, ceftazidime, cefuroxime, nafcillin, and piperacillin) and anticonvulsants (eg, zonisamide).12 Furthermore, the combination of multiple nephrotoxins can potentiate the risk of AKI. For example, the combination of vancomycin and piperacillin/tazobactam increases the risk of AKI by 3.4 times compared with the combination of vancomycin with another antipseudomonal beta-lactam antibiotic.13

Adequate monitoring, including daily SCr measurements and risk awareness, is critical, as nephrotoxin-associated AKI can be easily missed in the absence of routine SCr monitoring, especially since these children are typically nonoliguric.12 Quality improvement efforts focused on obtaining daily SCr in patients exposed to either three or more nephrotoxins or three days of either aminoglycoside or vancomycin, even without concomitant exposure to other nephrotoxins, have shown success in decreasing both the number of nephrotoxin exposures and the rate of nephrotoxin-associated AKI.12
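
The exposure rule used in those quality improvement efforts can be written as a single screening check. The sketch below is illustrative: the function and parameter names are hypothetical, and it assumes that "three days" means three or more days of therapy and that the medication counts have already been extracted from the chart.

```python
def needs_daily_scr(active_nephrotoxins: int,
                    aminoglycoside_days: int = 0,
                    vancomycin_days: int = 0) -> bool:
    """Flag patients who meet the nephrotoxin exposure trigger for daily SCr.

    Trigger (as described in the text): three or more nephrotoxins, or
    three days of either an aminoglycoside or vancomycin, even without
    concomitant exposure to other nephrotoxins.
    """
    high_count = active_nephrotoxins >= 3
    prolonged_course = aminoglycoside_days >= 3 or vancomycin_days >= 3
    return high_count or prolonged_course


if __name__ == "__main__":
    # A child on vancomycin alone for 3 days meets the trigger
    print(needs_daily_scr(active_nephrotoxins=1, vancomycin_days=3))
```

Because these children are typically nonoliguric, a rule of this kind relies on exposure rather than urine output to prompt SCr monitoring.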

While a significant injury cannot always be avoided, a mindful clinical approach and management can help to prevent some complications of AKI. An awareness of fluid status is critical, as fluid overload greater than 10% of the patient’s weight independently increases the risk of mortality in both adults and children.14 To assess the risk of AKI progression and potential failure of conservative management with diuretics, a furosemide stress test (FST) is an easy, safe, and accessible functional assessment of tubular reserve in a patient without intravascular depletion.15 A growing body of literature in adults shows that FST-responders are less likely to progress to stage 3 AKI or need renal replacement therapy than nonresponders.15 The FST is currently being investigated and standardized in children.
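
Fluid overload is usually expressed as a percentage of body weight. The text does not give the formula, so the sketch below assumes a commonly used definition: cumulative fluid intake minus output (in liters) divided by admission weight (in kilograms), multiplied by 100. The function names are hypothetical; the thresholds are the two mentioned in this article (≥5% as an early sign in patients with risk factors, and >10% as the level associated with increased mortality).

```python
def fluid_overload_percent(intake_l: float, output_l: float,
                           admission_weight_kg: float) -> float:
    """Percent fluid overload, assuming (intake - output) / admission weight x 100."""
    if admission_weight_kg <= 0:
        raise ValueError("admission weight must be positive")
    return 100.0 * (intake_l - output_l) / admission_weight_kg


def fluid_overload_flag(percent_fo: float) -> str:
    """Map percent fluid overload onto the thresholds cited in the article."""
    if percent_fo > 10.0:
        return "above 10%: associated with increased mortality"
    if percent_fo >= 5.0:
        return "5% or more: early sign in patients with risk factors"
    return "below 5%"


if __name__ == "__main__":
    # Example: 20 kg child, 3.0 L in and 1.5 L out since admission
    fo = fluid_overload_percent(3.0, 1.5, 20.0)
    print(round(fo, 1), "->", fluid_overload_flag(fo))
```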

CONCLUSION

Research in AKI has made significant strides over the last few years. Nevertheless, many areas of research remain to be explored (eg, the impact of IV fluid type in the pediatric population, AKD characterization and impact on CKD development). AKI is common, associated with significant morbidity and mortality, and, in some instances, preventable. While no targeted therapeutic options are currently under investigation, recent advances allow for better identification of high-risk patients and offer opportunities for impactful preventive approaches. Thoughtful use of nephrotoxic medications, early identification of patients at high risk for AKI, and accurate diagnosis and appropriate management of AKI are recommended best practices.

Disclosures

The authors have nothing to disclose.

Acute kidney injury (AKI) occurs in 5%-30% of noncritically ill hospitalized children.1 Initially thought to be simply a symptom of more severe pathologies, AKI is now recognized to independently increase mortality and to be associated with the development of chronic kidney disease (CKD), even in children.2 The wide acceptance of the Kidney Disease: Improving Global Outcomes (KDIGO) diagnostic criteria has enabled a more uniform definition of AKI from both clinical and research perspectives.2 A better understanding of the pathophysiology and risk factors for AKI has led to new methods for early detection and prevention. While serum creatinine (SCr) was historically one of the few markers of AKI, novel biomarkers can facilitate earlier diagnosis of AKI, identify subclinical AKI, and guide clinical management. This clinical practice update addresses the latest clinical advances in risk assessment, diagnosis, and prevention of pediatric AKI, with a focus on AKI biomarkers.

DIAGNOSIS, BIOMARKERS, AND DEFINITION

Several sets of criteria have been used to diagnose AKI. The KDIGO classification, based on a systematic review of the literature and developed through expert consensus, is the current recommended definition.3 Increasing AKI stage, as defined by the KDIGO classification, is associated with increased mortality, need for renal replacement therapy, length of stay, and CKD, thus underscoring the importance of accurate classification.3 Stage 1 AKI is defined by a rise in SCr of ≥0.3 mg/dL, a rise to 1.5-1.9 times the baseline SCr, or urine output <0.5 mL/kg/h for six to 12 hours; stage 2 by a rise to 2.0-2.9 times the baseline SCr or urine output <0.5 mL/kg/h for >12 hours; and stage 3 by a rise in SCr to ≥4.0 mg/dL, a rise to ≥3.0 times the baseline SCr, initiation of renal replacement therapy, urine output <0.3 mL/kg/h for ≥24 hours, or anuria for ≥12 hours. However, these criteria rely on SCr, which is a suboptimal marker of renal dysfunction, as it rises only once the glomerular filtration rate (GFR) has already decreased, in some cases by as much as 50%. Additionally, interpretation of SCr in the diagnosis of AKI requires a prior SCr measurement to determine the magnitude of change from the baseline value, which is often lacking in children. To mitigate this limitation, different formulas exist to estimate a baseline SCr value based on height or age, an approach that assumes patients have preexisting normal renal function.
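
The staging thresholds listed above can be sketched as a small function. This is an illustrative sketch, not a validated implementation: the function and parameter names are hypothetical, the time windows over which SCr changes are measured are omitted because the text does not state them, and requiring some rise from baseline before applying the ≥4.0 mg/dL criterion is an assumption made here to avoid staging a stable chronically elevated SCr as AKI.

```python
from typing import Optional


def kdigo_stage(scr: float, baseline_scr: float,
                uo_ml_kg_h: Optional[float] = None,
                uo_hours: float = 0.0,
                anuria_hours: float = 0.0,
                on_rrt: bool = False) -> int:
    """Return 0 (no AKI) or AKI stage 1-3 using the thresholds in the text.

    scr, baseline_scr: serum creatinine in mg/dL.
    uo_ml_kg_h: average urine output over the last `uo_hours` hours, if known.
    """
    if baseline_scr <= 0:
        raise ValueError("baseline SCr must be positive")
    ratio = scr / baseline_scr
    rise = round(scr - baseline_scr, 2)  # rounding avoids float noise near 0.3
    has_uo = uo_ml_kg_h is not None

    # Stage 3: any one criterion is sufficient
    if (on_rrt
            or (scr >= 4.0 and rise >= 0.3)  # assumption: requires a rise
            or ratio >= 3.0
            or anuria_hours >= 12
            or (has_uo and uo_ml_kg_h < 0.3 and uo_hours >= 24)):
        return 3
    # Stage 2: 2.0-2.9 times baseline, or low urine output for >12 hours
    if ratio >= 2.0 or (has_uo and uo_ml_kg_h < 0.5 and uo_hours > 12):
        return 2
    # Stage 1: rise >=0.3 mg/dL, 1.5-1.9 times baseline, or low output for 6-12 hours
    if rise >= 0.3 or ratio >= 1.5 or (has_uo and uo_ml_kg_h < 0.5 and uo_hours >= 6):
        return 1
    return 0


if __name__ == "__main__":
    # SCr 1.0 mg/dL against a baseline of 0.4 mg/dL is 2.5 times baseline
    print(kdigo_stage(scr=1.0, baseline_scr=0.4))
```

Checking the stages from highest to lowest means that a patient who meets criteria for more than one stage is assigned the most severe one.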
