The Journal of Clinical Outcomes Management® is an independent, peer-reviewed journal offering evidence-based, practical information for improving the quality, safety, and value of health care.

Hospitalists and PCPs crave greater communication

Hospitalists and PCPs want more dialogue while patients are in the hospital in order to coordinate and personalize care, according to data collected at Beth Israel Deaconess Medical Center, Boston. The results were presented at the annual meeting of the Society of General Internal Medicine.

“I think a major takeaway is that both hospitalists and primary care doctors agree that it’s important for primary care doctors to be involved in a patient’s hospitalization. They both identified a value that PCPs can bring to the table,” coresearcher Kristen Flint, MD, a primary care resident, told this news organization.

A majority in both camps reported that communication with the other party occurred in less than 25% of cases, whereas ideally it would happen half of the time. Dr. Flint noted that communication tools differ among hospitals, limiting the applicability of the findings.

The research team surveyed 39 hospitalists and 28 PCPs employed by the medical center during the first half of 2021. They also interviewed six hospitalists as they admitted and discharged patients.

The hospitalist movement, which took hold in response to the cost and efficiency demands of managed care, gave rise to inpatient specialists, reducing the need for PCPs to commute between their offices and the hospital to care for patients in both settings.

Primary care involvement is important during hospitalization

In the Beth Israel Deaconess survey, four out of five hospitalists and three-quarters of PCPs agreed that primary care involvement is still important during hospitalization, most critically during discharge and admission. Hospitalists reported that PCPs provide valuable data about a patient’s medical status, social supports, mental health, and goals for care. They also said having such data helps to boost patient trust and improve the quality of inpatient care.

“Most projects around communication between inpatient and outpatient doctors have really focused on the time of discharge,” when clinicians identify what care a patient will need after they leave the hospital, Dr. Flint said. “But we found that both sides felt increased communication at time of admission would also be beneficial.”

The biggest barrier for PCPs, cited by 82% of respondents, was lack of time. Hospitalists’ top impediment was being unable to find contact information for the other party, which was cited by 79% of these survey participants.

Hospitalists operate ‘in a very stressful environment’

The Beth Israel Deaconess research “documents what has largely been suspected,” said primary care general internist Allan Goroll, MD.

Dr. Goroll, a professor of medicine at Harvard Medical School, Boston, said in an interview that hospitalists operate “in a very stressful environment.”

“They [hospitalists] appreciate accurate information about a patient’s recent medical history, test results, and responses to treatment as well as a briefing on patient values and preferences, family dynamics, and priorities for the admission. It makes for a safer, more personalized, and more efficient hospital admission,” said Dr. Goroll, who was not involved in the research.

In a 2015 article in the New England Journal of Medicine, Dr. Goroll and Daniel Hunt, MD, director of hospital medicine at Emory University, Atlanta, proposed a collaborative model in which PCPs visit hospitalized patients and serve as consultants to inpatient staff. Dr. Goroll said Massachusetts General Hospital in Boston, where he practices, initiated a study of that approach, but it was interrupted by the pandemic.

“As limited time is the most often cited barrier to communication, future interventions such as asynchronous forms of communication between the two groups should be considered,” the researchers wrote in the NEJM perspective.

To narrow the gap, Beth Israel Deaconess will study converting an admission notification letter sent to PCPs into a two-way communication tool in which PCPs can insert patient information, Dr. Flint said.

Dr. Flint and Dr. Goroll have disclosed no relevant financial relationships.

A version of this article first appeared on Medscape.com.

FROM SGIM 2022

DIAMOND: Adding patiromer helps optimize HF meds, foils hyperkalemia

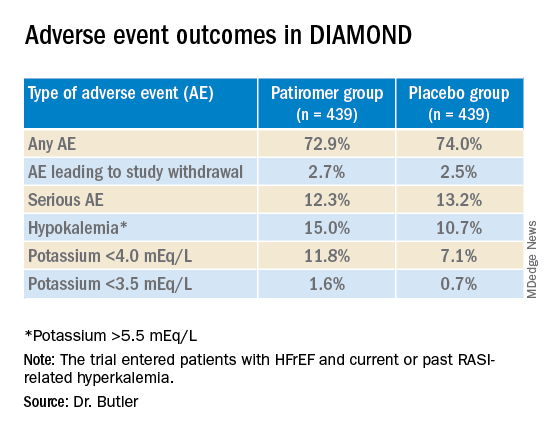

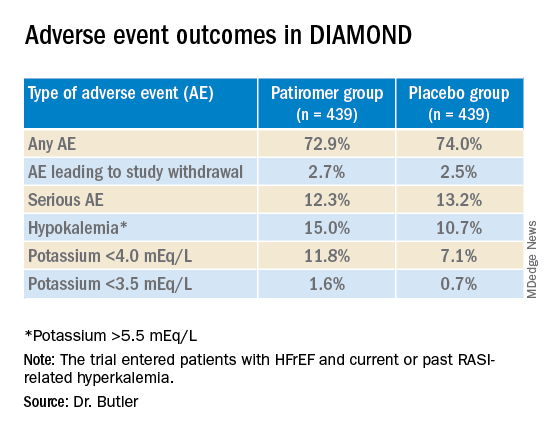

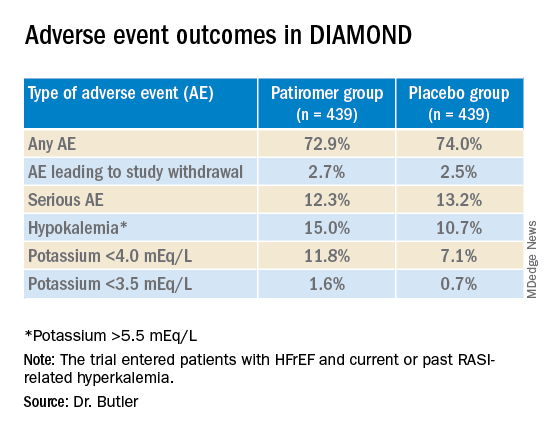

Several of the core medications for patients with heart failure with reduced ejection fraction (HFrEF) come with a well-known risk of causing hyperkalemia, to which many clinicians respond by pulling back on dosing or withdrawing the culprit drug.

But accompanying renin-angiotensin system–inhibiting (RASI) agents with the potassium-sequestrant patiromer (Veltassa, Vifor Pharma) appears to shield patients against hyperkalemia enough that they can take more RASI medications at higher doses, a randomized, controlled study suggests.

The DIAMOND trial’s HFrEF patients, who had current or past RASI-related hyperkalemia, added either patiromer or placebo to their guideline-directed medical therapy (GDMT), which includes, even emphasizes, the culprit medications. These include ACE inhibitors, angiotensin-receptor blockers (ARBs), angiotensin-receptor/neprilysin inhibitors (ARNIs), and mineralocorticoid receptor antagonists (MRAs).

Those taking patiromer tolerated more intense RASI therapy – including MRAs, which are especially prone to causing hyperkalemia – than the patients assigned to placebo. They also maintained lower potassium concentrations and experienced fewer clinically important hyperkalemia episodes, reported Javed Butler, MD, MPH, MBA, Baylor Scott and White Research Institute, Dallas, at the annual scientific sessions of the American College of Cardiology.

The apparent benefit from patiromer came in part from an advantage for a composite hyperkalemia-event endpoint that included mortality, Dr. Butler noted. That advantage seemed to hold regardless of age, sex, body mass index, HFrEF symptom severity, or initial natriuretic peptide levels.

Patients who took patiromer, compared with those who took placebo, showed a 37% reduction in risk for hyperkalemia (P = .006), defined as potassium levels exceeding 5.5 mEq/L, over a median follow-up of 27 weeks. They were 38% less likely to have their MRA dosage reduced to below target level (P = .006).

More patients in the patiromer group than in the control group attained at least 50% of target dosage for MRAs and ACE inhibitors, ARBs, or ARNIs (92% vs. 87%; P = .015).

Patients with HFrEF are unlikely to achieve best possible outcomes without GDMT optimization, but failure to optimize is often attributed to hyperkalemia concerns. DIAMOND, Dr. Butler said, suggests that, by adding the potassium sequestrant to GDMT, “you can simultaneously control potassium and optimize RASI therapy.” Many clinicians seem to believe they can achieve only one or the other.

DIAMOND was too underpowered to show whether preventing hyperkalemia with patiromer could improve clinical outcomes. But failure to optimize RASI medication in HFrEF can worsen risk for heart failure events and death. So “it stands to reason that optimization of RASI therapy without a concomitant risk of hyperkalemia may, in the long run, lead to better outcomes for these patients,” Dr. Butler said in an interview.

Given the drug’s ability to keep potassium levels in check during RASI therapy, Dr. Butler said, “hyperkalemia should not be a reason for suboptimal therapy.”

Patiromer and other potassium sequestrants have been available in the United States and Europe for 4-6 years, but their value as adjuncts to RASI medication in HFrEF or other heart failure has been unclear.

“There’s a good opportunity to expand the use of the drug. The question is, in whom and when?” James L. Januzzi, MD, Massachusetts General Hospital, Boston, said in an interview.

Some HFrEF patients on GDMT “should be treated with patiromer. The bigger question is, should we give someone who has a history of hyperkalemia another chance at GDMT before we treat them with patiromer? Because they may not necessarily develop hyperkalemia a second time,” said Dr. Januzzi, who was on the DIAMOND endpoint-adjudication committee.

Among the most notable findings of the trial is that the number of people who developed hyperkalemia on RASI medication, although significantly elevated, “wasn’t as high as they expected it would be,” he said. “The data from DIAMOND argue that if a really significant majority does not become hyperkalemic on rechallenge, jumping straight to a potassium-binding drug may be premature.”

Physicians across specialties can differ in how they interpret potassium-level elevation and can use various cut points to flag when to stop RASI medication or at least hold back on up-titration, Dr. Butler observed. “Cardiologists have a different threshold of potassium that they tolerate than say, for instance, a nephrologist.”

Useful, then, might be a way to tell which patients are most likely to develop hyperkalemia with RASI up-titration and so might benefit from a potassium-binding agent right away. But DIAMOND, Dr. Butler said, “does not necessarily define any patient phenotype or any potassium level where we would say that you should use a potassium binder.”

The trial enrolled 1,642 patients with HFrEF and current or past RASI-related hyperkalemia in a 12-week run-in phase for optimization of GDMT with patiromer. It was conducted at nearly 400 centers in 21 countries.

RASI medication could be optimized in 85% of the cohort, from which 878 patients were randomly assigned either to continue optimized GDMT with patiromer or to have the potassium sequestrant replaced with a placebo.

The patients on patiromer showed a 0.03-mEq/L mean rise in serum potassium levels from randomization to the end of the study, the primary endpoint, compared with a 0.13-mEq/L mean increase for those in the control group (P < .001), Dr. Butler reported.

The win ratio for a RASI-use score hierarchically featuring cardiovascular death and CV hospitalization for hyperkalemia at several levels of severity was 1.25 (95% confidence interval, 1.003-1.564; P = .048), favoring the patiromer group. The win ratio solely for hyperkalemia-related events also favored patients on patiromer, at 1.53 (95% CI, 1.23-1.91; P < .001).

Patiromer also seemed well tolerated, Dr. Butler said.

Hyperkalemia is “one of the most common excuses” from clinicians for failing to up-titrate RASI medicine in patients with heart failure, Dr. Januzzi said. DIAMOND was less about patiromer itself than about ways “to facilitate better GDMT, where we’re really falling short of the mark. During the run-in phase they were able to get the vast majority of individuals to target, which to me is a critically important point, and emblematic of the need for things that facilitate this kind of excellent care.”

DIAMOND was funded by Vifor Pharma. Dr. Butler disclosed receiving consulting fees from Abbott, Adrenomed, Amgen, Applied Therapeutics, Array, AstraZeneca, Bayer, Boehringer Ingelheim, CVRx, G3 Pharma, Impulse Dynamics, Innolife, Janssen, LivaNova, Luitpold, Medtronic, Merck, Novartis, Novo Nordisk, Relypsa, Sequana Medical, and Vifor Pharma. Dr. Januzzi disclosed receiving consultant fees or honoraria from Abbott Laboratories, Imbria, Jana Care, Novartis, Prevencio, and Roche Diagnostics; serving on a data safety monitoring board for AbbVie, Amgen, Bayer Healthcare Pharmaceuticals, Beyer, CVRx, and Takeda Pharmaceuticals North America; and receiving research grants from Abbott Laboratories, Janssen, and Vifor Pharma.

A version of this article first appeared on Medscape.com.

Several of the core medications for patients with heart failure with reduced ejection fraction (HFrEF) come with a well-known risk of causing hyperkalemia, to which many clinicians respond by pulling back on dosing or withdrawing the culprit drug.

But accompanying renin-angiotensin system–inhibiting agents with the potassium-sequestrant patiromer (Veltassa, Vifor Pharma) appears to shield patients against hyperkalemia enough that they can take more RASI medications at higher doses, suggests a randomized, a controlled study.

The DIAMOND trial’s HFrEF patients, who had current or a history of RASI-related hyperkalemia, added either patiromer or placebo to their guideline-directed medical therapy (GDMT), which includes, even emphasizes, the culprit medication. They include ACE inhibitors, angiotensin-receptor blockers (ARBs), angiotensin-receptor/neprilysin inhibitors (ARNIs), and mineralocorticoid receptor antagonists (MRAs).

Those taking patiromer tolerated more intense RASI therapy – including MRAs, which are especially prone to causing hyperkalemia – than the patients assigned to placebo. They also maintained lower potassium concentrations and experienced fewer clinically important hyperkalemia episodes, reported Javed Butler, MD, MPH, MBA, Baylor Scott and White Research Institute, Dallas, at the annual scientific sessions of the American College of Cardiology.

The apparent benefit from patiromer came in part from an advantage for a composite hyperkalemia-event endpoint that included mortality, Dr. Butler noted. That advantage seemed to hold regardless of age, sex, body mass index, HFrEF symptom severity, or initial natriuretic peptide levels.

Patients who took patiromer, compared with those who took placebo, showed a 37% reduction in risk for hyperkalemia (P = .006), defined as potassium levels exceeding 5.5 mEq/L, over a median follow-up of 27 weeks. They were 38% less likely to have their MRA dosage reduced to below target level (P = .006).

More patients in the patiromer group than in the control group attained at least 50% of target dosage for MRAs and ACE inhibitors, ARBs, or ARNIs (92% vs. 87%; P = .015).

Patients with HFrEF are unlikely to achieve best possible outcomes without GDMT optimization, but failure to optimize is often attributed to hyperkalemia concerns. DIAMOND, Dr. Butler said, suggests that, by adding the potassium sequestrant to GDMT, “you can simultaneously control potassium and optimize RASI therapy.” Many clinicians seem to believe they can achieve only one or the other.

DIAMOND was too underpowered to show whether preventing hyperkalemia with patiromer could improve clinical outcomes. But failure to optimize RASI medication in HFrEF can worsen risk for heart failure events and death. So “it stands to reason that optimization of RASI therapy without a concomitant risk of hyperkalemia may, in the long run, lead to better outcomes for these patients,” Dr. Butler said in an interview.

Given the drug’s ability to keep potassium levels in check during RASI therapy, Dr. Butler said, “hypokalemia should not be a reason for suboptimal therapy.”

Patiromer and other potassium sequestrants have been available in the United States and Europe for 4-6 years, but their value as adjuncts to RASI medication in HFrEF or other heart failure has been unclear.

“There’s a good opportunity to expand the use of the drug. The question is, in whom and when?” James L. Januzzi, MD, Massachusetts General Hospital, Boston, said in an interview.

Some HFrEF patients on GDMT “should be treated with patiromer. The bigger question is, should we give someone who has a history of hyperkalemia another chance at GDMT before we treat them with patiromer? Because they may not necessarily develop hyperkalemia a second time,” said Dr. Januzzi, who was on the DIAMOND endpoint-adjudication committee.

Among the most notable findings of the trial, he said, is that the number of people who developed hyperkalemia on RASI medication, although significantly elevated, “wasn’t as high as they expected it would be,” he said. “The data from DIAMOND argue that if a really significant majority does not become hyperkalemic on rechallenge, jumping straight to a potassium-binding drug may be premature.”

Physicians across specialties can differ in how they interpret potassium-level elevation and can use various cut points to flag when to stop RASI medication or at least hold back on up-titration, Dr. Butler observed. “Cardiologists have a different threshold of potassium that they tolerate than say, for instance, a nephrologist.”

Useful, then, might be a way to tell which patients are most likely to develop hyperkalemia with RASI up-titration and so might benefit from a potassium-binding agent right away. But DIAMOND, Dr. Butler said, “does not necessarily define any patient phenotype or any potassium level where we would say that you should use a potassium binder.”

The trial entered 1,642 patients with HFrEF and current or past RASI-related hyperkalemia to a 12-week run-in phase for optimization of GDMT with patiromer. The trial was conducted at nearly 400 centers in 21 countries.

RASI medication could be optimized in 85% of the cohort, from which 878 patients were randomly assigned either to continue optimized GDMT with patiromer or to have the potassium-sequestrant replaced with a placebo.

The patients on patiromer showed a 0.03-mEq/L mean rise in serum potassium levels from randomization to the end of the study, the primary endpoint, compared with a 0.13 mEq/L mean increase for those in the control group (P < .001), Dr. Butler reported.

The win ratio for a RASI-use score hierarchically featuring cardiovascular death and CV hospitalization for hyperkalemia at several levels of severity was 1.25 (95% confidence interval, 1.003-1.564; P = .048), favoring the patiromer group. The win ratio solely for hyperkalemia-related events also favored patients on patiromer, at 1.53 (95% CI, 1.23-1.91; P < .001).

Patiromer also seemed well tolerated, Dr. Butler said.

Hyperkalemia is “one of the most common excuses” from clinicians for failing to up-titrate RASI medicine in patients with heart failure, Dr. Januzzi said. DIAMOND was less about patiromer itself than about ways “to facilitate better GDMT, where we’re really falling short of the mark. During the run-in phase they were able to get the vast majority of individuals to target, which to me is a critically important point, and emblematic of the need for things that facilitate this kind of excellent care.”

DIAMOND was funded by Vifor Pharma. Dr. Butler disclosed receiving consulting fees from Abbott, Adrenomed, Amgen, Applied Therapeutics, Array, AstraZeneca, Bayer, Boehringer Ingelheim, CVRx, G3 Pharma, Impulse Dynamics, Innolife, Janssen, LivaNova, Luitpold, Medtronic, Merck, Novartis, Novo Nordisk, Relypsa, Sequana Medical, and Vifor Pharma. Dr. Januzzi disclosed receiving consultant fees or honoraria from Abbott Laboratories, Imbria, Jana Care, Novartis, Prevencio, and Roche Diagnostics; serving on a data safety monitoring board for AbbVie, Amgen, Bayer Healthcare Pharmaceuticals, Beyer, CVRx, and Takeda Pharmaceuticals North America; and receiving research grants from Abbott Laboratories, Janssen, and Vifor Pharma.

A version of this article first appeared on Medscape.com.

Several of the core medications for patients with heart failure with reduced ejection fraction (HFrEF) come with a well-known risk of causing hyperkalemia, to which many clinicians respond by pulling back on dosing or withdrawing the culprit drug.

But accompanying renin-angiotensin system–inhibiting agents with the potassium-sequestrant patiromer (Veltassa, Vifor Pharma) appears to shield patients against hyperkalemia enough that they can take more RASI medications at higher doses, suggests a randomized, a controlled study.

The DIAMOND trial’s HFrEF patients, who had current or a history of RASI-related hyperkalemia, added either patiromer or placebo to their guideline-directed medical therapy (GDMT), which includes, even emphasizes, the culprit medication. They include ACE inhibitors, angiotensin-receptor blockers (ARBs), angiotensin-receptor/neprilysin inhibitors (ARNIs), and mineralocorticoid receptor antagonists (MRAs).

Those taking patiromer tolerated more intense RASI therapy – including MRAs, which are especially prone to causing hyperkalemia – than the patients assigned to placebo. They also maintained lower potassium concentrations and experienced fewer clinically important hyperkalemia episodes, reported Javed Butler, MD, MPH, MBA, Baylor Scott and White Research Institute, Dallas, at the annual scientific sessions of the American College of Cardiology.

The apparent benefit from patiromer came in part from an advantage for a composite hyperkalemia-event endpoint that included mortality, Dr. Butler noted. That advantage seemed to hold regardless of age, sex, body mass index, HFrEF symptom severity, or initial natriuretic peptide levels.

Patients who took patiromer, compared with those who took placebo, showed a 37% reduction in risk for hyperkalemia (P = .006), defined as potassium levels exceeding 5.5 mEq/L, over a median follow-up of 27 weeks. They were 38% less likely to have their MRA dosage reduced to below target level (P = .006).

More patients in the patiromer group than in the control group attained at least 50% of target dosage for MRAs and ACE inhibitors, ARBs, or ARNIs (92% vs. 87%; P = .015).

Patients with HFrEF are unlikely to achieve best possible outcomes without GDMT optimization, but failure to optimize is often attributed to hyperkalemia concerns. DIAMOND, Dr. Butler said, suggests that, by adding the potassium sequestrant to GDMT, “you can simultaneously control potassium and optimize RASI therapy.” Many clinicians seem to believe they can achieve only one or the other.

DIAMOND was too underpowered to show whether preventing hyperkalemia with patiromer could improve clinical outcomes. But failure to optimize RASI medication in HFrEF can worsen risk for heart failure events and death. So “it stands to reason that optimization of RASI therapy without a concomitant risk of hyperkalemia may, in the long run, lead to better outcomes for these patients,” Dr. Butler said in an interview.

Given the drug’s ability to keep potassium levels in check during RASI therapy, Dr. Butler said, “hypokalemia should not be a reason for suboptimal therapy.”

Patiromer and other potassium sequestrants have been available in the United States and Europe for 4-6 years, but their value as adjuncts to RASI medication in HFrEF or other heart failure has been unclear.

“There’s a good opportunity to expand the use of the drug. The question is, in whom and when?” James L. Januzzi, MD, Massachusetts General Hospital, Boston, said in an interview.

Some HFrEF patients on GDMT “should be treated with patiromer. The bigger question is, should we give someone who has a history of hyperkalemia another chance at GDMT before we treat them with patiromer? Because they may not necessarily develop hyperkalemia a second time,” said Dr. Januzzi, who was on the DIAMOND endpoint-adjudication committee.

Among the most notable findings of the trial, he said, is that the number of people who developed hyperkalemia on RASI medication, although significantly elevated, “wasn’t as high as they expected it would be,” he said. “The data from DIAMOND argue that if a really significant majority does not become hyperkalemic on rechallenge, jumping straight to a potassium-binding drug may be premature.”

Physicians across specialties can differ in how they interpret potassium-level elevation and can use various cut points to flag when to stop RASI medication or at least hold back on up-titration, Dr. Butler observed. “Cardiologists have a different threshold of potassium that they tolerate than say, for instance, a nephrologist.”

Useful, then, might be a way to tell which patients are most likely to develop hyperkalemia with RASI up-titration and so might benefit from a potassium-binding agent right away. But DIAMOND, Dr. Butler said, “does not necessarily define any patient phenotype or any potassium level where we would say that you should use a potassium binder.”

The trial entered 1,642 patients with HFrEF and current or past RASI-related hyperkalemia to a 12-week run-in phase for optimization of GDMT with patiromer. The trial was conducted at nearly 400 centers in 21 countries.

RASI medication could be optimized in 85% of the cohort, from which 878 patients were randomly assigned either to continue optimized GDMT with patiromer or to have the potassium-sequestrant replaced with a placebo.

The patients on patiromer showed a 0.03-mEq/L mean rise in serum potassium levels from randomization to the end of the study, the primary endpoint, compared with a 0.13-mEq/L mean rise for those in the control group (P < .001), Dr. Butler reported.

The win ratio for a RASI-use score hierarchically featuring cardiovascular death and CV hospitalization for hyperkalemia at several levels of severity was 1.25 (95% confidence interval, 1.003-1.564; P = .048), favoring the patiromer group. The win ratio solely for hyperkalemia-related events also favored patients on patiromer, at 1.53 (95% CI, 1.23-1.91; P < .001).
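For readers unfamiliar with the win ratio, the following is a minimal Python sketch of the general idea, not the DIAMOND analysis itself. Every patient in one arm is paired with every patient in the other; each pair is compared on the highest-priority outcome first, moving down the hierarchy only on a tie; the win ratio is the number of pairs won by the treatment arm divided by the number it lost. The patient records, the field names (`cv_death`, `hk_hosp`), and the two-level hierarchy are hypothetical, and the sketch ignores follow-up time and censoring, which a real analysis has to handle.

```python
from itertools import product

# Hypothetical hierarchy: most important outcome first.
# Each field is 0 (no event) or 1 (event); lower is better.
HIERARCHY = ["cv_death", "hk_hosp"]


def compare(t, c, hierarchy):
    """Return 1 if treatment patient t wins, -1 if t loses, 0 if tied."""
    for key in hierarchy:
        if t[key] != c[key]:
            return 1 if t[key] < c[key] else -1
    return 0  # tied at every level


def win_ratio(treatment, control, hierarchy):
    """Compare all treatment-control pairs and return wins / losses."""
    wins = losses = 0
    for t, c in product(treatment, control):
        result = compare(t, c, hierarchy)
        if result > 0:
            wins += 1
        elif result < 0:
            losses += 1
    if losses == 0:
        raise ValueError("win ratio is undefined when there are no losses")
    return wins / losses


# Made-up records for illustration only (not trial data).
treatment = [
    {"cv_death": 0, "hk_hosp": 0},
    {"cv_death": 0, "hk_hosp": 1},
    {"cv_death": 0, "hk_hosp": 0},
]
control = [
    {"cv_death": 1, "hk_hosp": 0},
    {"cv_death": 0, "hk_hosp": 1},
    {"cv_death": 0, "hk_hosp": 0},
]

print(win_ratio(treatment, control, HIERARCHY))  # 5 wins / 1 loss -> 5.0
```

A value above 1 favors the treatment arm, which is how the reported win ratios of 1.25 and 1.53 should be read.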

Patiromer also seemed well tolerated, Dr. Butler said.

Hyperkalemia is “one of the most common excuses” from clinicians for failing to up-titrate RASI medicine in patients with heart failure, Dr. Januzzi said. DIAMOND was less about patiromer itself than about ways “to facilitate better GDMT, where we’re really falling short of the mark. During the run-in phase they were able to get the vast majority of individuals to target, which to me is a critically important point, and emblematic of the need for things that facilitate this kind of excellent care.”

DIAMOND was funded by Vifor Pharma. Dr. Butler disclosed receiving consulting fees from Abbott, Adrenomed, Amgen, Applied Therapeutics, Array, AstraZeneca, Bayer, Boehringer Ingelheim, CVRx, G3 Pharma, Impulse Dynamics, Innolife, Janssen, LivaNova, Luitpold, Medtronic, Merck, Novartis, Novo Nordisk, Relypsa, Sequana Medical, and Vifor Pharma. Dr. Januzzi disclosed receiving consultant fees or honoraria from Abbott Laboratories, Imbria, Jana Care, Novartis, Prevencio, and Roche Diagnostics; serving on a data safety monitoring board for AbbVie, Amgen, Bayer Healthcare Pharmaceuticals, Beyer, CVRx, and Takeda Pharmaceuticals North America; and receiving research grants from Abbott Laboratories, Janssen, and Vifor Pharma.

A version of this article first appeared on Medscape.com.

FROM ACC 2022

AI model predicts ovarian cancer responses

The model, using still-frame images from pretreatment laparoscopic surgical videos, had an overall accuracy rate of 93%, according to the pilot study’s first author, Deanna Glassman, MD, an oncologic fellow at the University of Texas MD Anderson Cancer Center, Houston.

Dr. Glassman described her research in a presentation given at the annual meeting of the Society of Gynecologic Oncology.

While the AI model successfully identified all excellent-response patients, it did classify about a third of patients with poor responses as excellent responses. The smaller number of images in the poor-response category, Dr. Glassman speculated, may explain the misclassification.

Researchers took 435 representative still-frame images from pretreatment laparoscopic surgical videos of 113 patients with pathologically proven high-grade serous ovarian cancer. Using 70% of the images to train the model, they used 10% for validation and 20% for the actual testing. They developed the AI model with images from four anatomical locations (diaphragm, omentum, peritoneum, and pelvis), training it using deep learning and neural networks to extract morphological disease patterns for correlation with either of two outcomes: excellent response or poor response. An excellent response was defined as progression-free survival of 12 months or more, and poor response as PFS of 6 months or less. In the retrospective study of images, after excluding 32 gray-zone patients, 75 patients (66%) had durable responses to therapy and 6 (5%) had poor responses.
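As a rough illustration of the 70/10/20 split described above, the Python sketch below shuffles 435 image indices and divides them into training, validation, and test sets. The function name `split_indices`, the fixed seed, and the choice to split at the level of individual images are assumptions; the presentation as reported here does not say how images were assigned to each set.

```python
import random


def split_indices(n_items, train_frac=0.70, val_frac=0.10, seed=0):
    """Shuffle item indices and split them into train/validation/test lists.

    Whatever is left after the train and validation portions becomes the
    test set, so the three parts always add up to n_items.
    """
    indices = list(range(n_items))
    random.Random(seed).shuffle(indices)  # seeded for reproducibility
    n_train = int(n_items * train_frac)
    n_val = int(n_items * val_frac)
    train = indices[:n_train]
    val = indices[n_train:n_train + n_val]
    test = indices[n_train + n_val:]
    return train, val, test


train, val, test = split_indices(435)
print(len(train), len(val), len(test))  # 304 43 88
```

Because 435 does not divide evenly, the exact counts depend on the rounding rule; the figures printed here come from this sketch, not from the study.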

The PFS was 19 months in the excellent-response group and 3 months in the poor-response group.

Clinicians have often observed differences in gross morphology within the single histologic diagnosis of high-grade serous ovarian cancer. The research intent was to determine whether AI could detect these distinct morphological patterns in the still-frame images taken at the time of laparoscopy and correlate them with the eventual clinical outcomes. Dr. Glassman and colleagues are currently validating the model with a much larger cohort and will look into clinical testing.

“The big-picture goal,” Dr. Glassman said in an interview, “would be to utilize the model to predict which patients would do well with traditional standard of care treatments and those who wouldn’t do well so that we can personalize the treatment plan for those patients with alternative agents and therapies.”

Once validated, the model could also be employed to identify patterns of disease in other gynecologic cancers or distinguish between viable and necrosed malignant tissue.

The study’s predominant limitation was the small sample size, which is being addressed in a larger ongoing study.

Funding was provided by a T32 grant, MD Anderson Cancer Center Support Grant, MD Anderson Ovarian Cancer Moon Shot, SPORE in Ovarian Cancer, the American Cancer Society, and the Ovarian Cancer Research Alliance. Dr. Glassman declared no relevant financial relationships.

FROM SGO 2022

About 19% of COVID-19 headaches become chronic

Approximately one in five patients who presented with headache during the acute phase of COVID-19 developed chronic daily headache, according to a study published in Cephalalgia. The greater the headache’s intensity during the acute phase, the greater the likelihood that it would persist.

The research, carried out by members of the Headache Study Group of the Spanish Society of Neurology, evaluated the evolution of headache in more than 900 Spanish patients. Because they found that headache intensity during the acute phase was associated with a more prolonged duration of headache, the team stressed the importance of promptly evaluating patients who have had COVID-19 and who then experience persistent headache.

Long-term evolution unknown

Headache is a common symptom of COVID-19, but its long-term evolution remains unknown. The objective of this study was to evaluate the long-term duration of headache in patients who presented with this symptom during the acute phase of the disease.

Recruitment for this multicenter study took place in March and April 2020. The 905 patients who were enrolled came from six level 3 hospitals in Spain. All completed 9 months of neurologic follow-up.

Their median age was 51 years, 66.5% were women, and more than half (52.7%) had a history of primary headache. About half of the patients required hospitalization (50.5%); the rest were treated as outpatients. The most common headache phenotype was holocranial (67.8%) of severe intensity (50.6%).

Persistent headache common

In the 96.6% of cases for which data were available, the median duration of headache was 14 days. The headache persisted at 1 month in 31.1% of patients, at 2 months in 21.5%, at 3 months in 19%, at 6 months in 16.8%, and at 9 months in 16.0%.

“The median duration of COVID-19 headache is around 2 weeks,” David García Azorín, MD, PhD, a member of the Spanish Society of Neurology and one of the coauthors of the study, said in an interview. “However, almost 20% of patients experience it for longer than that. When still present at 2 months, the headache is more likely to follow a chronic daily pattern.” Dr. García Azorín is a neurologist and clinical researcher at the headache unit of the Hospital Clínico Universitario in Valladolid, Spain.

“So, if the headache isn’t letting up, it’s important to make the most of that window of opportunity and provide treatment in that period of 6-12 weeks,” he continued. “To do this, the best option is to carry out preventive treatment so that the patient will have a better chance of recovering.”

Study participants whose headache persisted at 9 months were older and were mostly women. They were less likely to have had pneumonia or to have experienced stabbing pain, photophobia, or phonophobia. They reported that the headache got worse when they engaged in physical activity but less frequently manifested as a throbbing headache.

Secondary tension headaches

On the other hand, Jaime Rodríguez Vico, MD, head of the headache unit at the Jiménez Díaz Foundation Hospital in Madrid, said in an interview that, according to his case studies, the most striking characteristics of post–COVID-19 headaches “in general are secondary, with similarities to tension headaches that patients are able to differentiate from other clinical types of headache. In patients with migraine, very often we see that we’re dealing with a trigger. In other words, more migraines – and more intense ones at that – are brought about.”

He added: “Generally, post–COVID-19 headache usually lasts 1-2 weeks, but we have cases of it lasting several months and even over a year with persistent daily headache. These more persistent cases are probably connected to another type of pathology that makes them more susceptible to becoming chronic, something that occurs in another type of primary headache known as new daily persistent headache.”

Primary headache exacerbation

Dr. García Azorín pointed out that, among people who already have primary headache, it’s not uncommon for the condition to worsen after they become infected with SARS-CoV-2. However, many people differentiate the headache associated with the infection from their usual headache because after becoming infected, their headache is predominantly frontal, oppressive, and chronic.

“Having a prior history of headache is one of the factors that can increase the likelihood that a headache experienced while suffering from COVID-19 will become chronic,” he noted.

This study also found that, more often than not, patients with persistent headache at 9 months had migraine-like pain.

As for headaches in these patients beyond 9 months, “based on our research, the evolution is quite variable,” said Dr. Rodríguez Vico. “Our unit’s numbers are skewed due to the high number of migraine cases that we follow, and therefore our high volume of migraine patients who’ve gotten worse. The same thing happens with COVID-19 vaccines. Migraine is a polygenic disorder with multiple variants and a pathophysiology that we are just beginning to describe. This is why one patient is completely different from another. It’s a real challenge.”

Infections are a common cause of acute and chronic headache. The persistence of a headache after an infection may be caused by the infection becoming chronic, as happens in some types of chronic meningitis, such as tuberculous meningitis. It may also be caused by the persistence of a certain response and activation of the immune system or by the uncovering or worsening of a primary headache coincident with the infection, added Dr. García Azorín.

“Likewise, there are other people who have a biological predisposition to headache as a multifactorial disorder and polygenic disorder, such that a particular stimulus – from trauma or an infection to alcohol consumption – can cause them to develop a headache very similar to a migraine,” he said.

Providing prognosis and treatment

Certain factors can give an idea of how long the headache might last. The study’s univariate analysis showed that age, female sex, headache intensity, pressure-like quality, the presence of photophobia/phonophobia, and worsening with physical activity were associated with headache of longer duration. But in the multivariate analysis, only headache intensity during the acute phase remained statistically significant (hazard ratio, 0.655; 95% confidence interval, 0.582-0.737; P < .001).

When asked whether they planned to continue the study, Dr. García Azorín commented, “The main questions that have arisen from this study have been, above all: ‘Why does this headache happen?’ and ‘How can it be treated or avoided?’ To answer them, we’re looking into pain: which factors could predispose a person to it and which changes may be associated with its presence.”

In addition, different treatments that may improve patient outcomes are being evaluated, because to date, treatment has been empirical and based on the predominant pain phenotype.

In any case, most doctors currently treat post–COVID-19 headache on the basis of how similar the symptoms are to those of other primary headaches. “Given the impact that headache has on patients’ quality of life, there’s a pressing need for controlled studies on possible treatments and their effectiveness,” noted Patricia Pozo Rosich, MD, PhD, one of the coauthors of the study.

“We at the Spanish Society of Neurology truly believe that if these patients were to have this symptom correctly addressed from the start, they could avoid many of the problems that arise in the situation becoming chronic,” she concluded.

Dr. García Azorín and Dr. Rodríguez Vico disclosed no relevant financial relationships.

A version of this article first appeared on Medscape.com.

Approximately one in five patients who presented with headache during the acute phase of COVID-19 developed chronic daily headache, according to a study published in Cephalalgia. The greater the headache’s intensity during the acute phase, the greater the likelihood that it would persist.

The research, carried out by members of the Headache Study Group of the Spanish Society of Neurology, evaluated the evolution of headache in more than 900 Spanish patients. Because they found that headache intensity during the acute phase was associated with a more prolonged duration of headache, the team stressed the importance of promptly evaluating patients who have had COVID-19 and who then experience persistent headache.

Long-term evolution unknown

Headache is a common symptom of COVID-19, but its long-term evolution remains unknown. The objective of this study was to evaluate the long-term duration of headache in patients who presented with this symptom during the acute phase of the disease.

Recruitment for this multicenter study took place in March and April 2020. The 905 patients who were enrolled came from six level 3 hospitals in Spain. All completed 9 months of neurologic follow-up.

Their median age was 51 years, 66.5% were women, and more than half (52.7%) had a history of primary headache. About half of the patients required hospitalization (50.5%); the rest were treated as outpatients. The most common headache phenotype was holocranial (67.8%) of severe intensity (50.6%).

Persistent headache common

In the 96.6% cases for which data were available, the median duration of headache was 14 days. The headache persisted at 1 month in 31.1% of patients, at 2 months in 21.5%, at 3 months in 19%, at 6 months in 16.8%, and at 9 months in 16.0%.

“The median duration of COVID-19 headache is around 2 weeks,” David García Azorín, MD, PhD, a member of the Spanish Society of Neurology and one of the coauthors of the study, said in an interview. “However, almost 20% of patients experience it for longer than that. When still present at 2 months, the headache is more likely to follow a chronic daily pattern.” Dr. García Azorín is a neurologist and clinical researcher at the headache unit of the Hospital Clínico Universitario in Valladolid, Spain.

“So, if the headache isn’t letting up, it’s important to make the most of that window of opportunity and provide treatment in that period of 6-12 weeks,” he continued. “To do this, the best option is to carry out preventive treatment so that the patient will have a better chance of recovering.”

Study participants whose headache persisted at 9 months were older and were mostly women. They were less likely to have had pneumonia or to have experienced stabbing pain, photophobia, or phonophobia. They reported that the headache got worse when they engaged in physical activity but less frequently manifested as a throbbing headache.

Secondary tension headaches

On the other hand, Jaime Rodríguez Vico, MD, head of the headache unit at the Jiménez Díaz Foundation Hospital in Madrid, said in an interview that, according to his case studies, the most striking characteristics of post–COVID-19 headaches “in general are secondary, with similarities to tension headaches that patients are able to differentiate from other clinical types of headache. In patients with migraine, very often we see that we’re dealing with a trigger. In other words, more migraines – and more intense ones at that – are brought about.”

He added: “Generally, post–COVID-19 headache usually lasts 1-2 weeks, but we have cases of it lasting several months and even over a year with persistent daily headache. These more persistent cases are probably connected to another type of pathology that makes them more susceptible to becoming chronic, something that occurs in another type of primary headache known as new daily persistent headache.”

Primary headache exacerbation

Dr. García Azorín pointed out that it’s not uncommon that among people who already have primary headache, their condition worsens after they become infected with SARS-CoV-2. However, many people differentiate the headache associated with the infection from their usual headache because after becoming infected, their headache is predominantly frontal, oppressive, and chronic.

“Having a prior history of headache is one of the factors that can increase the likelihood that a headache experienced while suffering from COVID-19 will become chronic,” he noted.

This study also found that, more often than not, patients with persistent headache at 9 months had migraine-like pain.

As for headaches in these patients beyond 9 months, “based on our research, the evolution is quite variable,” said Dr. Rodríguez Vico. “Our unit’s numbers are skewed due to the high number of migraine cases that we follow, and therefore our high volume of migraine patients who’ve gotten worse. The same thing happens with COVID-19 vaccines. Migraine is a polygenic disorder with multiple variants and a pathophysiology that we are just beginning to describe. This is why one patient is completely different from another. It’s a real challenge.”

Infections are a common cause of acute and chronic headache. The persistence of a headache after an infection may be caused by the infection becoming chronic, as happens in some types of chronic meningitis, such as tuberculous meningitis. It may also be caused by the persistence of a certain response and activation of the immune system, or by the uncovering or worsening of a primary headache coincident with the infection, added Dr. García Azorín.

“Likewise, there are other people who have a biological predisposition to headache as a multifactorial disorder and polygenic disorder, such that a particular stimulus – from trauma or an infection to alcohol consumption – can cause them to develop a headache very similar to a migraine,” he said.

Providing prognosis and treatment

Certain factors can give an idea of how long the headache might last. The study’s univariate analysis showed that age, female sex, headache intensity, pressure-like quality, the presence of photophobia/phonophobia, and worsening with physical activity were associated with headache of longer duration. But in the multivariate analysis, only headache intensity during the acute phase remained statistically significant (hazard ratio, 0.655; 95% confidence interval, 0.582-0.737; P < .001).
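As a rough consistency check on the reported interval, the sketch below assumes that the confidence interval was computed symmetrically on the log scale. That is the usual convention for hazard ratios, but the article does not say how the interval was derived. Under that assumption, the point estimate should sit at the geometric mean of the two limits, and the width of the interval implies a standard error and a z statistic. The function name is illustrative and does not come from the study.

```python
import math

def summarize_log_scale_ci(lower, upper, z_crit=1.959964):
    """Recover the log-scale midpoint and standard error from a 95% CI.

    Assumes the interval has the form exp(log_hr +/- z_crit * se),
    which is an assumption not stated in the article.
    """
    log_lower, log_upper = math.log(lower), math.log(upper)
    log_mid = (log_lower + log_upper) / 2        # midpoint on the log scale
    se = (log_upper - log_lower) / (2 * z_crit)  # implied standard error
    return math.exp(log_mid), se

# Values reported in the article: HR 0.655, 95% CI 0.582-0.737.
point, se = summarize_log_scale_ci(0.582, 0.737)
z = math.log(0.655) / se

print(f"implied point estimate: {point:.3f}")
print(f"implied log-scale SE:   {se:.4f}")
print(f"z statistic:            {z:.2f}")
```

The implied point estimate rounds to 0.655, matching the reported hazard ratio, and the z statistic is about 7 in absolute value, which is consistent with the reported P < .001.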

When asked whether they planned to continue the study, Dr. García Azorín commented, “The main questions that have arisen from this study have been, above all: ‘Why does this headache happen?’ and ‘How can it be treated or avoided?’ To answer them, we’re looking into pain: which factors could predispose a person to it and which changes may be associated with its presence.”

In addition, different treatments that may improve patient outcomes are being evaluated, because to date, treatment has been empirical and based on the predominant pain phenotype.

In any case, most doctors currently treat post–COVID-19 headache on the basis of how similar the symptoms are to those of other primary headaches. “Given the impact that headache has on patients’ quality of life, there’s a pressing need for controlled studies on possible treatments and their effectiveness,” noted Patricia Pozo Rosich, MD, PhD, one of the coauthors of the study.

“We at the Spanish Society of Neurology truly believe that if these patients were to have this symptom correctly addressed from the start, they could avoid many of the problems that arise in the situation becoming chronic,” she concluded.

Dr. García Azorín and Dr. Rodríguez Vico disclosed no relevant financial relationships.

A version of this article first appeared on Medscape.com.

FROM CEPHALALGIA

Live-donor liver transplants for patients with CRC liver mets

Patients with colorectal cancer (CRC) and unresectable liver metastases usually have a poor prognosis, and for many, palliative chemotherapy is the standard of care.

“For the first time, we have been able to demonstrate [outside of Norway] that liver transplantation for patients with unresectable liver metastases is feasible with good outcomes,” lead author Gonzalo Sapisochin, MD, PhD, an assistant professor of surgery at the University of Toronto, said in an interview.

“Furthermore, this is the first time we are able to prove that living donation may be a good strategy in this setting,” Dr. Sapisochin said of the series of 10 cases that they published in JAMA Surgery.

The series showed “excellent perioperative outcomes for both donors and recipients,” noted the authors of an accompanying commentary. They said the team “should be commended for adding liver-donor live transplantation to the armamentarium of surgical options for patients with CRC liver metastases.”

However, they expressed concern about the relatively short follow-up of 1.5 years and the “very high” recurrence rate of 30%.

Commenting in an interview, lead editorialist Shimul Shah, MD, an associate professor of surgery and the chief of solid organ transplantation at the University of Cincinnati, said: “I agree that overall survival is an important measure to look at, but it’s hard to look at overall survival with [1.5] years of follow-up.”

Other key areas of concern are the need for more standardized practices and for more data on how liver transplantation compares with continued chemotherapy alone.

“I certainly think that there’s a role for liver transplantation in these patients, and I am a big fan of this,” Dr. Shah emphasized, noting that four patients at his own center have recently received liver transplants, including three from deceased donors.

“However, I just think that as a community, we need to be cautious and not get too excited too early,” he said. “We need to keep studying it and take it one step at a time.”

Moving from deceased to living donors

Nearly 70% of patients with CRC develop liver metastases, and when these are unresectable, the prognosis is poor, with 5-year survival rates of less than 10%.

The option of liver transplantation was first reported in 2015 by a group in Norway. Their study included 21 patients with CRC and unresectable liver tumors. They reported a striking improvement in overall survival at 5 years (56% vs. 9% among patients who started first-line chemotherapy).

But with shortages of donor livers, this approach has not caught on. Deceased-donor liver allografts are in short supply in most countries, and recent allocation changes have further shifted available organs away from patients with liver tumors.

An alternative is to use living donors. In a recent study, Dr. Sapisochin and colleagues showed that living-donor liver transplantation was viable and offered a survival advantage over deceased-donor liver transplantation.

Building on that work, they established treatment protocols at three centers – the University of Rochester (N.Y.) Medical Center, the Cleveland Clinic, and the University Health Network in Toronto.

Of 91 evaluated patients who were prospectively enrolled with liver-confined, unresectable CRC liver metastases, 10 met all inclusion criteria and received living-donor liver transplants between December 2017 and May 2021. The median age of the patients was 45 years; six were men, and four were women.

These patients all had primary tumors greater than stage T2 (six T3 and four T4b). Lymphovascular invasion was present in two patients, and perineural invasion was present in one patient.

The median time from diagnosis of the liver metastases to liver transplant was 1.7 years (range, 1.1-7.8 years).

At a median follow-up of 1.5 years (range, 0.4-2.9 years), recurrences had occurred in three patients. Kaplan-Meier estimates put the rate of recurrence-free survival at 62% and the rate of overall survival at 100%.
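A Kaplan-Meier estimate can fall below the crude proportion of recurrence-free patients (7 of 10 here) because patients with short follow-up are censored and leave the risk set before later recurrences are counted. The sketch below shows the product-limit calculation on hypothetical follow-up times. These values are made up for illustration and are not the study's patient-level data, and the function name is an assumption.

```python
def kaplan_meier(times, events):
    """Product-limit estimate of event-free survival.

    times  -- follow-up in years for each patient
    events -- True if the event (recurrence) occurred at that time,
              False if the patient was censored (event-free at last contact)
    Returns a list of (time, survival_estimate), one entry per event time.
    """
    at_risk = len(times)
    survival = 1.0
    curve = []
    for t in sorted(set(times)):
        n_events = sum(1 for ti, e in zip(times, events) if ti == t and e)
        n_leaving = sum(1 for ti in times if ti == t)
        if n_events:
            # Multiply by the fraction of at-risk patients who stay event-free.
            survival *= 1 - n_events / at_risk
            curve.append((t, survival))
        # Patients with an event or censored at t leave the risk set.
        at_risk -= n_leaving
    return curve

# Hypothetical cohort of 10 with 3 recurrences (NOT the study's data).
times = [0.4, 0.6, 0.8, 0.9, 1.1, 1.3, 1.5, 2.0, 2.4, 2.9]
events = [False, True, False, True, False, True, False, False, False, False]

curve = kaplan_meier(times, events)
for t, s in curve:
    print(f"{t:.1f} y: {s:.3f}")
```

With these made-up times, the final estimate is (8/9) × (6/7) × (4/5), or about 0.61, which is lower than the crude 0.70 because three patients were censored before the last recurrence.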

Rates of morbidity associated with transplantation were no higher than those observed in established standards for the donors or recipients, the authors noted.

Among transplant recipients, three patients had no Clavien-Dindo complications; three had grade II, and four had grade III complications. Among donors, five had no complications, four had grade I, and one had grade III complications.

All 10 donors were discharged from the hospital 4-7 days after surgery and recovered fully.

All three patients who experienced recurrences were treated with palliative chemotherapy. One died of disease after 3 months of treatment. As of the time of publication of the study, the other two had survived for 2 or more years following their live donor liver transplant.

Patient selection key

The authors are now investigating tumor subtypes, responses in CRC liver metastases, and other factors, with the aim of developing a novel screening method to identify appropriate candidates more quickly.

In the meantime, they emphasized that indicators of disease biology, such as the Oslo Score, the Clinical Risk Score, and sustained clinical response to systemic therapy, “remain the key filters through which to select patients who have sufficient opportunity for long-term cancer control, which is necessary to justify the risk to a living donor.”

Dr. Sapisochin reported receiving grants from Roche and Bayer and personal fees from Integra, Roche, AstraZeneca, and Novartis outside the submitted work. Dr. Shah disclosed no relevant financial relationships.

A version of this article first appeared on Medscape.com.
